CS 488 – Week 14

I have been working on cleaning up and refining my code as well as the frontend. I also worked on the final poster as well as the final version of the paper.

CS 488 – Week 13

I have been wrapping up my project and finishing the final paper and the poster. The obstacle remains the same: the model gives very erratic outputs for sentences with words that are not in its vocabulary, but works well with sentences that it has seen before. I will keep working on this and try to figure out a way to make it work, but this goal might be outside the scope of the project given the current workload and time.
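A minimal sketch of the vocabulary problem described above, assuming a simple whitespace tokenizer and an `<unk>` placeholder token. The helper names (`build_vocab`, `encode`, `oov_rate`) are hypothetical and are not taken from the project's code. It shows how unseen words collapse to a single id, which is one likely reason a model behaves erratically on new sentences.

```python
# Hypothetical helpers for illustration; not the project's actual code.
UNK = "<unk>"  # assumed placeholder for out-of-vocabulary words


def build_vocab(sentences, min_count=1):
    """Map each word seen at least min_count times to an integer id."""
    counts = {}
    for sentence in sentences:
        for word in sentence.lower().split():
            counts[word] = counts.get(word, 0) + 1
    vocab = {UNK: 0}
    for word in sorted(counts):
        if counts[word] >= min_count:
            vocab[word] = len(vocab)
    return vocab


def encode(sentence, vocab):
    """Turn a sentence into ids, sending unknown words to the <unk> id."""
    return [vocab.get(word, vocab[UNK]) for word in sentence.lower().split()]


def oov_rate(sentence, vocab):
    """Fraction of words in the sentence that the vocabulary has never seen."""
    words = sentence.lower().split()
    if not words:
        return 0.0
    return sum(word not in vocab for word in words) / len(words)


train = ["great deal on pizza", "great pizza near you"]
vocab = build_vocab(train)

seen = encode("great pizza", vocab)            # every word has its own id
unseen = encode("amazing sushi deal", vocab)   # two words collapse to <unk>
```

Checking `oov_rate` on an input before generating output is one cheap way to flag sentences the model is unlikely to handle well.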

CS 488 – Week 12

I worked on finishing the frontend, wrapping up my project, and figuring out what metrics to use to measure and compare my models. I also worked on the poster for my project. This upcoming week I will work on wrapping up my final paper and adding the description of my second model to it.

CS 488 – Week 11

I have finished the front end of the project, and am trying to wrap it up. One obstacle that I am facing is that my project proposed to have human testing, which will not be possible due to the current situation. I will be working on the poster in the next few days.

CS 488 – Week 10

Having implemented my first two models, I started working on the front end of my project for the user to interact with. This upcoming week I will keep working on the frontend as well as the poster and the paper.

CS 488 – Week 9

This past week I worked on my other model, which uses both engaging/readable and non-engaging/readable advertisements. I found that this model does not perform well because of the lack of parallel data and of shared sentence structure between the two kinds of sentences. For the next week, I will work on refining my initial model as well as writing the frontend for the project.

CS 488 – Update – Week 8

Since I uploaded the architecture design last week, this week I will go back to posting the normal updates here. I have been slowly working on my second model, which I will compare my initial model to. I have not faced any obstacles yet except the learning curve that comes with learning Keras, but since Keras is well documented, it does not take much time for me to figure out something that I am stuck on. In the upcoming week, I will keep working on the second model and plan to have it finished by the end of spring break.

CS 488 – Week 6

— Elevator Pitch —

My project aims to use a sequence to sequence encoder-decoder model to make text-based advertisements more engaging and readable. This will help businesses get an edge over their competitors by attracting new customers as well as retaining their existing customers by making sure that their advertisements are readable and engaging to their target audience. This will be done through the analysis of pre-existing advertisements which will then be used to train the model on how to restructure sentences to make them more readable and engaging.

CS 488 – Week 5

I finished writing my initial model and have trained it using a small sample dataset. The accuracy of my initial model is quite bad. I am currently researching how I can modify and improve the accuracy of my model as well as downloading a larger dataset to increase the accuracy. I also worked on the outline of the first draft of the final paper. Currently, the obstacle is the lack of accuracy of my model. This next week I will work on finishing up the first draft of the final paper as well as increasing the accuracy of my model.

CS 488 – Week 4

Last week I worked on collecting and preprocessing the data using the Groupon API. I also started learning about and implementing my autoencoder model. So far the obstacle has been the learning curve, but I have been extensively reading about neural networks and Keras and should be able to continue working on the project without any hiccups. Next week I plan to start my first draft of the paper as well as have a somewhat working version of the autoencoder model.

CS 488 – Week 3

Since a significant part of my project involves marketing, I was advised by my instructor to talk to Seth and other professors about how I should approach a dataset. After talking to them, I have decided that a good approach would be creating a dataset using the readability formulas. First I will calculate the average readability and then filter the dataset using that average readability. A marketing dataset has been extremely hard to find, but asking around has led me to the Groupon API. It lets me get 100 deals per second, which will help me easily scrape millions of deals in a few days. I plan to run a script in the background that does it. Since last week, I have also successfully implemented word2vec using Gensim, a Python library.
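The filtering step could look roughly like the sketch below. It assumes the Flesch Reading Ease formula as the readability measure (the post does not say which formula is used) and a crude vowel-group syllable counter. The function names and sample deals are made up for illustration.

```python
import re


def count_syllables(word):
    """Rough heuristic (an assumption): count groups of consecutive vowels."""
    groups = re.findall(r"[aeiouy]+", word.lower())
    return max(1, len(groups))


def flesch_reading_ease(text):
    """Flesch Reading Ease score; higher means easier to read."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        return 0.0
    syllables = sum(count_syllables(w) for w in words)
    return (206.835
            - 1.015 * (len(words) / len(sentences))
            - 84.6 * (syllables / len(words)))


def split_by_average(texts):
    """Split texts into (more readable, less readable) around the mean score."""
    scores = [flesch_reading_ease(t) for t in texts]
    mean = sum(scores) / len(scores)
    readable = [t for t, s in zip(texts, scores) if s >= mean]
    hard = [t for t, s in zip(texts, scores) if s < mean]
    return readable, hard


# Made-up sample deals, not real Groupon data.
deals = [
    "Get two pizzas for ten bucks.",
    "Complimentary consultation regarding comprehensive orthodontic rehabilitation.",
    "Save big on a spa day.",
]
readable, hard = split_by_average(deals)
```

Splitting around the mean gives two rough buckets. A stricter threshold, or a different readability formula, could be swapped in without changing the rest of the pipeline.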

CS 488 – Week 2

Due to a lack of usable datasets, after talking to my advisor and instructor I decided to modify my project to focus on readability and sentiment instead. I researched papers on readability and sentiment this last week and have started writing code using Python (Keras). My goal for next week is to have some working code for a trained network that produces more readable text. I still need to look a bit more into what counts as readable when it comes to marketing material.

CS 488 – Week 1 – Update

This week I worked on setting up Keras and completed a course on deep learning using Keras (Learn Keras: Build 4 Deep Learning Applications). As I prepped for implementing the project, one of the significant challenges I have encountered is finding an appropriate dataset to train my neural network. Since my project aims to make a business’s marketing material more engaging, an appropriate dataset with labeled data to set up a clear definition of what counts as engaging and what counts as non-engaging is necessary. After some research and talking to my advisor and the instructor, one of the parameters that I am now looking for while searching for datasets is data that might be labeled based on reading level/hard to read/easy to read. The main goal for next week as I move forward with my project is to have a concrete dataset that I can train my neural network with.

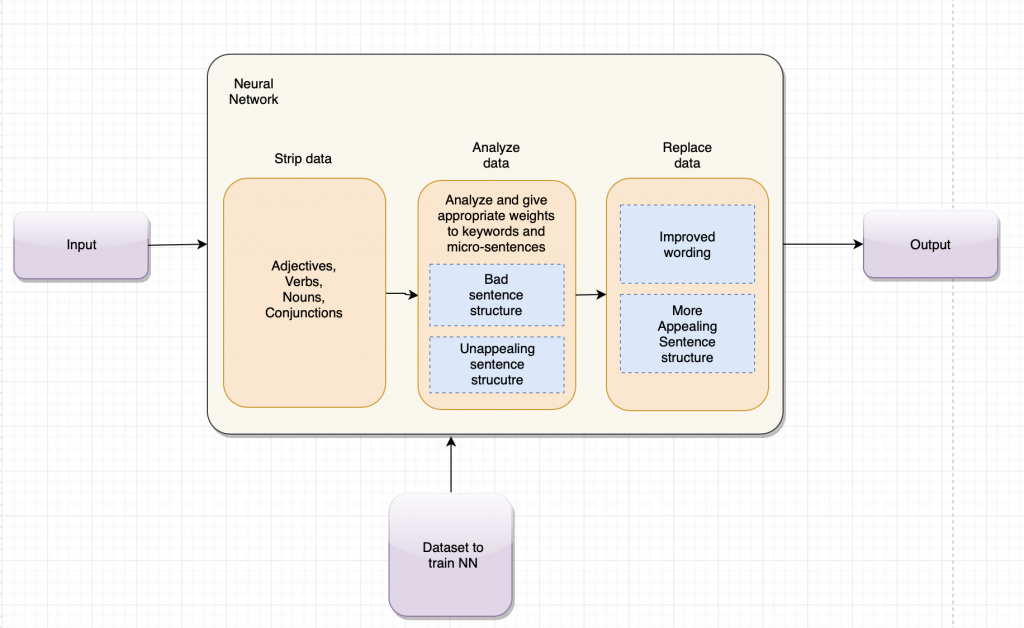

CS 388 – Week 15 – Updates

I have researched and read a few more papers in the last week. I have expanded upon my analyze -> split -> replace modules with actual implementation details using an encoder-decoder model to swap less engaging text with more engaging text. In order to do this, the text needs to be vectorized and then trained. I have also found a module that can help me achieve that. I have also extensively worked on my proposal presentation. I also met with my advisor and went over the presentation and was advised to explain the slides in a way that a person with no understanding of neural networks can understand what is being communicated.

CS 388 – Week 14 – Updates

This week I have been working on my presentation and have refined my proposal a bit. I will keep working on my presentation until the upcoming Wednesday as I wait for feedback on the second draft of my proposal.

CS 388 – Week 13 – Updates

I have mostly worked on the second draft of my proposal in the past week. I simplified and restructured my framework model. I also looked for more use cases of my project and decided what frameworks to use while implementing it.

CS 388 – Week 12 – Updates

In the past week, I have spent most of my time working on the first draft of the proposal. I decided to research and include a new category of papers in my proposal that I had not spent a lot of time on before. The new category that I included was “Sentiment Analysis.” While working on the proposal and refining the design of my framework, I realized that sentiment analysis, something that has been thoroughly covered by researchers of neural networks, is very close to my research, since I also need to know the sentiment behind the email/piece of text that is to be improved.

CS 388 – Week 11 – Updates

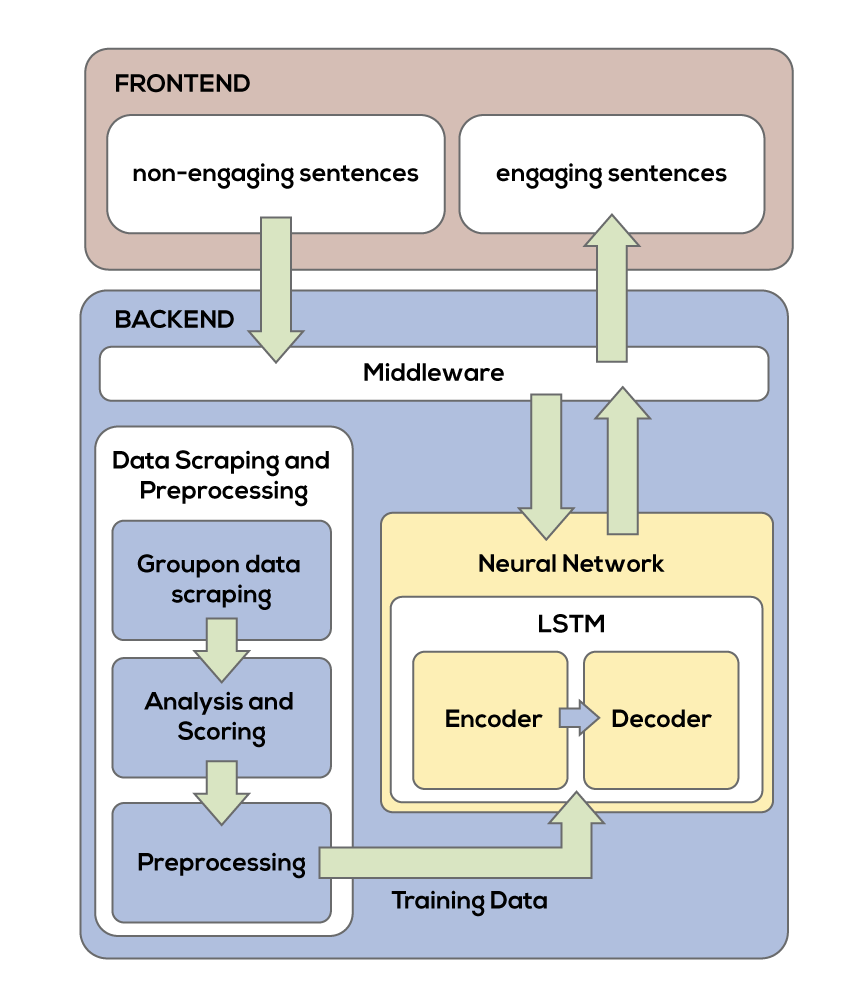

I extensively worked on the proposal last week. Reading more papers and writing out what I plan to do helped me figure out the scope of the proposed project. I also experimented a bit more with TensorFlow. I made some changes to my initial framework design to now include a frontend and backend for the end-user to interact with.

CS 388 – Week 10 – Updates

In order to get more familiar with neural networks, I decided to use a program that lets you create neural networks. To do this, I started reading about TensorFlow and TensorFlow graphs and their inner workings, like variables, constants and operations. I read some tutorials on TensorFlow and also studied the Keras model subclassing API, which is one of the building blocks of TensorFlow, to start building a simple neural network. I also searched for more papers that are similar to my research and read “Semantic expansion using word embedding clustering and convolutional neural network for improving short text classification” and “Semantic Clustering and Convolutional Neural Network for Short Text Categorization” in order to familiarize myself more with neural networks that are used for text classification.

CS 388 – Week 9 – Updates

This past week I worked on revising my literature review as well as writing my proposal outline, which will serve as a starting point for my first proposal draft. I met with my advisor, who helped me come up with a good starting dataset for my initial neural network. I will continue reading about neural networks and maybe try to implement a simple one in the upcoming weeks.

CS 388 – Week 8 – Updates

I read over 20 papers in the last two weeks to work on my literature review. I met with my advisor and talked to him about my proposal and what could be improved in my literature review. I have found some projects/papers that touch upon what my project aims to be. This will help me find and establish a starting point once I start working on my project. One of the challenges that I am currently facing is finding a dataset. I have come across a few datasets that I can use from kaggle.com.

This is what my initial model looks like (This does not delve deeper into how the neural networks are configured.)

CS 388 – Week 7 – Updates

I now know what idea I am going to go with. It’s a new idea and is not related to any of my old ideas. My new idea is about using neural networks and natural language processing to predict a better way to write emails or other forms of text in order to better engage the reader. This will be focused on business emails and other forms of business-related texts. I have read a lot of papers on neural networks in the past week and have spent most of my time writing my literature review on it.

CS388 – Week 6 – Update

I read more papers on my ideas, met with my advisor to schedule a weekly meeting and discussed a new idea proposed by the company I interned with this summer.

The new idea is a Neural Network driven A.I. that can learn and predict better ways to write emails, marketing campaigns and other forms of communication with the customer.

CS 388 – Week 5 – Update

Most of the articles I have read this week have been quite informative and take the time to explain even the most basic things. However, this has not been true for all the articles that I have read. Some of these articles assume that the reader is already familiar with the terminologies and technologies used in their research or study. Although this helps the reader dig deeper into the topic and come out with a better understanding of the study or research, it also means that doing a 1 or 2 pass reading often does not suffice in these cases. Along with reading research papers, I also worked on some technical diagrams for my projects this week.

CS 388 – Week 4 – Update

I did a 2 pass reading of all the sources that I had collected over the last two weeks. I realized that some of the things I had in mind already exist. I’m still researching pre-existing technologies/frameworks that could help me set up a reference point to build upon as I work on my project.

CS 388 – Week 3 – Update

I researched and made a list of papers that I will read in the upcoming week. Here is the list of papers that I plan to read:

Topic 1 Name: 3d Printer – A new Technique

Paper List:

(1) Innovations in 3D printing: a 3D overview from optics to organs (2013) -

Journal: British Journal of Ophthalmology

Authors: Carl Schubert, Mark C van Langeveld, Larry A Donoso

(2) Development of a 3D printer using scanning projection stereolithography (2015) -

Publication: Scientific Reports 2015

Authors: Michael P. Lee, Geoffrey J. T. Cooper, Trevor Hinkley, Graham M. Gibson, Miles J. Padgett & Leroy Cronin

(3) Printing 3D microfluidic chips with a 3D sugar printer (2015) -

Publication: Microfluidics and Nanofluidics

Authors: Yong He, Jing Jiang, Qiu Jian, Zhong Fu

(4) A Layered Fabric 3D Printer for Soft Interactive Objects (2015) -

Conference: 33rd Annual ACM Conference on Human Factors in Computing Systems

Authors: Huaishu Peng, Jennifer Mankoff, Scott E. Hudson, James McCann

(5) Recent advances in 3D printing of biomaterials (2015) -

Journal: Journal of Biological Engineering

Authors: Helena N Chia, Benjamin M Wu

Topic 2 Name: 3d – Drone Mapping

Paper List:

(1) Automatic wireless drone charging station creating essential environment for continuous drone operation (2016) -

Conference: International Conference on Control, Automation and Information Sciences (ICCAIS)

Authors: Chung Hoon Choi, Hyeon Jun Jang, Seong Gyu Lim, Hyun Chul Lim, Sung Ho Cho, Igor Gaponov

(2) INTEGRATED THREE-DIMENSIONAL LASER SCANNING AND AUTONOMOUS DRONE SURFACE (2013) -

Conference: 2013 ICS Proceedings

Authors: D. A. McFarlane, M. Buchroithner, J. Lundberg, C. Petters, W. Roberts, G. Van Rentergen

(3) Submodular Trajectory Optimization for Aerial 3D Scanning (2017) -

Conference: The IEEE International Conference on Computer Vision (ICCV)

Authors: Mike Roberts, Debadeepta Dey, Anh Truong, Sudipta Sinha, Shital Shah, Ashish Kapoor, Pat Hanrahan, Neel Joshi

(4) Google Street View: Capturing the World at Street Level (2010) -

Journal: Computer ( Volume: 43 , Issue: 6 , June 2010 )

Authors: Dragomir Anguelov, Carole Dulong, Daniel Filip, Christian Frueh, Stéphane Lafon, Richard Lyon

(5) Integrating Sensor and Motion Models to Localize an Autonomous AR.Drone (2011) -

Journal: International Journal of Micro Air Vehicles

Authors: Nick Dijkshoorn, Arnoud Visser

Topic 3 Name: AI-NLP multi platform application builder

Paper List:

(1) A Primer on Neural Network Models for Natural Language Processing (2016) -

Journal: Journal of Artificial Intelligence Research

Authors: Yoav Goldberg

(2) Natural Language Assistant: A Dialog System for Online Product Recommendation (2015) -

Publication: AI Magazine

Authors: Joyce Chai, Veronika Horvath, Nicolas Nicolov, Margo Stys, Nanda Kambhatla, Wlodek Zadrozny, Prem Melville

(3) Web-based models for natural language processing (2005) -

Journal: ACM Transactions on Speech and Language Processing (TSLP)

Authors: Mirella Lapata, Frank Keller

(4) Web 2.0-Based Crowdsourcing for High-Quality Gold Standard Development in Clinical Natural Language Processing (2013) -

Publication: J Med Internet Res

Authors: Haijun Zhai, Todd Lingren, Louise Deleger, Qi Li, Megan Kaiser, Laura Stoutenborough, Imre Solti

(5) The impact of cross-platform development approaches for mobile applications from the user's perspective (2016) -

Conference – Proceedings of the International Workshop on App Market Analytics

Authors: Iván Tactuk Mercado, Nuthan Munaiah, Andrew Meneely

CS 388 – Week 2 – Three Ideas

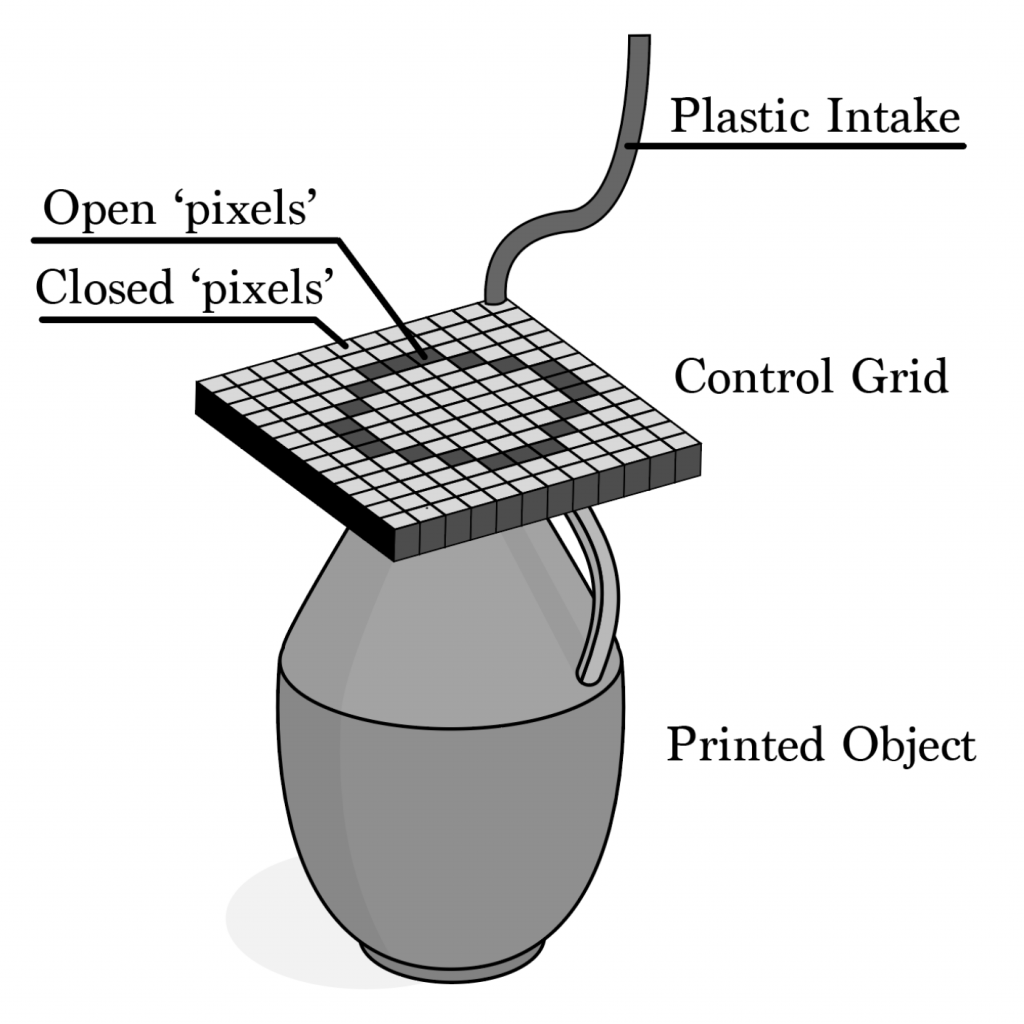

3d Printer

A new way of 3d printing. The implementation includes a pixel grid that is controlled by a computer. The 3d object is converted into layers, and each layer then controls the corresponding pixels the molten plastic flows through. Based on the layer, the pixels ‘open’ and ‘close’ to form the 3d object. The pixel grid moves up as it finishes a layer, forming the 3d object.

3d – Drone Mapping

A drone that creates a Google Street View-like map. A radius can be set using a mobile interface, and the drone will go around using pathfinding algorithms. It will take images that will be stitched together to create a 3d space, similar to Google Street View maps. This can be used to map and study deep cave systems.

AI-NLP multi platform application builder:

Based on natural language processing and AI – a program that takes in a description of the application that needs to be built. The AI will then use a natural language processor to convert the description to code. This can be useful for businesses that cannot afford to hire software developers to build their application.

CS388 – Week 1 – First Idea

Name of Your Project?

Pixel Printer

What research topic/question your project is going to address?

My project aims to improve the current process of 3d printing that is used for mainstream industrial 3d printing.

What technology will be used in your project?

- 3D-Printing

- A small computer (Raspberry Pi)

What software and hardware will be needed for your project?

- A pixel grid for the plastic, made of either wood or metal.

- Raspberry Pi or similar small computer to control the opening and closing of the pixel grid.

- Plastic to print the 3d object out of.

- Motors that will control the vertical movement of the pixel grid.

How are you planning to implement?

My implementation includes a pixel grid that is controlled by a computer. The 3d object is converted into layers, and each layer then controls the corresponding pixels the molten plastic flows through. Based on the layer, the pixels ‘open’ and ‘close’ to form the 3d object. The pixel grid moves up as it finishes a layer.
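As a rough software-side sketch of the idea above, the layer-to-pixel step might be modeled as follows. It assumes the object is already available as a voxel grid (`voxels[z][y][x]`). That representation, and the names `layer_masks` and `render`, are assumptions made for illustration; nothing here controls real hardware.

```python
def layer_masks(voxels):
    """Yield (layer_index, mask) from the bottom layer up.

    voxels[z][y][x] is 1 where the object has material and 0 elsewhere.
    In each mask, True means the pixel is open (plastic flows through)
    and False means it is closed.
    """
    for z, layer in enumerate(voxels):
        yield z, [[cell == 1 for cell in row] for row in layer]


def render(mask):
    """Draw a mask as text: '#' for an open pixel, '.' for a closed one."""
    return "\n".join(
        "".join("#" if is_open else "." for is_open in row) for row in mask
    )


# Toy object: a 3x3 base with a single-pixel column on top.
toy_object = [
    [[1, 1, 1], [1, 1, 1], [1, 1, 1]],
    [[0, 0, 0], [0, 1, 0], [0, 0, 0]],
]

masks = list(layer_masks(toy_object))
for z, mask in masks:
    print(f"layer {z}")
    print(render(mask))
```

In a physical build, each mask would be sent to the grid controller before the grid moves up by one layer height. The grid resolution sets the size of each mask.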

How is your project different from others? What’s new in your project?

My project is an entirely new concept. This process of 3D-printing does not exist. The current 3d printers consist of a nozzle through which the molten plastic flows; the nozzle then moves around forming the object, which is very time consuming. My process uses a pixel grid that allows molten plastic to flow through the pixels, forming the object.

What are the difficulties of your project? What problems might you encounter during your project?

- Since this is an entirely new process of 3d printing, I will not be able to use pre-existing online resources.

- I have no experience working with a raspberry pi and I’m not familiar with hardware programming.

- My process relies on the density of the grid. The more ‘pixels’ my grid contains, the more detailed the 3d object will be.

- Creating an extremely dense grid could be expensive and not viable in an academic setting.