Unsupervised Anomaly Detection in Brain MRI

A Comparative Analysis of a 3D Autoencoder and a 3D Denoising Diffusion Probabilistic Model

About Me:

My name is Kenny Shema, and I am a class of 2025 student pursuing a major in Computer Science and Economics.

Project:

My project consists of a personalized music recommendation system that uses real-time heartbeat data to suggest songs matching the user’s current mood or energy level. Built with a focus on integrating wearable sensor data and machine learning, the project aims to enhance emotional connection and user experience through adaptive, data-driven music selection.

Proposal:

Introduction


My name is Helena Aleluya José, and I’m an international student from Angola. I’m also a Computer Science and Theater double major, and an African and African-American Studies and Creative Writing double minor. As both a computer scientist and a playwright, I wanted to create a project that reflects the intersection of my passions. For my senior capstone, I developed a framework that applies topic modeling techniques (LDA) to a corpus of dramatic texts in order to study playwright originality.

Abstract

This capstone project aims to develop a novel approach for analyzing and comparing playwrights’ unique voices and styles by applying topic modeling techniques, specifically Latent Dirichlet Allocation (LDA), to a corpus of theater texts. By leveraging advanced natural language processing (NLP) methods, the project will preprocess and prepare a diverse collection of plays for topic modeling, implement custom algorithms tailored for dramatic literature, and analyze the discovered topics to identify recurring themes, character archetypes, and narrative structures prevalent across different playwrights’ works. Interactive visualization tools will be developed to facilitate the exploration and interpretation of these insights, enabling literary scholars and critics to better understand the creative processes and the influences that shape dramatists’ voices.

Keywords

Topic modeling techniques, textual analysis, LDA model, Digital Humanities

Karolayne Gaona

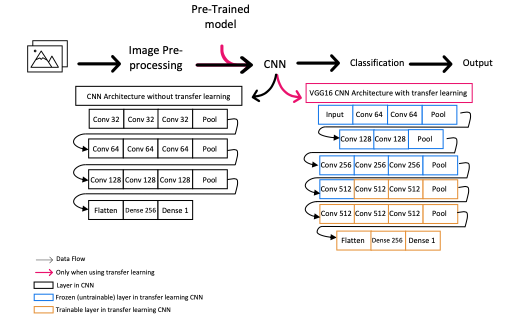

Pet identification is important for veterinary care, pet ownership, and animal welfare and control. This proposal presents a solution for identifying dog breeds using dog images. The proposed method applies a deep learning approach to identify the dog breeds. The starting point for this method is transfer learning by retraining existing pre-trained convolutional neural networks on the Stanford Dog database. Three classification architectures will be used. These classifiers will take images as input and generate feature matrices based on their architecture. The stages these classifiers will undergo to create feature vectors are 1) convolution, to generate feature maps, and 2) max pooling, in which the most prominent features are extracted from the feature maps. Data augmentation is applied to the database to improve classification performance.
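The two stages named above, convolution followed by max pooling, can be illustrated with a minimal pure-Python sketch. The toy image, the edge-detecting kernel, and the function names are assumptions made for illustration; they are not taken from the proposal, which relies on pre-trained CNN architectures rather than hand-written layers.

```python
from typing import List

Matrix = List[List[float]]


def convolve2d(image: Matrix, kernel: Matrix) -> Matrix:
    """Stride-1, no-padding cross-correlation, the operation CNN layers call convolution."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j]
                for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


def max_pool2d(feature_map: Matrix, size: int = 2) -> Matrix:
    """Non-overlapping max pooling; rows/columns that do not fill a window are dropped."""
    out_h = len(feature_map) // size
    out_w = len(feature_map[0]) // size
    return [
        [
            max(feature_map[r * size + i][c * size + j]
                for i in range(size) for j in range(size))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


# Made-up 5x5 "image" with a vertical edge between columns 1 and 2.
image = [[0, 0, 1, 1, 1] for _ in range(5)]
# Hypothetical 2x2 kernel that responds to dark-to-bright vertical edges.
kernel = [[-1, 1], [-1, 1]]

feature_map = convolve2d(image, kernel)  # 4x4 feature map
pooled = max_pool2d(feature_map)         # 2x2 map keeping the strongest responses
print(pooled)
```

In a real classifier the kernels are learned during training and many of them are applied in parallel, but the shape bookkeeping is the same as in this sketch.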

CS388-annotated-bib

The new version is the one used for the project but ver 5 was the last version to still include the sources for pitch A.

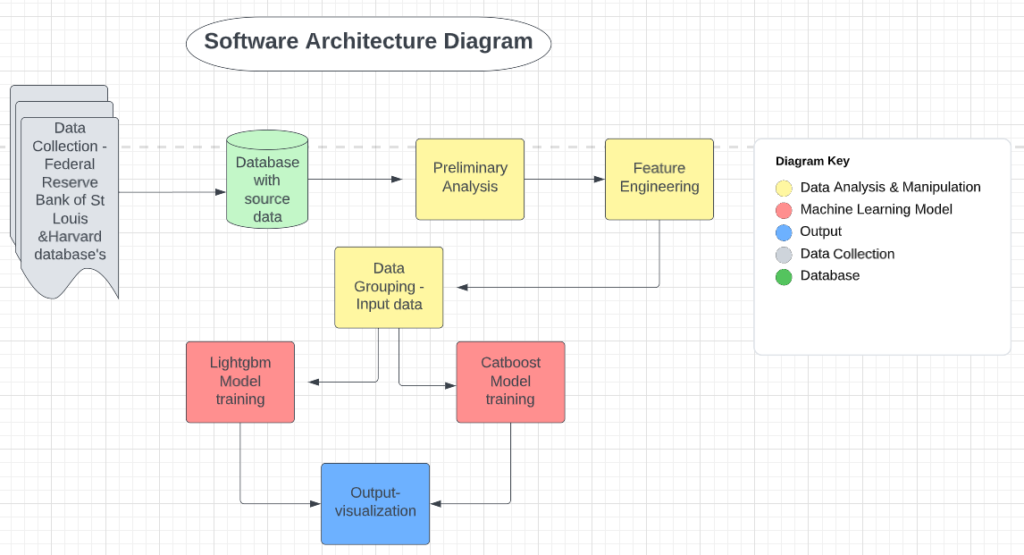

Building a textual dataset for the Generative Design in Minecraft Competition

Abstract:

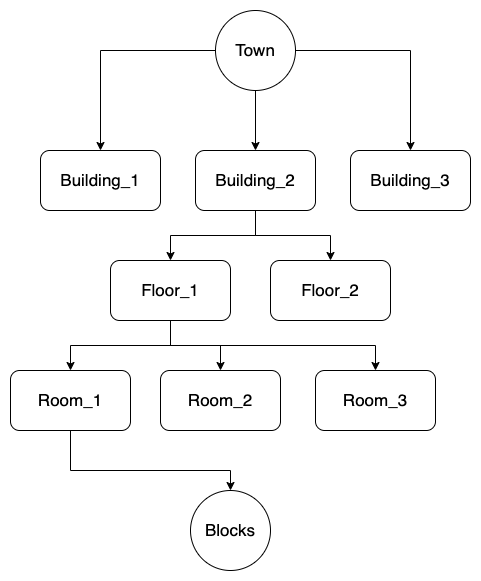

This paper presents the structure and design of a novel open-source textual database named the Brick Database for Minecraft settlements. Aimed at addressing the current lack of comprehensive datasets for model training in the Generative Design in Minecraft Competition (GDMC), this study explores the methodologies and potential challenges in creating a textual database and automatically detecting buildings and rooms.

Paper:

Software demonstration:

Poster:

Data diagram:

Final Three Pitches

- Machine learning for automated music composition

This project aims to build a system that uses machine learning algorithms to generate original music compositions based on existing ones. The project will study machine learning techniques for pattern recognition.

The feedback I received suggests that this pitch is more approachable than my first one, but I haven’t been able to get more feedback because nobody I asked has experience with this subject.

However, Doug asked whether there are machine learning packages that I could use to build my project, since I aim to produce a new work from already existing ones. So, do we have packages that can generate something new? Is it possible to configure training to produce something new?

I am currently getting in contact with the music department because there is a professor who teaches a class, “Making Music with Computer.”

I learned about software for music composition called “Max”, which is a visual programming language for music and multimedia. I also learned about authors such as David Cope, who has done a lot of work on artificial intelligence in music. I also investigated different types of algorithms for music composition and am currently looking for a specific problem or field to explore in this area.

Because of all the complexity involved, I need to figure out how to keep things simple.

- translational models

- mathematical models

- knowledge-based systems

- grammars

- optimization approaches

- evolutionary methods

- systems which learn

- hybrid systems
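As a simple illustration of the “systems which learn” category in the list above, here is a minimal sketch of a first-order Markov chain that learns note transitions from existing melodies and samples a new one. The note names, the tiny training corpus, and the function names are all made up for illustration; this is not the method chosen for the project, only one of the simplest ways to generate something new from existing material.

```python
import random
from collections import defaultdict
from typing import Dict, List


def train_transitions(melodies: List[List[str]]) -> Dict[str, List[str]]:
    """Record, for each note, every note that followed it in the training melodies."""
    transitions = defaultdict(list)
    for melody in melodies:
        for current, following in zip(melody, melody[1:]):
            transitions[current].append(following)
    return dict(transitions)


def generate(transitions: Dict[str, List[str]], start: str,
             length: int, seed: int = 0) -> List[str]:
    """Sample a melody by repeatedly choosing a note that followed the last one in training."""
    rng = random.Random(seed)
    melody = [start]
    while len(melody) < length:
        choices = transitions.get(melody[-1])
        if not choices:  # the last note never had a successor in training
            break
        melody.append(rng.choice(choices))
    return melody


# Hypothetical training corpus: two short melodies written as note names.
corpus = [["C", "D", "E", "C"], ["E", "G", "E", "D", "C"]]
transitions = train_transitions(corpus)
new_melody = generate(transitions, start="C", length=8)
print(new_melody)
```

Because repeated successors appear multiple times in each list, more frequent transitions are sampled more often, which is how the chain imitates the style of its training data.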

- Detecting non-child-appropriate content in videos.

Nowadays, it is very easy to access a lot of content online, including music, articles, games, videos, and movies. In the last couple of years, video platforms such as YouTube have become very important for education, entertainment, hobbies, etc. However, YouTube is a platform where anyone can upload content, and not all content is appropriate for everyone, because some of it may include sensitive material or offensive language. This project will aim to use deep learning and machine learning for video analysis. It will require image processing to handle video clips, and it can also draw on pattern recognition. The goal is to detect inappropriate images and audio in the videos.

Challenge: video processing.

Dataset: video files tagged when they are not appropriate for children.

Potential dataset:

https://zenodo.org/record/3632781 (Restricted)

https://figshare.com/articles/dataset/The_Image_and_video_dataset_for_adult_content_detection_in_image_and_video/14495367/1 (adult content detection)

YouTube Data API (metadata)

Good Resources

- Real-time sign language translator to text.

This project aims to use machine learning to accurately translate sign language to text in real time by capturing sign language gestures with a camera (most likely a computer’s webcam). The system should be able to recognize a variety of signs. I would like to study computer vision techniques.

From the input I received from Doug, this is a very ambitious project. We discussed which direction would be more feasible: translating from gestures to text, or from text to gestures. The first option seems more complicated because computer vision is a vast area. Beyond understanding computer vision techniques, the system will also need to recognize sign language and its context. To train a machine learning model, I will need a lot of data that may not be easy to obtain.

This project will also aim to have a friendly user interface, so that the translator is easy for the user to navigate.

- Machine learning for the prediction of stroke

Stroke is a cerebrovascular disease and a significant cause of death. It places significant health and financial burdens on the individual and on the healthcare system. Many machine learning models have been built to predict the risk of stroke or to automatically diagnose stroke using predictors such as lifestyle factors or images. However, there has not been an algorithm that can predict stroke using lab tests.

CS 388 Momo Hirose Pitches

Idea #1

My research question:

What algorithms can be used to simulate policy making?

Public policy should obviously be for all citizens, but people often feel that their voices and real needs are not reflected in policy. In my home country of Japan, I have witnessed many voices on Twitter (now called X) about the policies they want from the government. I would like to create software that can use government data to simulate and predict what the impact would be if the government actually implemented those policies. Such a tool would help in better evidence-based policy making.

- One example of a specific policy I would like to examine:

- The average salary in Japan has not changed much in the last 30 years, while taxes and commodity prices keep rising, causing poverty especially among young people. If the government raised the average salary by 50%, what would happen to Japan’s economy (both positively and negatively)?

- * Data on taxes, average salary, commodity prices and poverty in the country can be found on the government website.

- Average Salary in Japan 1989-2018: https://www.mhlw.go.jp/stf/wp/hakusyo/kousei/19/backdata/01-01-08-02.html

- Consumer Price Index ~2022: https://www.stat.go.jp/data/cpi/1.html

- Results of the analysis of the survey on relative poverty rates 1999~: https://www.mhlw.go.jp/seisakunitsuite/soshiki/toukei/dl/tp151218-01_1.pdf

- * These data are in Japanese, published by the government


Idea #2

My research question:

How can we combine speech recognition, AI, and data extraction to automatically and instantly display the data mentioned in public discussions?

In Japan, when public policies are made, they have to be discussed in the Council or the Diet (Japanese national congress) in order to be approved. However, these discussions are often not easy to understand for the citizens for the following two reasons.

- Politicians tend to criticise each other for trivial words or actions, so it is hard to understand the points of what is being discussed.

- What we, the citizens, see on television is only politicians arguing. Without visual aids it is even harder to follow the discussions.

If the data (graphs and numbers) are automatically displayed when politicians refer to data by recognising the voice, policy-making will be more evidence-based and discussions will be easier for citizens to understand.

- An example of a specific issue I would like to look at is Japan’s declining birth rate – this is often discussed in the National Diet, but because it is such a broad topic, the discussions sometimes get lost without data.

- https://www.shugiintv.go.jp/jp/

- * This link above is the website where you can access all the archives of live broadcasts of the National Diet.

- * The videos cannot be downloaded (only available for watching in the web browser)

- https://www.shugiin.go.jp/internet/itdb_shitsumon.nsf/html/shitsumon/menu_m.htm

- This government official website shows text data of the questions and answers that were discussed in the National Diet. They are listed by topics and available in PDF and HTML format. (* Only in Japanese, but I can translate for the purpose of this research)

Idea #3 Computational Modeling of National Budget

My research question:

What algorithms can be used to better allocate the national budget?

What I realized while working with the government agencies in Japan last summer was that the policy-making process focuses heavily on the rationale of policies, and after they are approved, the evaluation is not as important as it should be. This could lead to a situation where the national budget continues to be allocated to policies that are not effective. If we can create a system to quantify the impact of each policy and also get comments from citizens, and use an algorithm to allocate the national budget, I think we could have a better cycle of public policy.

- Since examining the entire national budget could be huge, here’s a specific topic I’m interested in investigating:

- The budget for developing the startup ecosystem in Japan

- How is it currently allocated to each policy?

- How effective are these policies?

- How should the budget be allocated in the future, using computer modelling?

- * Data and information on the development of the startup ecosystem and its budget can be found at the Japanese Ministry of Economy, Trade and Industry.

- https://www.meti.go.jp/shingikai/sankoshin/shin_kijiku/pdf/004_03_00.pdf

- * This document is an example of data that is available. It was published by the Ministry of Economy, Trade, and Industry.


6 Annotated bibliography – Tien

Facial Expression Recognition: This pitch will focus on facial expression recognition (FER) using convolutional neural networks (CNNs), which can help people who have difficulty communicating, support the analysis of human emotion, and contribute to the development of AI that assists people in their daily lives. Datasets: CK+: 48×48-pixel grayscale images with cropped faces; the emotions include anger (45 samples), disgust (59 samples), fear (25 samples), happiness (69 samples), sadness (28 samples), surprise (83 samples), neutral (593 samples), and contempt (18 samples). Tufts Face Database: more than 100,000 multi-modal face images of 74 females and 38 males from different age groups.

Suppressing uncertainties for large-scale facial expression recognition

Kai Wang, Xiaojiang Peng, Jianfei Yang, Shijian Lu, and Yu Qiao. 2020. Suppressing uncertainties for large-scale facial expression recognition. Proceedings of the IEEE/CVF conference on computer vision and pattern recognition (2020), 6897–6906. https://openaccess.thecvf.com/content_CVPR_2020/html/Wang_Suppressing_Uncertainties_for_Large-Scale_Facial_Expression_Recognition_CVPR_2020_paper.html

This paper addresses a specific problem in large-scale FER, namely the uncertainties caused by ambiguous facial expressions, low-quality facial images, and the subjectivity of annotators, by using the Self-Cure Network. Because it focuses on one problem in FER, it is a good example if we want our proposal to address a specific problem in the domain. It also mentions many good datasets for FER, and its citations include other algorithmic work on FER, which are good resources for our proposal. Related work in the field is also covered in detail to showcase the problem that the paper focuses on. The methods proposed in the paper are based on the observation that CNNs can be uncertain about their predictions.

Deep-emotion: Facial expression recognition using attentional convolutional network

Shervin Minaee, Mehdi Minaei, and Amirali Abdolrashidi. 2021. Deep-Emotion: Facial Expression Recognition Using Attentional Convolutional Network. Sensors 21, 9, 3046. https://www.mdpi.com/1424-8220/21/9/3046

This paper is about FER using attentional CNNs. It discusses the challenges of FER and how attentional CNNs can be used to address them. The authors propose a new attentional CNN architecture that is able to focus on important facial regions for emotion detection. Because I decided to use CNNs as the main method for processing the data in my paper, I can consider using the attentional CNN proposed in this paper. The proposed architecture consists of two main components: a feature extraction network and an attention network. The feature extraction network extracts features from the input image, while the attention network learns to focus on the most important facial regions for emotion detection.

Facial expression recognition: A survey

Yunxin Huang, Fei Chen, Shaohe Lv, and Xiaodong Wang. 2019. Facial expression recognition: A survey. Symmetry 11, 10, 1189. MDPI. https://www.mdpi.com/2073-8994/11/10/1189

This paper is a survey of FER for visible facial expressions, which provides a lot of necessary background knowledge such as terminology and difficulties in the field. It also walks through a thorough end-to-end FER process, including image processing, feature extraction, Gabor feature extraction, and expression classification. CNNs, Deep Belief Networks, Long Short-Term Memory, and Generative Adversarial Networks are introduced and cited with current works related to them.

Facial emotion recognition using transfer learning in the deep CNN

M. A. H. Akhand, Shuvendu Roy, Nazmul Siddique, Md Abdus Samad Kamal, and Tetsuya Shimamura. 2021. Facial emotion recognition using transfer learning in the deep CNN. Electronics 10, 9, 1036. MDPI. https://www.mdpi.com/2079-9292/10/9/1036

This paper focuses on deep CNNs and transfer learning (TL). CNNs are a popular technique for FER and one that I’m considering moving forward with. The paper also focuses on using these techniques to reduce development effort, which is a problem that none of the previous papers touch on. FER systems need to be able to handle occlusion, noise, and other challenges. Deep CNNs have been shown to be effective for FER tasks because they can learn complex features from images, which helps in recognizing facial expressions. Transfer learning is a technique where a pre-trained model is used as a starting point for a new model, which is useful for tasks with limited training data. In the paper, the authors adopt a pre-trained deep CNN model, replace its dense upper layer(s) with layer(s) compatible with FER, and then fine-tune the model with facial emotion data. This approach is reported to achieve remarkable accuracy on both the KDEF and JAFFE facial image datasets.

Local multi-head channel self-attention for facial expression recognition

Roberto Pecoraro, Valerio Basile, and Viviana Bono. 2022. Local multi-head channel self-attention for facial expression recognition. Information 13, 9, 419. MDPI. https://www.mdpi.com/2078-2489/13/9/419

This paper proposed Local multi-head Channel self-attention (LHC) in the context of computer vision and in facial expression recognition. LHC is a very new approach in the field of FER. This paper will be useful because the LHC module is a type of self-attention module that can be integrated into CNNs, and it has been shown to improve the performance of CNNs on FER tasks. In a CNN, each layer learns to extract different features from the input image. The LHC module allows the CNN to learn long-range dependencies between features, which can be helpful for FER.

Guided open vocabulary image captioning with constrained beam search

Peter Anderson, Basura Fernando, Mark Johnson, and Stephen Gould. 2016. Guided open vocabulary image captioning with constrained beam search. arXiv preprint arXiv:1612.00576. https://arxiv.org/abs/1612.00576

I read this paper for another class, and I think it would be interesting to incorporate it into my paper. The paper proposes a method for improving the performance of open vocabulary image captioning models, which are able to generate captions containing words that are not present in the training data. The authors introduce a technique called constrained beam search to guide the generation of captions: constrained beam search forces the generated captions to include certain words. Since FER uses labels such as “happy”, “sad”, “angry”, and “neutral”, constrained beam search could be used to force the system to include at least one of these words in each prediction.

Sentiment analysis of online food reviews: When people buy products online, the one thing that they tend to look closely at is the reviews of the product. Having good reviews and understanding the needs of customers through their reviews can help a business grow tremendously. A review usually comes in two parts: the rating and the review text. While the review text makes it easy to understand customers’ overall experiences without bias, the star rating tends to be less informative, and it is up to the viewer to interpret it. This project aims to perform sentiment analysis on a reviews dataset so that it provides more accurate feedback on the products. Another direction for this project is to analyze the negative language in recent reviews to let businesses know what they should focus on improving. The planned dataset is the Amazon Fine Food Reviews dataset, which contains data spanning more than 10 years (1999 to 2012). A backup plan is to replace this dataset by using DoorDashAPI to collect reviews of restaurants on DoorDash.

Comparative study of deep learning models for analyzing online restaurant reviews in the era of the COVID-19 pandemic

Yi Luo, and Xiaowei Xu. 2021. Comparative study of deep learning models for analyzing online restaurant reviews in the era of the COVID-19 pandemic. International Journal of Hospitality Management 94, 102849. Elsevier. https://www.sciencedirect.com/science/article/pii/S0278431920304011

The paper performs analysis on four features of 112,412 restaurants on Yelp and compares the outcomes of several algorithms. The data were collected using a web scraper, which is a method that we proposed for our paper if we can’t find a more recent dataset. The authors also describe the data cleaning process, which includes two procedures: tokenization and stopword removal. They evaluate two machine learning algorithms (a gradient boosting decision tree classifier and a random forest classifier) and two deep learning models (a bidirectional LSTM and a simple word-embedding model). They also discuss both theoretical and practical implications for future work, which is a good place to find motivation for our paper.
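The two cleaning procedures mentioned above, tokenization and stopword removal, can be sketched in a few lines. The stopword set here is a tiny hypothetical one chosen for the example (real pipelines typically use a much larger list), and the regular expression is one simple tokenization choice rather than the one used in the paper.

```python
import re
from typing import List

# Tiny, made-up stopword list for illustration only.
STOPWORDS = {"the", "a", "an", "and", "but", "was", "is", "it", "to", "of"}


def tokenize(text: str) -> List[str]:
    """Lowercase the text and split it into runs of letters and apostrophes."""
    return re.findall(r"[a-z']+", text.lower())


def remove_stopwords(tokens: List[str]) -> List[str]:
    """Drop tokens that carry little sentiment information on their own."""
    return [token for token in tokens if token not in STOPWORDS]


def clean(text: str) -> List[str]:
    """Full cleaning step: tokenization followed by stopword removal."""
    return remove_stopwords(tokenize(text))


print(clean("The pasta was great, but the service was slow."))
```

One design caveat: for sentiment analysis, negations such as “not” are usually kept out of the stopword list, since removing them can flip the meaning of a review.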

A survey on sentiment analysis methods, applications, and challenges

Mayur Wankhade, Annavarapu Chandra Sekhara Rao, and Chaitanya Kulkarni. 2022. A survey on sentiment analysis methods, applications, and challenges. Artificial Intelligence Review 55, 7, 5731-5780. https://link.springer.com/article/10.1007/s10462-022-10144-1

This paper provides background on sentiment analysis, much like the survey for FER, which gives us a good start in understanding how to approach sentiment analysis. It provides a thorough beginning-to-end description of the sentiment analysis process. I found it useful to read about the three main approaches: the lexicon-based approach, the machine learning approach, and the hybrid approach. It also introduces some common machine learning algorithms for sentiment analysis: Naive Bayes, support vector machines, and deep learning. Naive Bayes is easy to implement and can be trained on a relatively small dataset. Support vector machines can be trained to achieve high accuracy, but they can be more difficult to implement and require a larger dataset than Naive Bayes. Deep learning algorithms like recurrent neural networks and CNNs are more difficult to implement and require a large dataset to train.
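To make the point about Naive Bayes being easy to implement concrete, here is a minimal sketch of a multinomial Naive Bayes classifier with add-one (Laplace) smoothing. The class name, the four training reviews, and their labels are made up for illustration; the survey itself does not prescribe this exact implementation.

```python
import math
from collections import Counter, defaultdict
from typing import List


class NaiveBayesSentiment:
    """Multinomial Naive Bayes over tokenized documents with add-one smoothing."""

    def fit(self, docs: List[List[str]], labels: List[str]) -> "NaiveBayesSentiment":
        self.class_counts = Counter(labels)       # number of documents per class
        self.word_counts = defaultdict(Counter)   # word frequencies per class
        self.vocab = set()
        for tokens, label in zip(docs, labels):
            self.word_counts[label].update(tokens)
            self.vocab.update(tokens)
        return self

    def predict(self, tokens: List[str]) -> str:
        total_docs = sum(self.class_counts.values())
        scores = {}
        for label, n_docs in self.class_counts.items():
            score = math.log(n_docs / total_docs)  # log prior
            total_words = sum(self.word_counts[label].values())
            for token in tokens:
                if token not in self.vocab:
                    continue  # ignore words never seen in training
                count = self.word_counts[label][token]
                # Smoothed log likelihood of the word given the class.
                score += math.log((count + 1) / (total_words + len(self.vocab)))
            scores[label] = score
        return max(scores, key=scores.get)


# Hypothetical, already-tokenized training reviews.
train_docs = [["great", "food"], ["tasty", "fresh"],
              ["slow", "service"], ["cold", "bland", "food"]]
train_labels = ["pos", "pos", "neg", "neg"]

model = NaiveBayesSentiment().fit(train_docs, train_labels)
print(model.predict(["great", "fresh", "food"]))
print(model.predict(["slow", "cold"]))
```

Working in log space avoids numerical underflow when many small probabilities are multiplied, which matters for longer reviews.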

Sentiment analysis of restaurant reviews using hybrid classification method

M. Govindarajan. 2014. Sentiment analysis of restaurant reviews using hybrid classification method. Sentiment analysis of restaurant reviews using hybrid classification method 2, 1, 17-23. https://www.digitalxplore.org/up_proc/pdf/46-1393322636127-133.pdf

This paper compares the effectiveness of different methods for classifying restaurant reviews and examines whether it is beneficial to use ensemble techniques. It includes methods like Naive Bayes, Support Vector Machine, and Genetic Algorithm. I think it provides a broad look at what methods are available for classification, which can be helpful, but I am not sure how useful it will be for our paper, since we are not planning to explore the classification of restaurant reviews so much as their sentiment analysis. However, the number of papers available on the topic that I proposed is quite limited. If we can get access to one of the papers I list below, it could be a good resource, since they are more closely related to the direction in which I want to develop my proposal.

Sentiment analysis of customer reviews of food delivery services using deep learning and explainable artificial intelligence: Systematic review

Anirban Adak, Biswajeet Pradhan, and Nagesh Shukla. 2022. Sentiment analysis of customer reviews of food delivery services using deep learning and explainable artificial intelligence: Systematic review. Foods 11, 10, 1500. MDPI. https://www.mdpi.com/2304-8158/11/10/1500

This paper focuses on AI and DL for sentiment analysis. The authors explain how AI, ML, and DL relate to and build on one another. I think this paper acts as an overall guide to the techniques that I should use for my paper. It includes information about different AI methods that are used in sentiment analysis of customer reviews for food delivery services, as well as the challenges of using DL techniques on customer reviews.

“His lack of a mask ruined everything.” Restaurant customer satisfaction during the COVID-19 outbreak: An analysis of Yelp review texts and star-ratings

Maria Kostromitina, Daniel Keller, Muhittin Cavusoglu, and Kyle Beloin. 2021. “His lack of a mask ruined everything.” Restaurant customer satisfaction during the COVID-19 outbreak: An analysis of Yelp review texts and star-ratings. International journal of hospitality management 98, 103048. Elsevier. https://www.sciencedirect.com/science/article/pii/S0278431921001912

This paper is similar to what I might want to do for my pitch: it includes background information about the review text in relation to the choice of star-ratings. It also provides an interesting aspect of how Covid-19 affected the reviews of the customers, and how, depending on the situations, the reviews that the customers read might not help them make the right decision of choosing a good restaurant.

Sentiment analysis of customers’ reviews using a hybrid evolutionary svm-based approach in an imbalanced data distribution

Ruba Obiedat, Raneem Qaddoura, Al-Zoubi Ala’M, Laila Al-Qaisi, Osama Harfoushi, Mo’ath Alrefai, and Hossam Faris. 2022. Sentiment analysis of customers’ reviews using a hybrid evolutionary svm-based approach in an imbalanced data distribution. IEEE Access 10, 22260–22273. IEEE. https://ieeexplore.ieee.org/abstract/document/9706209/

This paper, in addition to proposing new techniques for sentiment analysis, addresses the problem of imbalanced datasets, which is a common problem in data analysis. Even though the paper doesn’t work with an English-language dataset, it is useful to see how the authors deal with imbalanced data. They use Naive Bayes, SVM, and Genetic Algorithm on the dataset, then compare the results with their proposed hybrid model built with all three classification methods.
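To show what handling an imbalanced dataset can look like in practice, here is a minimal sketch of random oversampling, which duplicates minority-class samples until every class is as large as the biggest one. This is a generic baseline swapped in for illustration, not the technique proposed in the paper, and the sample reviews and function name are made up.

```python
import random
from collections import Counter
from typing import List, Sequence, Tuple


def random_oversample(samples: Sequence[str], labels: Sequence[str],
                      seed: int = 0) -> Tuple[List[str], List[str]]:
    """Duplicate randomly chosen minority samples until all classes have equal size."""
    rng = random.Random(seed)
    by_class = {}
    for sample, label in zip(samples, labels):
        by_class.setdefault(label, []).append(sample)

    target = max(len(items) for items in by_class.values())
    out_samples, out_labels = [], []
    for label, items in by_class.items():
        # Draw with replacement to fill the gap to the largest class.
        extra = [rng.choice(items) for _ in range(target - len(items))]
        for sample in items + extra:
            out_samples.append(sample)
            out_labels.append(label)
    return out_samples, out_labels


# Hypothetical imbalanced review set: four positive reviews, one negative.
reviews = ["good", "great", "tasty", "fresh", "awful"]
labels = ["pos", "pos", "pos", "pos", "neg"]

balanced_reviews, balanced_labels = random_oversample(reviews, labels)
print(Counter(balanced_labels))
```

Oversampling should be applied only to the training split; duplicating samples before splitting would leak copies of the same review into the test set.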

3 Pitches – Polished version

Pitch 1: Reduce the encounter of local minima in heuristic search space. In the search space of heuristic algorithms, there are areas where the nodes appear to be closer to the goal state, but when the algorithm enters these areas, it actually spends more resources to reach the goal. These areas are called local minima. Local minima tend to arise more often when distance-to-go is used as the heuristic value instead of cost-to-go, which is an aspect that this project plans to explore. Avoiding local minima and understanding their behavior can help improve the performance of heuristic search algorithms. Overall, this project will explore the problem of local minima with beam search.

Risk: There is limited information on this topic and a lot of knowledge gaps that will need to be filled.
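The interaction between beam search and local minima described in Pitch 1 can be shown with a minimal sketch. The four-node graph and its heuristic values are made up so that node A looks closer to the goal than node B but is a dead end; the function name and signature are assumptions for illustration, not an established API.

```python
from typing import Callable, Hashable, Iterable, List, Optional


def beam_search(start: Hashable, goal: Hashable,
                neighbors: Callable[[Hashable], Iterable[Hashable]],
                heuristic: Callable[[Hashable], float],
                beam_width: int) -> Optional[List[Hashable]]:
    """Breadth-first expansion that keeps only the beam_width most promising paths per level."""
    if start == goal:
        return [start]
    beam = [[start]]
    visited = {start}
    while beam:
        candidates = []
        for path in beam:
            for node in neighbors(path[-1]):
                if node == goal:
                    return path + [node]
                if node not in visited:
                    visited.add(node)
                    candidates.append(path + [node])
        # Rank by heuristic value of the last node; lower means "looks closer".
        candidates.sort(key=lambda p: heuristic(p[-1]))
        beam = candidates[:beam_width]  # everything else is pruned for good
    return None


# Hypothetical graph: A has the lowest heuristic value but leads nowhere.
graph = {"S": ["A", "B"], "A": [], "B": ["G"], "G": []}
h = {"S": 4, "A": 1, "B": 3, "G": 0}

narrow = beam_search("S", "G", graph.__getitem__, h.__getitem__, beam_width=1)
wide = beam_search("S", "G", graph.__getitem__, h.__getitem__, beam_width=2)
print(narrow, wide)
```

With a beam width of 1 the search commits to the misleading node and fails, while a width of 2 keeps the alternative alive and reaches the goal, which is the trade-off between memory and robustness that the pitch proposes to study.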

Pitch 2: Facial expression recognition: classify facial expressions using machine learning. Problem: help people communicate, analyze human emotion, and contribute to the development of AI that assists people in their daily lives. Datasets: CK+: 48×48-pixel grayscale images with cropped faces; the emotions include anger (45 samples), disgust (59 samples), fear (25 samples), happiness (69 samples), sadness (28 samples), surprise (83 samples), neutral (593 samples), and contempt (18 samples). Tufts Face Database: more than 100,000 multi-modal face images of 74 females and 38 males from different age groups.

Risk: Finding a suitable machine learning algorithm and processing image data.

Pitch 3: Sentiment analysis of online food reviews. When people buy products online, the one thing that they tend to look closely at is the reviews of the product. Having good reviews and understanding the needs of customers through their reviews can help a business grow tremendously. A review usually comes in two parts: the rating and the review text. Users often mistakenly choose the wrong rating for a product, so the review text is more reliable in most situations. This project aims to perform sentiment analysis on a reviews dataset so that it provides more accurate feedback on the products. Another direction for this project is to analyze the negative language in recent reviews to let businesses know what they should focus on improving. The planned dataset is the Amazon Fine Food Reviews dataset, which contains data spanning more than 10 years (1999 to 2012). A backup plan is to replace this dataset by using DoorDashAPI to collect reviews of restaurants on DoorDash.

Risk: The dataset is not recent, so I will keep looking for a more recent one.

Annotated Bibliography

- Pitch #1: “Online Child Safety: Using deep learning to detect inappropriate video content for children.”:

In today’s digital world, where online content is easily accessible, my project aims to use deep learning and machine learning techniques, such as Neural Networks, to tackle the challenge of inappropriate content on video platforms like YouTube. Using advanced image processing and pattern recognition, my goal is to detect and flag unsuitable imagery and audio within videos. With a focus on creating a child-safe environment, I aim to build a comprehensive dataset of video file tags, making online platforms more secure for users of all ages and fostering a responsible and enjoyable digital experience.

Research question: How do different deep learning architectures, such as Convolutional Neural Networks (CNNs) and Recurrent Neural Networks (RNNs), compare with each other and with human judgment in the accuracy and efficiency of detecting inappropriate content on YouTube? What are the trade-offs in terms of computational resources and real-time processing?

Datasets:

https://zenodo.org/record/3632781 (Restricted)

https://figshare.com/articles/dataset/The_Image_and_video_dataset_for_adult_content_detection_in_image_and_video/14495367/1 (adult content detection)

YouTube Data API (metadata)

Good Resources:

- Bringing the Kid back into YouTube Kids: Detecting Inappropriate Content on Video Streaming Platforms

- Nowadays, YouTube (YT) has become a very popular platform used by a lot of people, including children. YT has a section for children called YT Kids, but content that is inappropriate for children sometimes slips onto the platform. In this research, the authors manually collected data from a range of videos and used deep learning as a filtering mechanism.

- The researchers designed and implemented a deep learning model specifically for the task of content classification. They used a variety of deep learning models, such as Convolutional Neural Networks (CNNs) and Recurrent Neural Networks (RNNs), to analyze elements of the video content, including visual, audio, and motion information. These models were chosen for their ability to learn complex patterns from raw data.

- Managing video data is more complex than managing a photo, for example, because there are more elements to evaluate, so they used a pre-trained CNN (VGG19) to extract relevant visual features from individual frames in a video. They used a bidirectional long short-term memory (LSTM) network, a type of RNN, to capture temporal relations in the motion features of videos. Finally, to process the audio, they used spectrograms to extract relevant audio features, which deep learning models can then analyze to detect inappropriate language.

- To process and classify the data, the extracted motion, audio, and visual features of each scene were combined into a single feature vector for that scene. This feature vector is then fed into a fully connected neural network layer, followed by a softmax layer for classification. In this way, the model combines all these features to make a decision about the content of each scene.

- This paper is relevant to my pitch because my project aims to use deep learning and machine learning for video analysis, which is also the focus of this paper, and because it deals with the same issue of content filtering and detection.
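A minimal sketch of the fusion step described in these notes: per-scene feature vectors are concatenated, passed through one fully connected layer, and turned into class probabilities with softmax. The feature sizes, the number of classes, and the random weights are all assumptions for illustration; none of them come from the paper.

```python
import numpy as np

def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    shifted = logits - np.max(logits)  # subtract the max for numerical stability
    exp = np.exp(shifted)
    return exp / exp.sum()

def classify_scene(visual, motion, audio, weights, bias):
    """Fuse the three feature vectors and apply one dense layer plus softmax."""
    fused = np.concatenate([visual, motion, audio])  # single feature vector per scene
    logits = weights @ fused + bias                  # fully connected layer
    return softmax(logits)

# Toy inputs (hypothetical sizes; a real model would learn the weights).
rng = np.random.default_rng(0)
visual = rng.normal(size=8)
motion = rng.normal(size=4)
audio = rng.normal(size=4)
num_classes = 3
weights = rng.normal(size=(num_classes, 16))
bias = np.zeros(num_classes)

probs = classify_scene(visual, motion, audio, weights, bias)
print(probs, probs.argmax())
```

In a trained model the weights would come from backpropagation; here they only show how the shapes fit together.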

- A Deep Learning-Based Approach for Inappropriate Content Detection and Classification of YouTube Videos

- This paper describes YT as a platform that attracts malicious uploaders who share inappropriate content targeting children. To address this issue, the article proposes a deep learning-based architecture for detecting and classifying inappropriate video content. It uses an ImageNet pre-trained CNN model, specifically EfficientNet-B7, to extract video descriptors. These descriptors are then processed by a bidirectional long short-term memory (BiLSTM) network to learn an effective video representation and classify the video. An attention mechanism is also incorporated into the network.

- Their proposed model uses a dataset of cartoon clips collected from YouTube videos, and the results are compared with traditional machine learning classifiers.

- This paper’s methodology has three steps. First, in video preprocessing, YouTube videos are split into small clips and labeled through manual annotation. Second, the CNN model pre-trained on ImageNet extracts deep features from the processed video frames. Third, the extracted features are fed to the BiLSTM network, which learns video representations. The output is then used for multiclass video classification.

- The data set of this paper contained a total of 111,561 video clips. Out of that number, 57,908 clips were labeled as safe, 27,003 as sexual nudity, and 26,650 as fantasy violence. This distribution was made to ensure a balanced dataset for training and evaluation.

- The researchers compared their model with different classifiers, such as Support Vector Machines (SVM), k-Nearest Neighbors (KNN), and Random Forest, that use EfficientNet features. They found that these machine learning classifiers were outperformed by their deep learning model.

- The article suggests that, as future work, combining temporal and spatial features and increasing the number of classes could further improve the model.

- My project focuses on the same problem domain as the article’s. My pitch attempts to use machine learning and deep learning as well. This article could potentially provide me with guidance for dataset collection.

- This article cited the previous article, “Bringing the Kid Back into YouTube Kids: Detecting Inappropriate Content on Video Streaming Platforms.”

- This article is directly related to my area of research. I could use some of its methodologies as a framework because it relies on a CNN model.

- Evaluating YouTube videos for young children

- This article discusses the evaluation of YT videos for small children. Its main concern is the quality and appropriateness of video content for children. The paper proposes a framework for evaluating the quality of YT videos for young children, noting that this information can be used by educators and YouTube creators. I found this relevant to my pitch because it can give me guidance for video classification.

- The article discusses YT’s official labeling for the classification of children’s content. The author argues that these classifications do not necessarily reflect how children learn from screen media.

- This study examines children’s interaction with screen media, develops a rubric for YT videos, and applies this rubric to YT videos.

- The rubric they made is based on criteria related to age appropriateness, content quality, design features, and learning objectives.

- They tested five videos with different content by human judgment.

- This paper can provide a framework for clearly understanding what is appropriate for children in YT videos.

- This paper discusses the use of Cohen’s Kappa to measure inter-rater reliability. Since my project will probably deal with a large dataset, I can use a similar statistical method to assess agreement between automated judgment and human judgment.
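As a rough illustration of how Cohen's Kappa could compare automated labels with human labels, here is a small sketch. The function name and the example labels are made up for this note. Kappa is computed as (observed agreement - chance agreement) / (1 - chance agreement).

```python
from collections import Counter

def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two equally long lists of categorical labels."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must label the same non-empty set of items")
    n = len(rater_a)
    # Fraction of items on which the two raters agree.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Agreement expected by chance, from each rater's label frequencies.
    counts_a = Counter(rater_a)
    counts_b = Counter(rater_b)
    expected = sum(counts_a[label] * counts_b[label] for label in counts_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used one identical label for everything
    return (observed - expected) / (1 - expected)

# Hypothetical labels for eight videos.
human = ["ok", "ok", "bad", "bad", "ok", "bad", "ok", "ok"]
model = ["ok", "ok", "bad", "ok", "ok", "bad", "bad", "ok"]
kappa = cohens_kappa(human, model)
print(round(kappa, 3))  # observed 0.75, chance 0.53125, so kappa is about 0.467
```

A value near 1 means strong agreement beyond chance, while a value near 0 means the agreement is about what chance alone would give.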

- A Deep-Learning Framework For Accurate And Robust Detection Of Adult Content

- This paper highlights the importance of filtering sensitive media, such as pornography and explicit content in general, on the internet. It talks about the negative consequences of exposing children to this content.

- The paper discusses older approaches to this problem, such as IP-based blocking, textual analysis, and statistical color models for skin detection. It then introduces the idea of transitioning from handcrafted features to deep neural networks.

- This paper proposes a deep-learning framework that uses the spatial and temporal characteristics of video sequences for adult content detection. The framework is based on a CNN architecture called Inception-v3 for spatial feature extraction. Temporal characteristics are modeled using long short-term memory (LSTM). The authors evaluated different deep-learning network architectures, such as VGGNet, ResNet, and Inception, and selected Inception-v3 as the most efficient for feature extraction.

- The dataset is the NPDI dataset, which contains explicit (pornographic) content as well as non-explicit content. This dataset is used for training and classification.

- After extracting features from images or video frames using color distribution, shape information, skin likelihood, etc., clustering techniques (k-means) and classifiers (Support Vector Machines, SVM, etc.) are used to separate explicit from non-explicit content.

- They used HueSIFT and space-time interest points with SVM and Random forest for better classification using statistical machine learning algorithms.

- The paper also mentions that deep learning models such as AlexNet and GoogLeNet have higher accuracy than traditional machine learning methods.

- The results show that the deep learning-based approach outperforms previous methods and achieves high accuracy, particularly when using LSTM networks.

- This paper has great visuals that may help me orient myself when creating the visuals and graphs for my paper. I probably won’t be using the dataset from this paper, but it is a great source for understanding how LSTM works and how it could be combined with a CNN architecture.

- Disturbed YouTube for Kids: Characterizing and Detecting Inappropriate Videos Targeting Young Children

- This paper mentions that many YT channels are for young children, but there is a significant amount of inappropriate content that is also targeted at young children.

- YT’s recommendation system sometimes suggests content that is not suitable for children.

- This research collected a dataset of videos, including inappropriate videos, videos for children, and random videos, and classified them as suitable, disturbing, restricted, or irrelevant.

- A deep learning classifier is developed to detect disturbing videos automatically.

- The dataset is collected using the YT Data API and multiple approaches to obtain seed videos. Manual annotation is performed to label videos.

- The analysis begins from seed videos that are appropriate for young children. From each seed, the authors repeatedly pick a video at random from the recommendations the platform offers, and a trained binary classifier predicts whether each recommended video is appropriate, keeping track of the result at every step. They then analyze these random walks and produce statistics.

- The authors trained a deep-learning classifier to classify the videos automatically. To train the classifier, the authors used a labeled dataset of videos.
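The random-walk idea from these notes can be sketched on a toy recommendation graph. Everything here is hypothetical: the graph, the video names, and the `is_appropriate` function, which stands in for the trained binary classifier.

```python
import random

def random_walk(recommendations, is_appropriate, seed_video, max_hops, rng):
    """Follow random recommendations from a seed video.

    Returns the hop at which an inappropriate video is first reached,
    or None if the walk ends without reaching one.
    """
    current = seed_video
    for hop in range(1, max_hops + 1):
        candidates = recommendations.get(current, [])
        if not candidates:
            return None  # no recommendations left to follow
        current = rng.choice(candidates)
        if not is_appropriate(current):
            return hop
    return None

# Toy graph: each video maps to the videos recommended next to it.
recommendations = {
    "seed": ["a", "b"],
    "a": ["seed", "x"],
    "b": ["a"],
    "x": [],
}
flagged = {"x"}  # stand-in for the classifier's "inappropriate" prediction

def is_appropriate(video):
    return video not in flagged

rng = random.Random(0)
hops = [random_walk(recommendations, is_appropriate, "seed", 10, rng) for _ in range(1000)]
reached = [h for h in hops if h is not None]
print(f"{len(reached) / len(hops):.0%} of walks reached a flagged video within 10 hops")
```

Repeating many walks and counting how often, and how quickly, a flagged video appears is one way to produce the kind of statistics the notes mention.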

- Very Deep Convolutional Networks For Large-Scale Image Recognition

- This paper talks about the impact of convolutional neural network (ConvNet) depth in the context of large-scale image recognition. It explores the use of deep networks with small (3×3) convolutional filters and shows that increasing network depth improves performance.

- They found that using small (3×3) convolutional filters and pushing the network depth to 16-19 layers can lead to a huge improvement.

- This paper focuses on ConvNets architecture and explores increasing the depth.

- Throughout the paper, the authors justify their decisions about input size, convolutional layers, etc. The training process involves optimizing the multinomial logistic regression objective using mini-batch gradient descent with momentum. Regularization techniques such as weight decay and dropout are used.

- At test time, a trained ConvNet classifies an input image. The image is rescaled to a predefined smallest side, denoted Q, which is the test scale.

- The network is applied densely over the rescaled test image. The fully connected layers are converted to convolutional layers, and the resulting fully convolutional network is applied to the whole uncropped image.

- This paper, like the other papers, will give me a better understanding of using CNN models on a big dataset of videos. I want to review it to study how the authors increased the depth of the network.
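One way to see why stacks of small 3×3 filters are attractive is to compare their receptive field and weight count with a single larger filter. The sketch below assumes stride-1 convolutions with the same number of input and output channels and ignores biases; the helper names are my own.

```python
def stacked_receptive_field(num_layers, kernel_size=3):
    """Receptive field of num_layers stride-1 convolutions stacked on top of each other."""
    return 1 + num_layers * (kernel_size - 1)

def conv_weights(kernel_size, channels, num_layers=1):
    """Weight count for conv layers with `channels` inputs and outputs (biases ignored)."""
    return num_layers * kernel_size * kernel_size * channels * channels

channels = 64
print(stacked_receptive_field(2))                    # 5: two 3x3 layers see a 5x5 region
print(stacked_receptive_field(3))                    # 7: three 3x3 layers see a 7x7 region
print(conv_weights(3, channels, num_layers=3))       # 110592 weights for three 3x3 layers
print(conv_weights(7, channels))                     # 200704 weights for one 7x7 layer
```

So three 3×3 layers cover the same 7×7 region as one 7×7 layer with fewer weights, while adding extra non-linearities between the layers.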

2. Pitch #2: Machine learning for music recognition:

This project aims to use machine learning for music genre recognition. In a world where music is everywhere, recognizing it can be a challenge. I will use machine learning techniques to teach the system to recognize the unique patterns and characteristics that define a music genre. I may try to replicate well-known models for music recognition and compare two of them to see which one is more efficient.

Research question: To optimize music genre recognition using machine learning, can we compare and evaluate the performance of traditional machine learning classifiers, such as logistic regression and support vector machines (SVMs), with deep learning architectures like convolutional neural networks (CNNs) using a diverse dataset? How do these algorithms perform in terms of accuracy?

Data sets:

- A Machine Learning Approach to Musical Style Recognition

- This article is about the applications of machine learning to music recognition. Its main problem is the challenge of making computers understand and perceive music beyond low-level features such as tempo.

- Research has largely avoided high-level inference, so building music-style classifiers is a challenge.

- This article proposes the development of a machine learning classifier for music style recognition.

- The data were collected by recording trumpet performances in different styles and then labeling them according to style.

- The machine learning techniques used are naive Bayesian classifier, linear classifier, and neural networks to build the style classifier.

- They trained the classifier on a portion of the data and then tested it on the rest of the data.

- There are a lot of challenges because music can be multifaceted. Also, selecting features by trying to capture the essence is not easy.

- There are a lot of overlapping styles in music.

- The training of the data can be time-consuming and requires a lot of human effort.

- This article could be relevant to my work to provide context and framework.

- Music Genre Classification using Machine Learning Algorithms: A comparison

- They built multiple classification models and trained them over the Free Music Archive (FMA) dataset without hand-crafted features.

- To classify the genre of a song, previous work has used both neural networks (NNs) and traditional machine learning. An NN has to be trained end to end on spectrograms of the audio signals. Machine learning algorithms, like logistic regression and random forest, use hand-crafted features from the time and frequency domains. Manually extracted features such as Mel-Frequency Cepstral Coefficients (MFCC), chroma features, and spectral centroid are used to classify the music into genres with ML algorithms like Logistic Regression, Random Forest, Gradient Boosting (XGB), and Support Vector Machines (SVM). The VGG-16 CNN model gave the highest accuracy.

- With deep learning algorithms, we can achieve the task of music genre classification without hand-crafted features.

- Convolutional neural networks (CNNs) are a great choice for classifying images. The 3-channel (R-G-B) matrix of an image is given to a CNN, which then trains itself on those images.

- In this project, the sound wave can be represented as a spectrogram, computed with the Short-Time Fourier Transform (STFT), which can then be treated as an image. The CNN processes the spectrograms by capturing patterns. Many other features are also extracted: statistical moments, zero-crossing rate, root mean square energy, tempo, etc.

- CNN accuracy was about 88%, but models such as LR and ANN may have higher accuracy.

- The dataset used is the Free Music Archive (FMA) dataset.

- This paper is relevant to my project because it provides a very detailed methodology that I may use as guidance.
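To make the spectrogram step concrete, here is a minimal NumPy-only sketch of a magnitude STFT. The frame size, hop length, sample rate, and test tone are assumptions for illustration, and a real project would more likely use an audio library than this hand-rolled version.

```python
import numpy as np

def stft_magnitude(signal, frame_size=256, hop=128):
    """Magnitude spectrogram: rows are frequency bins, columns are time frames."""
    window = np.hanning(frame_size)  # taper each frame to reduce spectral leakage
    num_frames = 1 + (len(signal) - frame_size) // hop
    frames = np.stack([
        signal[i * hop : i * hop + frame_size] * window
        for i in range(num_frames)
    ])
    return np.abs(np.fft.rfft(frames, axis=1)).T

# One second of a 1000 Hz sine wave sampled at 8000 Hz.
sample_rate = 8000
frame_size = 256
t = np.arange(sample_rate) / sample_rate
tone = np.sin(2 * np.pi * 1000 * t)

spec = stft_magnitude(tone, frame_size=frame_size, hop=128)
peak_bin = int(spec.mean(axis=1).argmax())
peak_hz = peak_bin * sample_rate / frame_size
print(spec.shape, peak_hz)  # the strongest bin sits at the tone's frequency
```

The resulting 2D array is what gets treated as an image and passed to the CNN.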

- Music instrument recognition using deep convolutional neural networks

- Identifying individual musical instruments in a mix of many instruments is very complicated. The research uses a deep CNN to attempt this task. Labeled instrument data is fed into the network, which can then estimate multiple instruments from audio signals of varying lengths.

- Mel spectrogram representation is used to convert audio data into matrix format.

- The neural network in this research is formed of 8 layers. The softmax function is also used to provide higher chances of identification.

- They use CNN for its convolutional layers, pooling layer, etc.

- This article could be relevant to my project because its techniques for extracting relevant data from the music dataset could help me prepare my own data. The information about deep learning, including CNNs, could also serve as a guide. I could explore similar research using a different model.

- The conversion of audio data into spectrograms is also essential information for my project.

- Music genre classification and music recommendation by using deep learning

- This paper talks about the importance of music in people’s lives and the need to classify music by genre.

- This paper reviews previous work on music classification, including time-frequency analysis, Mel Frequency Cepstral Coefficients, wavelet transformations, and support vector machines. The authors then introduce a convolutional neural network for extracting features from raw music spectrograms and mel spectrograms. They compared the performance of CNN-based methods with traditional processing methods.

- In this paper, the author proposes MusicRecNet, a new CNN-based model for music genre classification.

- They claimed that this model outperformed their previous classifier.

- The dataset used in this research is the GTZAN dataset, which contains 1000 music samples from ten genres to evaluate the model.

- Each music sample is divided into six 5-second parts, and a mel spectrogram is generated for each part. MusicRecNet, with three layers and additional features such as dropout, is trained on these spectrograms.

- They used various classification algorithms such as MLP, logistic regression, random forest, LDA, KNN, and SVM applied to the vectors.

- The accuracy of MusicRecNet is 81.8%, but when used with SVM, it is 97.6%.

- Music Genre Classification: A Comparative Study between Deep-Learning and Traditional Machine Learning Approaches

- This paper compares a deep learning convolutional neural network approach with five traditional off-the-shelf classifiers using spectrograms and content-based features. The experiment uses the GTZAN dataset, and the result is 66% accuracy.

- The paper introduces the importance of music and the role of genres in categorizing music. Music genre classification is identified as an Automatic Music Classification problem and part of Automatic Music Retrieval.

- The study uses automatic music genre classification using spectrogram images and content-based features extracted from audio signals. It uses deep learning CNN and traditional classifiers such as logistic regression, k-nearest neighbors, support vector machines, random forest, and multilayer perceptrons.

- The dataset has 1000 songs, each 30 seconds long. It includes raw audio files, extracted mel frequency cepstral coefficients spectrograms, and content-based features.

- For training, the dataset was duplicated and divided into 10,000 three-second song pieces.

- The spectrogram size was 217×315 pixels, and 57 features were selected, such as chroma short-time Fourier transform, root mean square (RMS) energy, spectral centroid, harmony, tempo, and zero-crossing rate.

- A CNN was then used for deep learning, consisting of an input layer followed by five convolutional blocks, each with specific layers. The 2D output was flattened into a 1D array.

- The traditional machine learning models were implemented using the scikit-learn library.
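The arithmetic behind the 10,000 pieces can be checked with a short sketch: cutting each 30-second clip into 3-second segments gives 10 segments per clip, and 1000 clips give 10,000 segments. The sample rate of 22,050 Hz and the silent placeholder clip are assumptions, not details taken from the paper.

```python
import numpy as np

def split_clip(samples, sample_rate, segment_seconds):
    """Cut a clip into equal, non-overlapping segments and drop any remainder."""
    segment_length = int(sample_rate * segment_seconds)
    num_segments = len(samples) // segment_length
    return [
        samples[i * segment_length : (i + 1) * segment_length]
        for i in range(num_segments)
    ]

sample_rate = 22050                  # assumed sample rate
clip = np.zeros(30 * sample_rate)    # placeholder for one 30-second song
segments = split_clip(clip, sample_rate, segment_seconds=3)

num_songs = 1000
print(len(segments), num_songs * len(segments))  # 10 segments per song, 10000 in total
```

The same helper with `segment_seconds=5` would give the six 5-second parts used in the MusicRecNet paper above.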

- Multimodal Deep Learning for Music Genre Classification

- This article discusses music genre classification using a multimodal deep-learning approach. They aim to develop a system that can automatically assign genre labels to music based on different types of data, including audio tracks, text reviews, and cover art.

- The authors proposed a two-step approach: 1) train a neural network for each modality (audio, text, etc.) on genre classification, and 2) extract intermediate representations from each network and combine them in a multimodal approach.

- The audio representation is learned from audio spectrograms using a CNN, the text representation from music-related texts using a feedforward network, and the visual representation using a residual network.

- The model uses weight matrices and hyperbolic tangent functions to embed audio and visual representations into the shared space.

- The dataset used is the Million Song Dataset, which consists of metadata. The data is split into training, validation, and test sets.

- According to the authors, combining the three modalities outperforms individual modalities.

CS388 Fall2023 Three Pitches

Saki Pitches

- Data visualization (information visualization)

I would like to create data visualizations about the share of women in STEM fields across different countries and eras. One visualization I have in mind is a map, since I would like to focus on regions. I would specifically like to explore why Korean women tend to choose STEM fields in college more than Japanese women, focusing on the education system, role models, and other social factors.

Data Sets Examples

https://www.oecd.org/gender/data/

https://statusofwomendata.org/explore-the-data/employment-and-earnings/additional-state-data/stem/

- Chatbot

I will create a chatbot that prospective and current students can ask about Earlham College. It will be a great research project in Human-Computer Interaction. One difficulty might be how to get information about Earlham into the database on which the chatbot will be based. I am also interested in designing its webpage at the same time.

- Evaluation and Redesign of Instagram

As a computer science major, I am particularly interested in web development. The problem I found interesting to solve is how people can live with social media without much stress. I often think that social media such as Instagram has become a platform for people to “show off” parts of their lives. I can research how user experience affects people’s mental health, evaluate the app on user experience aspects, and study user experience principles and their effects.


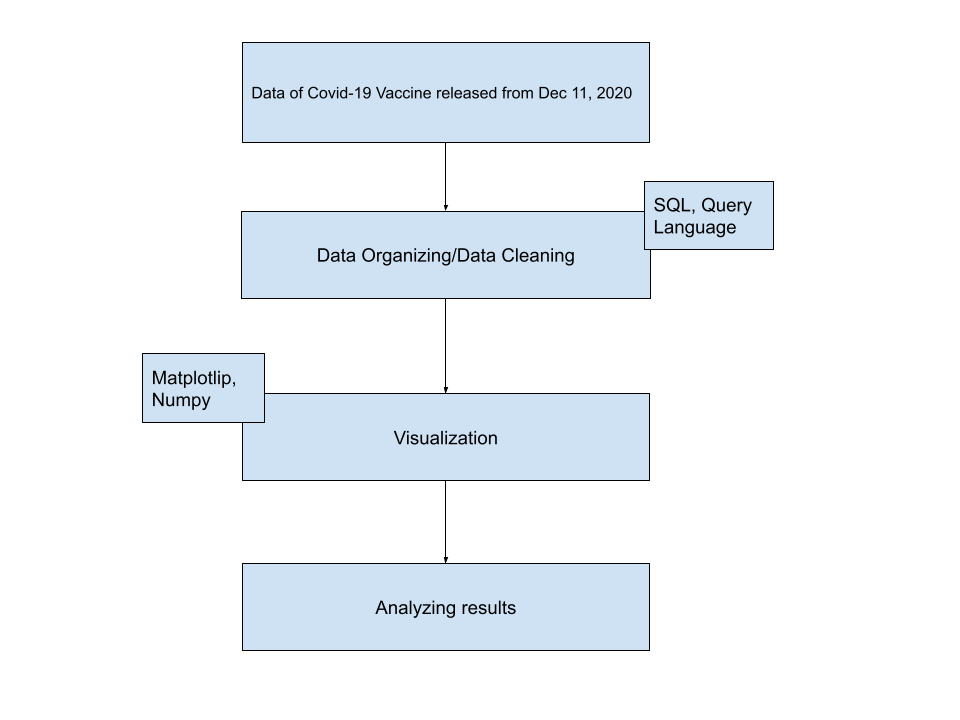

Andex Nguyen – Covid-19 Reception Rate in South-East Asian Countries

About Me:

I am Andex Nguyen, a Senior who’s majoring in Data Science.

Introduction:

Since 2020, when the pandemic first struck, Covid-19 has been a globally noteworthy issue. In this project, the questions I am trying to answer are how different countries in one region, specifically South-East Asian countries, responded to the vaccine, and what may be the reasons for the differences. Often, low vaccination rates stem from political issues, the economic and medical ability to acquire vaccines, and the belief systems of those countries. My country, Vietnam, did very well in preventing Covid-19 by enforcing strong quarantine policies as a first step, and we went on to secure an adequate supply of vaccines for our people. I want to see if the countries in the same area as my country did a similarly good job. I will also distinguish between people who are fully vaccinated and those who are still waiting to complete their vaccination process.

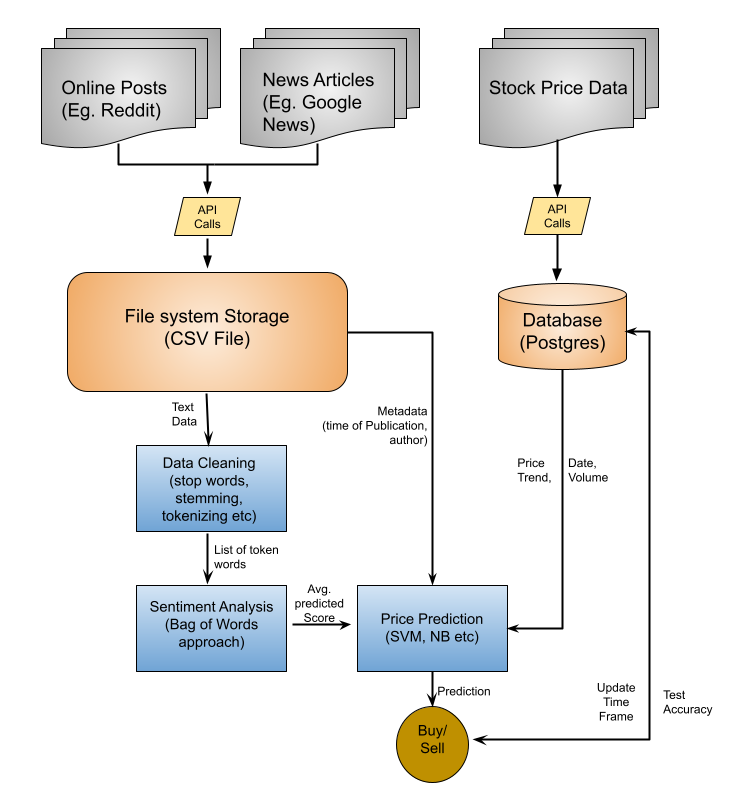

Data Architecture Diagram:

GitLab link:

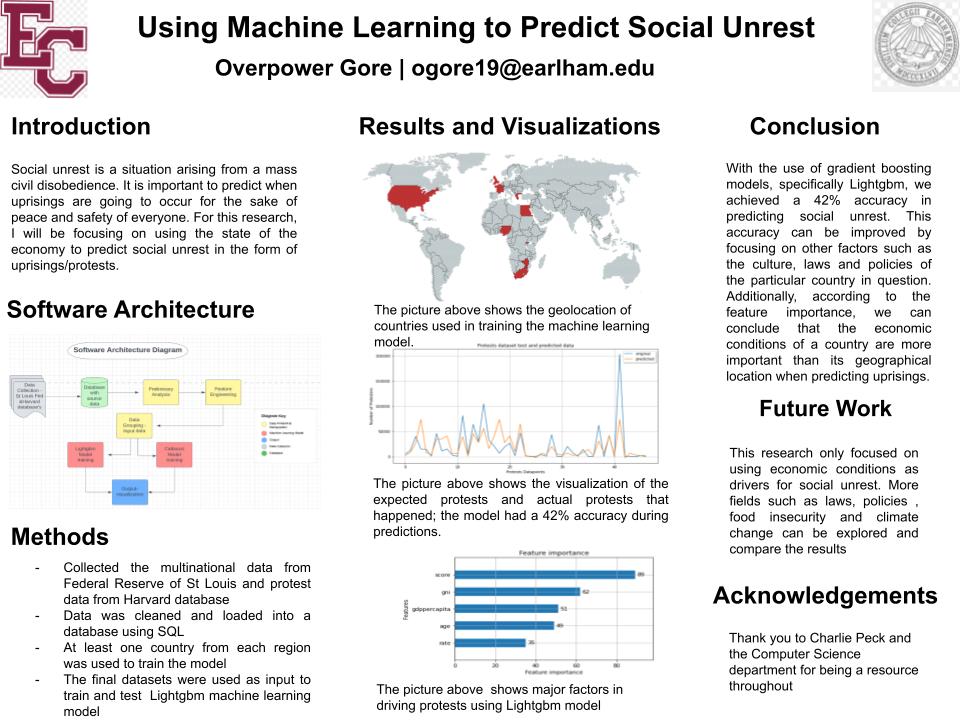

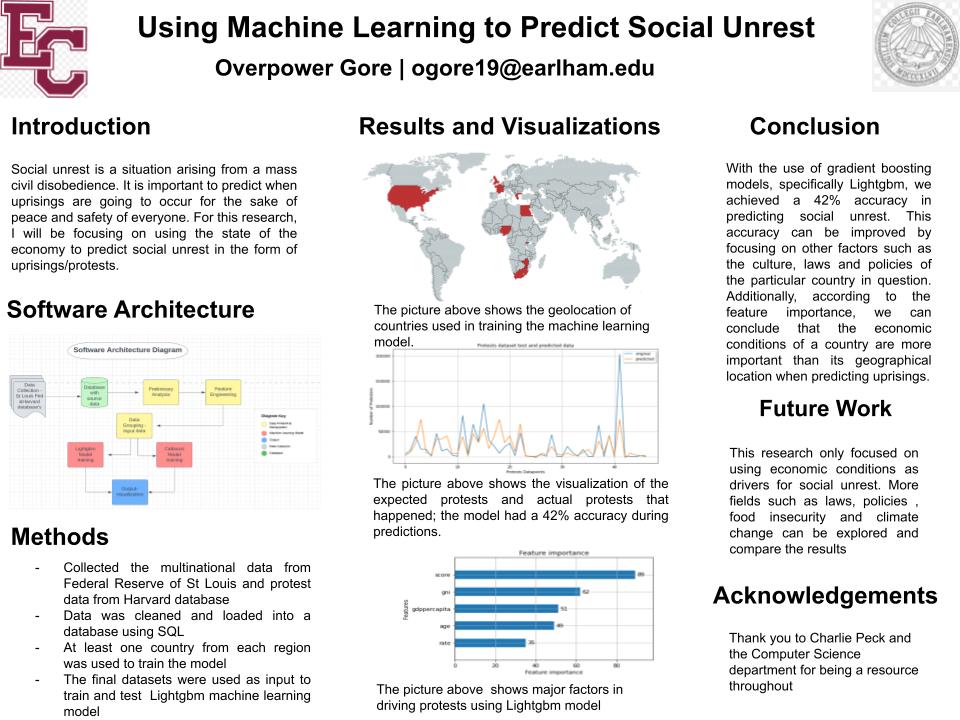

Abstract

Uprisings are acts of resistance or rebellion. An uprising could be the general population rising against the government (such as the protests in Zimbabwe over poor economic conditions), or it could be a group with morally questionable intentions acting against the general populace, such as Boko Haram in Nigeria. It is important to predict when uprisings are going to occur for the sake of everyone’s peace and safety. This leads to the question of how we can know before a social upheaval begins. Currently there is no official way to predict social unrest or a protest; people only know once a protest has already started. For this research, I will focus on using machine learning to study the effects of economic trends on social uprisings. I use the trained machine learning model to predict when social upheavals are likely to happen. The model will be continually improved through exploratory data analysis to see which financial metrics best reflect the economy at any given time, and those metrics will be used as input to the model. Continuously improving the accuracy of the model brings us closer to accurately predicting social unrest, and consequently to ensuring the peace and safety of the public in the event of a protest.

Introduction

Hi, my name is Overpower Gore but everyone calls me OPG. I am a senior Data Science student at Earlham College with a passion for information technology. I have held multiple internships impacting positive organizational outcomes through software development, data analysis, and data visualization.

CS 388 Annotated Bibliographies

1)

I am interested in completing research related to the use of machine learning to generate decision trees which control non-player character behavior in video games. Decision trees are relatively interpretable and have a high-level correspondence to behavior trees, which are used in the most common approach to AI for video games (Świechowski, 2022). I also enjoyed working with them when I took Artificial Intelligence and Machine Learning. One of the two environments I would propose using for testing is the Micro-Game Karting template in the Unity Asset Store, which is available for free and was used for testing by Mas’udi (2021).

Annotated Bibliography

Chan, M. T., Chan, C. W., & Gelowitz, C. (2015). Development of a Car Racing Simulator Game Using Artificial Intelligence Techniques. International Journal of Computer Games Technology, 2015.

- Has focus on emulating human behavior

- Use of waypoint system combined with conditional monitoring system

- Uses trigger detection because it detects objects within the trigger area, where ray-casting can only detect collisions in one direction

- Unity’s waypoint system and built-in gravity and vector calculations minimize development effort

This paper argues that Unity, as a development platform for a racing game, has advantages over the use of traditional AI search techniques for implementation of path-finding. These points could help to justify a decision to utilize Unity as an environment for testing.

Fairclough, C., Fagan, M., Mac Namee, B., & Cunningham, P. (2001). Research Directions for AI in Computer Games. Department of Computer Science, Trinity College, Dublin.

- Covers the role of AI in action games, adventure games, role-playing games, and strategy games

- Describes the status of AI within the game industry at the time of its publication

- Lists a wide range of benefits to exploring the field of game AI

- Discusses the TCD Game AI Project

This article gives a number of reasons for the simplicity of AI used by game developers in comparison to that used in academic research and industrial applications, and mentions a number of well-understood techniques in wide use at the time of its publication, including FSMs and A*. It also offers a number of reasons why research into AI for computer games is a worthwhile endeavor.

French, K., Wu, S., Pan, T., Zhou, Z., & Jenkins, O. C. (2019, May). Learning Behavior Trees from Demonstration. In 2019 International Conference on Robotics and Automation (ICRA) (pp. 7791-7797). IEEE.

- Focuses on how a behavior tree can be learned from demonstration

- Understands behavior trees as modular, transparent, reactive, and readily executable

- The control nodes of behavior trees are Sequence, Fallback, Parallel, and Decorator

- Algorithm 1 naively converts a decision tree to a behavior tree, while BT-Express takes advantage of the properties of a decision tree in doing so

- Robot uses behavior tree to execute task without human input

- Task executed is dusting with a micro fiber duster, requiring six distinct steps

This article gives advantages of behavior trees and describes two methods by which a decision tree can be converted to a behavior tree. This could be helpful in understanding the relationship between the decision trees which I intend to work with and the behavior trees that are prevalent in video game AI.
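To help me picture how the Sequence and Fallback control nodes behave, here is a minimal sketch. It is not BT-Express or any algorithm from the article: it leaves out the Parallel and Decorator nodes and the "running" status, and the dusting actions and state dictionary are hypothetical.

```python
SUCCESS, FAILURE = "success", "failure"

class Action:
    """Leaf node: runs a function on the shared state and reports the outcome."""
    def __init__(self, fn):
        self.fn = fn

    def tick(self, state):
        return SUCCESS if self.fn(state) else FAILURE

class Sequence:
    """Succeeds only if every child succeeds, trying them in order."""
    def __init__(self, *children):
        self.children = children

    def tick(self, state):
        for child in self.children:
            if child.tick(state) == FAILURE:
                return FAILURE
        return SUCCESS

class Fallback:
    """Tries children in order and succeeds as soon as one of them succeeds."""
    def __init__(self, *children):
        self.children = children

    def tick(self, state):
        for child in self.children:
            if child.tick(state) == SUCCESS:
                return SUCCESS
        return FAILURE

# Hypothetical dusting task.
def holding_duster(state):
    return state["holding_duster"]

def pick_up_duster(state):
    state["holding_duster"] = True
    return True

def dust(state):
    state["dusted"] = True
    return True

tree = Fallback(
    Sequence(Action(holding_duster), Action(dust)),  # dust right away if already holding
    Sequence(Action(pick_up_duster), Action(dust)),  # otherwise pick up the duster first
)
state = {"holding_duster": False, "dusted": False}
result = tree.tick(state)
print(result, state)
```

The first Sequence fails because the duster is not yet held, so the Fallback moves on to the second Sequence, which picks up the duster and then dusts.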

Guo, X., Singh, S., Lee, H., Lewis, R. L., & Wang, X. (2014). Deep Learning for Real-Time Atari Game Play Using Offline Monte-Carlo Tree Search Planning. Advances in Neural Information Processing Systems, 27.

- Environment used is the Arcade Learning Environment (ALE), a class of benchmark reinforcement learning (RL) problems

- Results for Deep Q-Network used as a basis

- Repeated incremental planning via the Monte Carlo Tree Search method UCT

- Combines UCT-based RL with deep learning by using a UCT agent to provide training data

- Tests three methods, the most successful of which trains a classifier-based convolutional neural network (CNN) through interleaving of training and data collection to obtain data for an input distribution bearing a closer resemblance to that which would be encountered by the trained CNN

This paper presents results from game-playing agents whose performance was tested on the ALE, an environment which includes a variety of games for the Atari 2600 and thus would allow for testing the performance of decision trees on a range of different games, as well as permitting straightforward evaluation of the agent’s performance through scores achieved on the seven games used to evaluate Deep Q-Network. It also offers an example of the use of a method that would be infeasible for real-time play to generate training data for a faster agent, which, similar to the use of machine learning to generate a decision tree, uses a time-intensive process to create a classifier which can make decisions in real time.

Mas’udi, N. A., Jonemaro, E. M. A., Akbar, M. A., & Afirianto, T. (2021). Development of Non-Player Character for 3D Kart Racing Game Using Decision Tree. Fountain of Informatics Journal, 6(2), 51-60.

- Waypoint system and raycasting used for pathfinding

- Decision trees used with waypoint system and raycasting to determine NPC actions

- Performance tested through FPS (frames per second), lap time over ten laps, and a driving test based on collisions with walls over ten laps

- Agent’s performance compared to that of the ML NPC provided with Karting Microgame

This article offers an example of the use of decision trees to control NPC behavior. The environment it uses, Micro-Game Karting, which is now known as Karting Microgame, also offers an accessible environment for testing the performance of decision trees.

Van Lent, M., Fisher, W., & Mancuso, M. (2004, July). An Explainable Artificial Intelligence System for Small-Unit Tactical Behavior. In Proceedings of the National Conference on Artificial Intelligence (pp. 900-907). AAAI Press; MIT Press.

- Full Spectrum Command, a commercial platform training aid developed for the U.S. Army, includes an Explainable AI system

- In the after-action review, a soldier within the game can answer questions about the current behavior of its platoon, and the AI system identifies key events

- Gameplay resembles the Real Time Strategy genre most closely, though with a number of differences

- NPC AI controls individual units, while Explainable AI works during the after-action phase

- NPC AI is divided into Control AI, which handles immediate responses and low-level decision-making, and Command AI, which handles higher-level tactical behavior

This paper offers an example of one approach to transparency of video game AI, that of creating a distinct system to explain its action in retrospect, as well as a different context in which explainability is relevant. It could be compared with the use of behavior trees and decision trees to allow for transparency in video games.

2)

I wish to complete research related to the use of databases in video games to improve the efficiency of computations by increasing data locality and taking advantage of the optimizations of database management systems. While the article and dissertation by O’Grady (2019, 2021) which are referenced below both appear to focus on the feasibility of that approach, it seems likely that additional exploration is warranted regarding demonstrable advantages. For this exploration, I would propose focusing on the execution of path-finding through a database management system, one of the main areas examined by O’Grady (2021). Specifically, I wish to focus on sub-optimal path-finding, since optimality is generally not essential in the context of video games (Botea, 2013), despite the widespread use of A* (Kapi, 2020).

Annotated Bibliography

Abd Algfoor, Z., Sunar, M. S., & Kolivand, H. (2015). A Comprehensive Study on Pathfinding Techniques for Robotics and Video Games. International Journal of Computer Games Technology, 2015.

- Covers techniques for known 2D/3D environments and unknown 2D environments, represented through either skeletonization or cell decomposition

- Grids can be divided into regular grids and irregular grids, both of which are widely used in video games

- As an alternative to grids, hierarchical techniques are used to reduce memory space needed.

This article offers an overview of the various pathfinding techniques used for video games and robotics. It is organized by environment representation and could be helpful in comparing and evaluating path-finding algorithms in order to determine which to use with a specific representation. It might also assist in deciding on an environment representation.

Bendre, M., Sun, B., Zhang, D., Zhou, X., Chang, K. C., & Parameswaran, A. (2015, August). Dataspread: Unifying databases and spreadsheets. In Proceedings of the VLDB Endowment International Conference on Very Large Data Bases (Vol. 8, No. 12, p. 2000). NIH Public Access.

- Data exploration tool called DataSpread

- Relational database system used is PostgreSQL

- Offers Microsoft Excel-based front end while managing all the data in a back-end database in parallel

- Spreadsheet design focuses on presentation of data, while databases have powerful data management capabilities

This paper describes a system created by unifying relational databases and spreadsheets, with the goal of retaining the advantages of spreadsheets while taking advantage of the power, expressivity, and efficiency of relational databases. The objective and approach of this research bear significant resemblances to those of O’Grady’s 2021 dissertation, in that both seek to increase the efficiency of a system by handling costly computations in a relational database. However, this article differs from O’Grady’s work in that it is based on a spreadsheet interface and an underlying database, rather than focusing on the implementation of specific components of a system within a database management system.

Botea, A., Bouzy, B., Buro, M., Bauckhage, C., & Nau, D. (2013). Pathfinding in games. Schloss Dagstuhl-Leibniz-Zentrum fuer Informatik.

- Explains A*, Manhattan distance, and Octile distance

- Discusses alternative heuristics such as the ALT heuristic, the dead-end heuristic, and the gateway heuristic

- Covers approaches involving hierarchical abstraction

- Discusses symmetry elimination, triangulation-based path-finding, and real-time search

- Covers compressed path databases and multi-agent path-finding

- Discusses a range of potential areas for further research, including the generation of sub-optimal paths

This paper explains the logic and advantages of various approaches to path-finding in video games. It also recognizes that in video game contexts, suboptimal paths are acceptable.
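The two distance heuristics named in the notes above can be written in a few lines. This is a minimal sketch assuming grid cells given as `(x, y)` tuples, a cost of 1 for straight moves, and a cost of the square root of 2 for diagonal moves; the function names are illustrative rather than taken from the paper.

```python
import math


def manhattan(a, b):
    """Heuristic for 4-connected grids: straight moves only."""
    return abs(a[0] - b[0]) + abs(a[1] - b[1])


def octile(a, b):
    """Heuristic for 8-connected grids: diagonal moves cost sqrt(2)."""
    dx, dy = abs(a[0] - b[0]), abs(a[1] - b[1])
    return max(dx, dy) + (math.sqrt(2) - 1) * min(dx, dy)
```

For cells `(0, 0)` and `(3, 4)`, the Manhattan distance is 7, while the octile distance is smaller because three of the moves can be taken diagonally.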

O’Grady, D. (2019). Database-Supported Video Game Engines: Data-Driven Map Generation. BTW 2019.

- Constructs maps composed of tiles through use of rules applied at specific frequencies

- Map generation implemented in SQL and run on PostgreSQL 10

- OpenRA used for on-site demonstration

This paper provides a demonstration of how a portion of a game’s internals can be moved to a database system. Its goal is to show that the benefits of moving expensive computations to a database system can be obtained while still allowing straightforward modification of the process by game developers.

O’Grady, D. (2021). Bringing Database Management Systems and Video Game Engines Together (Doctoral dissertation, Eberhard Karls Universität Tübingen).

- Implementing data-heavy operations on the side of the database management system (DBMS) increases data locality

- DBMSs benefit from decades’ worth of development and optimization

- Explores how databases can be involved in the operation of video game engines beyond their role in data storage

- Evaluates practicality of three video game engine components when implemented in SQL

- The third of these components is path-finding

- The path-finding algorithm investigated is A*.

- Storing spatial information about the game world in a DBMS allows for easy implementation of additional constraints to avoid collisions upon planning, preliminary reduction of the search space, preparatory analysis of the search space, and performing the path search close to the data

- The pgRouting extension, implemented in C++, is used as a baseline

- Two other custom implementations are also explored, both of which expect vertex-weighted graphs

- The map is assumed to already be in a relational representation, as is the ability of actors to pass over certain types of tiles

- Finding unilaterally connected components allows path-finding to be sped up in some cases

- An implementation of A* in pure SQL is included

- An implementation of Temporal A*, designed to avoid collisions, in SQL is also included

- An Iterative Path Finding implementation in SQL, which gradually finds paths for multiple agents, is also given

- Path-finding has to be completed in a timely manner, but players are willing to accept higher latencies for it compared to other actions

- Shorter paths were found faster using the native implementation of OpenRA

- As paths increased in length, the time needed to find them in the native implementation gradually increased, while the time needed to find them using pgRouting remained about the same

- 57% of path searches were found to be within the range of lengths that resulted in agreeable time boundaries

- The DBMS was found to offer means to find paths sufficiently fast

This dissertation is the main work which I intend to build on in my thesis. Specifically, I wish to further explore the advantages of implementing path-finding in a database management system, as covered in Chapter 4.
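As a rough picture of what "performing the path search close to the data" can look like, the sketch below runs a breadth-first reachability search entirely inside a SQL query using a recursive common table expression. It is not O’Grady’s A* implementation and does not use pgRouting or PostgreSQL: the `tile(x, y, passable)` table, the `shortest_hops` function, and the `max_hops` bound are all assumptions made for illustration, and Python’s built-in `sqlite3` module is used only so that the example runs without a database server.

```python
import sqlite3


def shortest_hops(conn, start, goal, max_hops):
    """Fewest 4-connected steps from start to goal over passable tiles.

    Returns None if the goal is not reachable within max_hops steps.
    """
    row = conn.execute(
        """
        WITH RECURSIVE reach(x, y, hops) AS (
            SELECT ?, ?, 0
            UNION
            SELECT t.x, t.y, r.hops + 1
            FROM reach AS r
            JOIN tile AS t
              ON abs(t.x - r.x) + abs(t.y - r.y) = 1
            WHERE t.passable = 1 AND r.hops < ?
        )
        SELECT MIN(hops) FROM reach WHERE x = ? AND y = ?
        """,
        (start[0], start[1], max_hops, goal[0], goal[1]),
    ).fetchone()
    return row[0]


# Hypothetical 3x3 map stored relationally, with a wall at (1, 0) and (1, 1).
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE tile (x INTEGER, y INTEGER, passable INTEGER, "
    "PRIMARY KEY (x, y))"
)
walls = {(1, 0), (1, 1)}
conn.executemany(
    "INSERT INTO tile VALUES (?, ?, ?)",
    [(x, y, 0 if (x, y) in walls else 1) for x in range(3) for y in range(3)],
)
hops = shortest_hops(conn, (0, 0), (2, 0), max_hops=9)
```

The `max_hops` bound is what guarantees termination here; an informed search such as A* would replace this uniform expansion with a priority order.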

Kapi, A. Y., Sunar, M. S., & Zamri, M. N. (2020). A review on informed search algorithms for video games pathfinding. International Journal, 9(3).

- Challenges are limited memory, time constraints, and path quality

- Most prominent algorithm is A*

- Popular graph representations are grid maps, waypoint graphs, and navigation meshes, each of which has advantages and disadvantages depending on the type of game

- NavMesh is often used in optimization to reduce memory consumption

- Heuristic functions are generally modified to reduce time usage

- Hybrid path-finding algorithms have been used for optimization

- Data structure used for implementation of an algorithm can also be changed for optimization

This paper provides an overview of various ways of optimizing informed search algorithms for path-finding in video games, presenting various factors which influence memory and time usage. It would be useful for considering potential consequences of different experiment designs, given its focus on approaches to improving the efficiency of path-finding which do not rely on the optimizations of a database management system.

CS 388 Initial Pitches

1)

I am interested in completing research related to the use of machine learning to generate decision trees which control non-player character behavior in video games. Decision trees are relatively interpretable and have a high-level correspondence to behavior trees, which are used in the most common approach to AI for video games (Świechowski, 2022). I also enjoyed working with them when I took Artificial Intelligence and Machine Learning. The environment I would propose using for testing is the Micro-Game Karting template in the Unity Asset Store, which is available for free and was used for testing by Mas’udi (2021).
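To illustrate why learned decision trees are considered interpretable, the sketch below learns a single split (a one-level tree) by minimizing weighted Gini impurity and then prints it as a readable rule. The features (`speed`, `distance_to_turn`), the actions, and the six training rows are invented for illustration; they are not taken from Mas’udi (2021) or from the Micro-Game Karting template.

```python
def gini(labels):
    """Gini impurity of a list of class labels."""
    n = len(labels)
    return 1.0 - sum((labels.count(c) / n) ** 2 for c in set(labels))


def best_split(rows, labels):
    """Return (feature_index, threshold) minimizing weighted Gini impurity."""
    best, best_score = None, float("inf")
    for f in range(len(rows[0])):
        for t in sorted({r[f] for r in rows}):
            left = [lab for r, lab in zip(rows, labels) if r[f] <= t]
            right = [lab for r, lab in zip(rows, labels) if r[f] > t]
            if not left or not right:
                continue  # a split must put samples on both sides
            score = (len(left) * gini(left) + len(right) * gini(right)) / len(labels)
            if score < best_score:
                best_score, best = score, (f, t)
    return best


# Hypothetical observations: (speed, distance_to_turn) -> action taken.
feature_names = ["speed", "distance_to_turn"]
rows = [(10, 50), (25, 40), (12, 8), (28, 5), (20, 30), (22, 6)]
labels = ["accelerate", "accelerate", "brake", "brake", "accelerate", "brake"]

feature, threshold = best_split(rows, labels)
print(f"if {feature_names[feature]} <= {threshold}: brake, else: accelerate")
```

A full tree learner would apply `best_split` recursively to each side, but even this single rule shows how the learned behavior can be read directly by a designer.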

References

Chan, M. T., Chan, C. W., & Gelowitz, C. (2015). Development of a Car Racing Simulator Game Using Artificial Intelligence Techniques. International Journal of Computer Games Technology, 2015.

Guo, X., Singh, S., Lee, H., Lewis, R. L., & Wang, X. (2014). Deep Learning for Real-Time Atari Game Play Using Offline Monte-Carlo Tree Search Planning. Advances in Neural Information Processing Systems, 27.

Mas’udi, N. A., Jonemaro, E. M. A., Akbar, M. A., & Afirianto, T. (2021). Development of Non-Player Character for 3D Kart Racing Game Using Decision Tree. Fountain of Informatics Journal, 6(2), 51-60.

Świechowski, M., & Ślęzak, D. (2022). Monte Carlo Tree Search as an Offline Training Data Generator for Decision-Tree Based Game Agents. BDR-D-22-00241. Available at SSRN: https://ssrn.com/abstract=4152772 or http://dx.doi.org/10.2139/ssrn.4152772

2)

I am considering completing research related to the use of databases in video games for purposes apart from data storage. While the article and dissertation by O’Grady (2019, 2021) which are referenced below both appear to focus on the feasibility of that approach, it seems likely that additional exploration is warranted regarding demonstrable advantages. For this exploration, I would propose focusing on the execution of path-finding through a database management system, one of the main areas examined by O’Grady (2021).

References

Muhammad, Y. (2011). Evaluation and Implementation of Distributed NoSQL Database for MMO Gaming Environment (Dissertation, Uppsala University).

O’Grady, D. (2021). Bringing Database Management Systems and Video Game Engines Together (Doctoral dissertation, Eberhard Karls Universität Tübingen).

O’Grady, D. (2019). Database-Supported Video Game Engines: Data-Driven Map Generation. BTW 2019.

Jovanovic, R. (2013). Database Driven Multi-agent Behaviour Module (Thesis, York University).

3)

I am also considering completing research related to the use of machine learning for recognition of Kuzushiji, an old style of Japanese cursive writing. Kuzushiji mainly appear in works from the Edo period of Japanese history and are difficult to identify correctly due to their lack of standardization (Ueki, 2020). Another difficulty comes from the Chirashigaki writing style, in which text is not written in straight columns (Lamb, 2020). The Center for Open Data in the Humanities has released a dataset of them (Ueki, 2020), which is available at http://codh.rois.ac.jp/char-shape/.

References

Clanuwat, T., Bober-Irizar, M., Kitamoto, A., Lamb, A., Yamamoto, K., & Ha, D. (2018). Deep Learning for Classical Japanese Literature. arXiv preprint arXiv:1812.01718.

Clanuwat, T., Lamb, A., & Kitamoto, A. (2019). KuroNet: Pre-Modern Japanese Kuzushiji Character Recognition with Deep Learning. arXiv preprint arXiv:1910.09433.

Lamb, A., Clanuwat, T., & Kitamoto, A. (2020). KuroNet: Regularized Residual U-Nets for End-to-End Kuzushiji Character Recognition. SN Computer Science, 1(3), 1-15.

Ueki, K., & Kojima, T. (2020). Feasibility Study of Deep Learning Based Japanese Cursive Character Recognition. IIEEJ Transactions on Image Electronics and Visual Computing, 8(1), 10-16.

3 Pitches

Machine Learning and Dueling:

A fighter’s skill comes from their training, their learned experience through failure, and thousands of reps. This is also exactly how reinforcement learning agents gain their skills. I propose a multi-agent learning experiment where we attempt to teach two agents how to fight with swords and shields.

There are several common issues that hinder how AIs learn in a 3D space. First, multi-agent learning (learning with two or more agents) is notoriously challenging, because each agent must learn not only how to move in general but also how to move around another agent. The MADDPG algorithm, introduced in 2017, proposes a solution to this problem, but it is still buggy and could present issues. Second, without proper motion capture data, the agents will learn incorrectly, most commonly seen in issues like body parts clipping through the ground. Third, while I have motion capture data for individual fighting movements, such as slashing a sword or blocking with a shield, it is much more difficult to find motion capture data of two humans sword fighting. Finally, swords and shields will be weighted, throwing off the model’s sense of balance, which will take lots of training to get used to.

Using Unity 3D machine learning agents, made possible through PyTorch, CUDA, and Unity’s ML-Agents toolkit, I propose creating a deep reinforcement learning agent. Our control strategy would have two parts: a pretraining stage, in which a deep learning model is trained to produce a neural network that solves a problem, and a transfer learning stage, which reuses the network built during pretraining to solve a problem.

Steps of pretraining:

- Feed the network a collection of motion capture data of sword fighting sourced from Adobe Mixamo to teach the basics, such as how to hold a sword and shield, how to walk with one, etc.

- This motion capture data is passed through an Imitation Policy Learning network, which tries to learn through imitation of the motion-captured movement.

- The goal from this point is to translate our Imitation Policy into a Competitive Learning Policy, which learns through the goal of winning. To do this, we must perform two steps:

- Task encoding, adding understanding to goals of movements

- Motor decoding, decoding motor function in a way the Competitive Learning Policy can understand

Steps of Transfer Learning:

- Turn the Motor Decoding and Task Encoding data into learning policies for both agents (Agent 1/Agent 2)

- Run these policies through the Competitive Learning Policy, which mimics the way humans learn skills, through trial and error against each other.

Social Simulation of Scarcity:

Scarcity: how do we divide resources fairly when there isn’t enough for everyone? The entire subject of Economics boils down to this question, and scarcity has caused many conflicts throughout history. Sometimes, we have to make tough decisions because of scarcity.