During the past couple of weeks, I made good progress on my project. I now have a functioning driver file that the user will run to train or validate a model, or to make predictions from their input data. This has allowed me to tie the different parts of my software together into one functioning project. I also have predictions working (a big step) and am currently working on the best way for the user to view their results.

Week of March 23

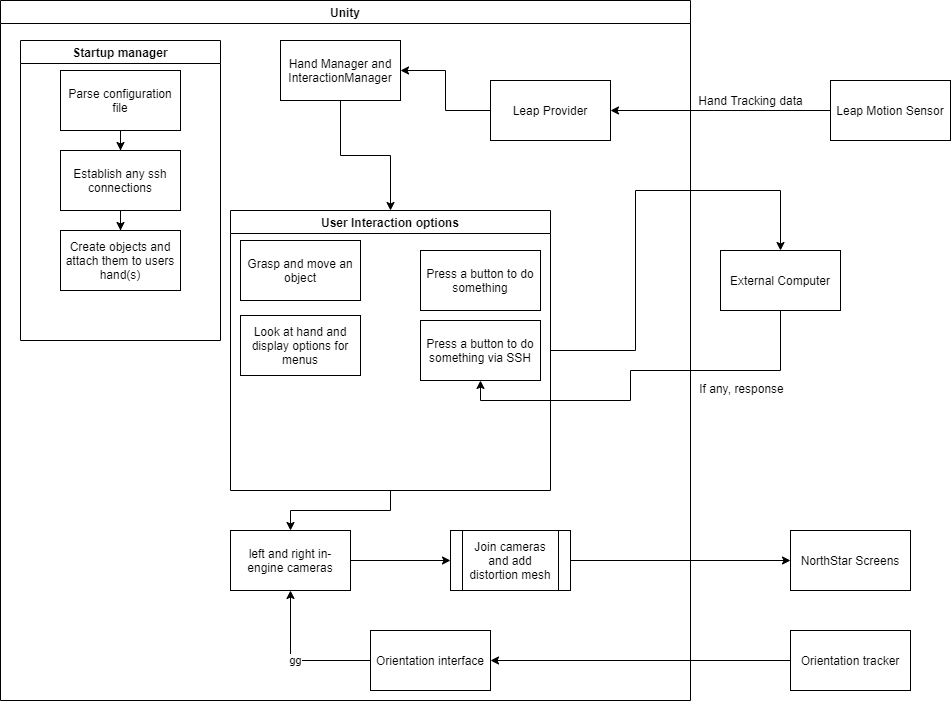

I’ve done some work on the configuration parser to enable the core of the project to work: allowing a user to generate a UI with functionality from a text file. So far, all I’ve been able to do at runtime is have the user select whether they are using VR mode or AR mode. On the backend, I’ve set up a lot of methods and variables, and I’ve done the first tests of SSH and Telnet integration (long story), which didn’t turn out like I wanted. Next on my list is to create the actual objects that the user defines and start hooking up the ability for those objects to do things.

CS488-Week10-Update

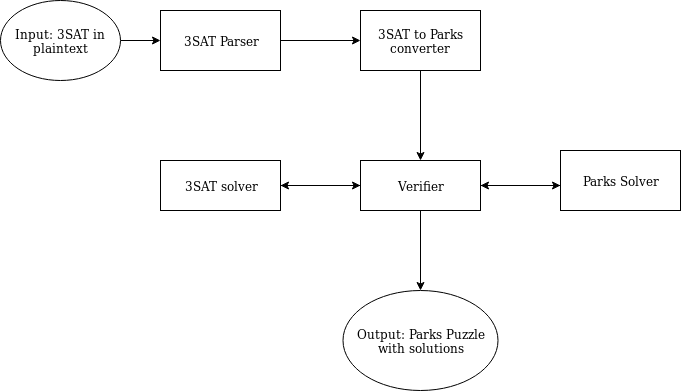

I spent this week finishing up a very detailed proof that Parks is NP-Complete. I also worked on improving the exposition of the ideas leading up to the main result in my paper, which really helped me with the software video.

CS 488 – Week 10

I did not get much done due to the college shutdown caused by the coronavirus. I am hoping to get in touch with my advisor and figure out the remaining schedule.

CS 488 – Week 9

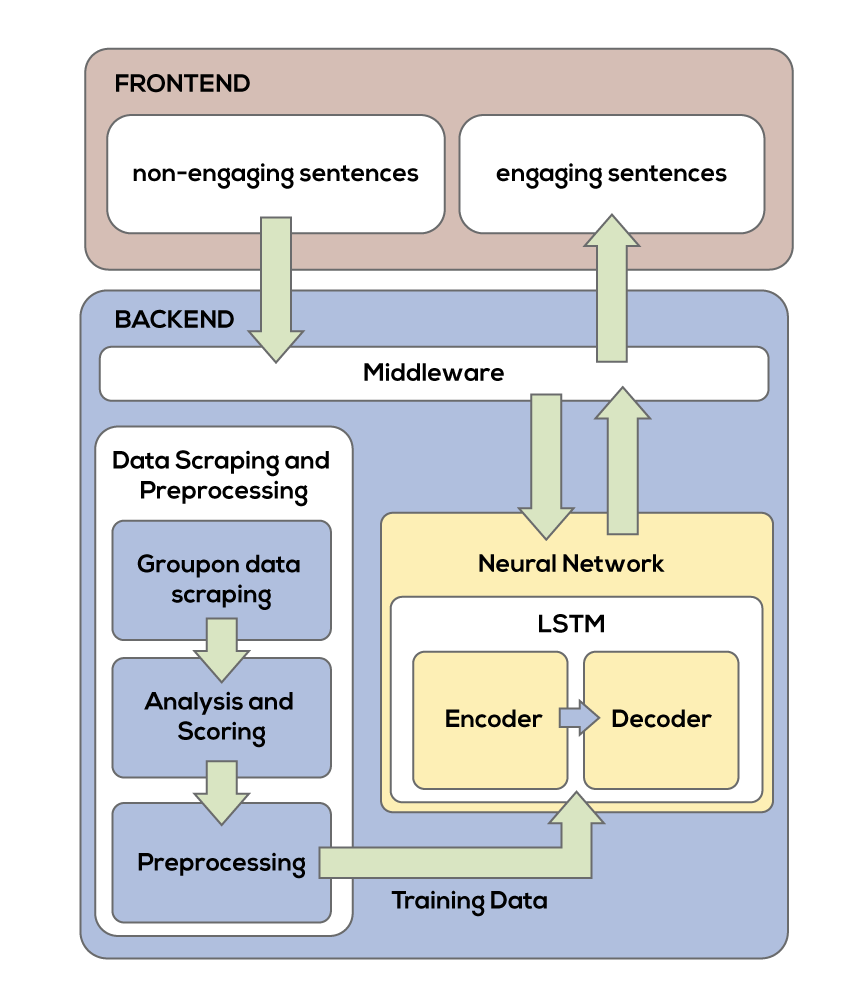

This past week I worked on my other model, which uses both engaging/readable and non-engaging/non-readable advertisements. I found that this model does not perform well because of the lack of parallel data and of shared sentence structure between the two kinds of sentences. Next week, I will work on refining my initial model and writing the frontend for the project.

Week of 23 March

I parallelized some of the Python code.

I also rewrote the color processing code in C++. The C++ code for changing each pixel individually is 600 times faster than the Python version.

I read up on how to make images of food look better and added more algorithms. I want to improve the existing algorithms and add more. They are currently not hooked up to the rest of the code.

Next, I need to link the C++ code to my Python code and find a way to generate meaningful variables to pass to the processing algorithms. I might rewrite and benchmark the cropping-and-retargeting code in C++ as well.

CS488-Week9-Update

I did not get to do much due to the various disruptions caused by the coronavirus. I am currently getting things in order and figuring out meeting times with my adviser, among other things.

CS 488 – Week 9

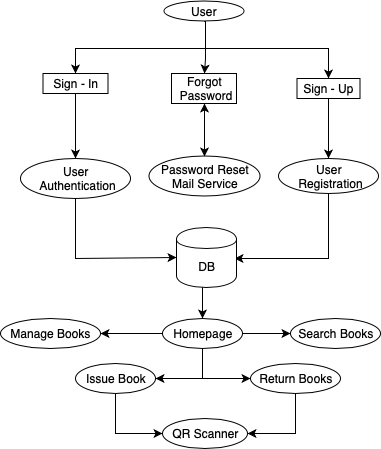

I am working on the logout procedure and on pulling up books from the database correctly when a QR code is scanned. The librarian and student profile pages need some design work, since users can view their profile page to see the history of books issued. I am planning to work on the second video in the coming week.

CS388 – Week 8 – Update

I read more papers and started thinking about the proposal idea.

CS 488 – Week 1 – Update

My advisor: Xunfei Jiang

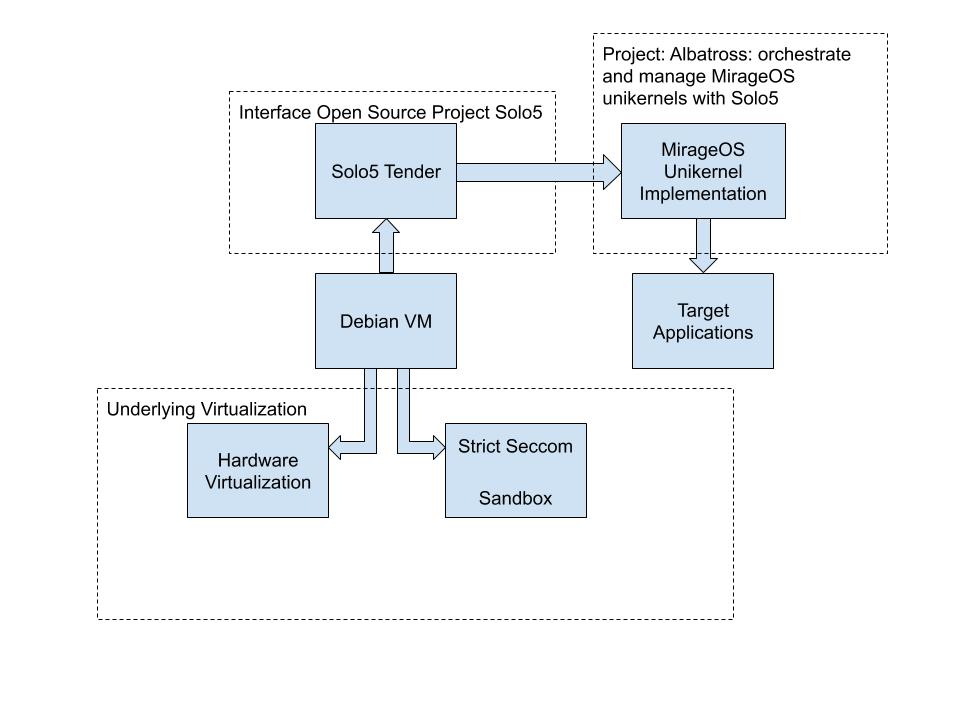

I set up a VM and am installing Solo5 on it.

CS488 – Week 8 – Updates

The feature extraction module is finished (ready to use), but I am still stuck on the modeling module… The model I am using is called VGGVox, which is available for Keras. I am stuck on input pre-processing. The error shows up in a BatchNormalization() layer, which normalizes the activations of the previous layer over each batch. But the issue is not in this layer itself; it is raised deep inside TensorFlow’s built-in functions… which I cannot modify. I am not sure exactly which step is wrong.
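For debugging, it may help to recall what a BatchNormalization layer computes during training. The sketch below is a simplified NumPy version written only for illustration: the helper name `batch_norm_forward` is hypothetical, and it skips the moving averages Keras keeps for inference. Comparing the shapes and dtypes of the arrays fed into the model against what such a layer expects is one way to narrow down the failing step.

```python
import numpy as np

def batch_norm_forward(x, gamma, beta, eps=1e-3):
    """Training-mode batch normalization (simplified, illustrative).

    x has shape (batch_size, num_features). Each feature column is shifted to
    zero mean and scaled to unit variance using statistics of this batch only,
    then rescaled by the learnable gamma and shifted by the learnable beta.
    eps is a small constant that keeps the division stable.
    """
    mean = x.mean(axis=0)                      # per-feature batch mean
    var = x.var(axis=0)                        # per-feature batch variance
    x_hat = (x - mean) / np.sqrt(var + eps)    # normalized activations
    return gamma * x_hat + beta

x = np.array([[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]])
out = batch_norm_forward(x, gamma=np.ones(2), beta=np.zeros(2))
print(out.shape, x.dtype)   # check shapes and dtypes at each step
```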

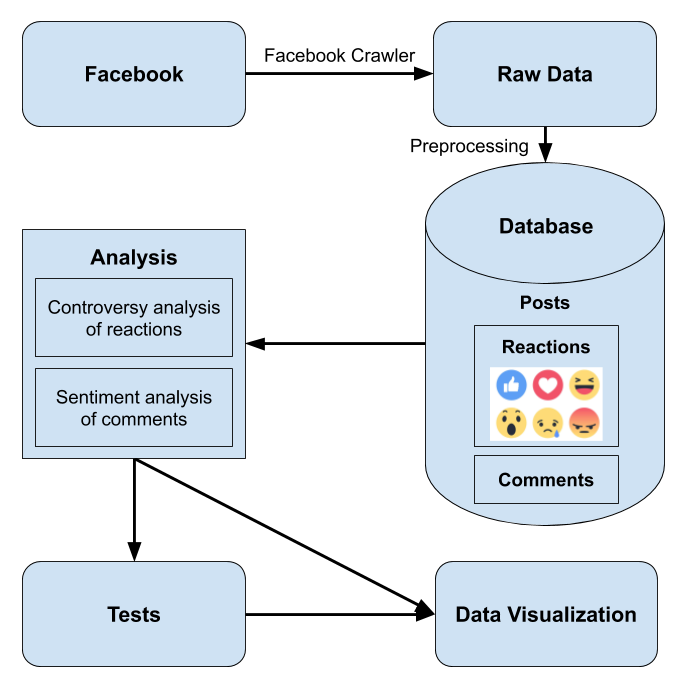

Project Description: Gender Bias Detection Using Facebook Reactions

Gender bias on Facebook might be measured by analyzing the difference in reactions on posts by women or men. My project is studying bias on Facebook pages of United States politicians using Facebook Reactions and post comments. Specifically, I am focusing on politicians running for US Senate in 2020. Data is being collected from the Facebook pages of the politicians using a crawler and will be stored in a database.

The data will be analyzed by performing sentiment analysis on the comments and by applying an entropy function to the reactions on each post. The comment analysis focuses both on whether a comment contains more negative or positive words and on whether it contains more personal or professional words. My hypothesis is that comments directed at female politicians may be both more negative and more focused on personal issues. I am using an entropy function on the reactions to each post to measure how divided the reactions are; related work used an entropy function on reactions to measure the controversy of a post. My hypothesis is that, in general, posts by female politicians will be more controversial than posts by male politicians.
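The post doesn't give the exact entropy formula from the related work, so the sketch below assumes plain Shannon entropy (in bits) over a post's reaction counts; the reaction names and the helper `reaction_entropy` are illustrative.

```python
import math

def reaction_entropy(counts):
    """Shannon entropy (bits) of a post's reaction distribution.

    counts maps a reaction type (e.g. "like", "angry") to how many times it
    was used. 0 means every reaction was the same type; higher values mean
    the reactions are spread more evenly across types, i.e. more divided.
    """
    total = sum(counts.values())
    if total == 0:
        return 0.0
    entropy = 0.0
    for n in counts.values():
        if n > 0:
            p = n / total
            entropy -= p * math.log2(p)   # each term adds -p*log2(p) >= 0
    return entropy

print(reaction_entropy({"like": 10}))
print(reaction_entropy({"like": 5, "angry": 5}))
```

With this definition, a post whose reactions are all “Like” scores 0, while a post split evenly between two reaction types scores 1 bit; the maximum grows with the number of reaction types in use (log2 of that number).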

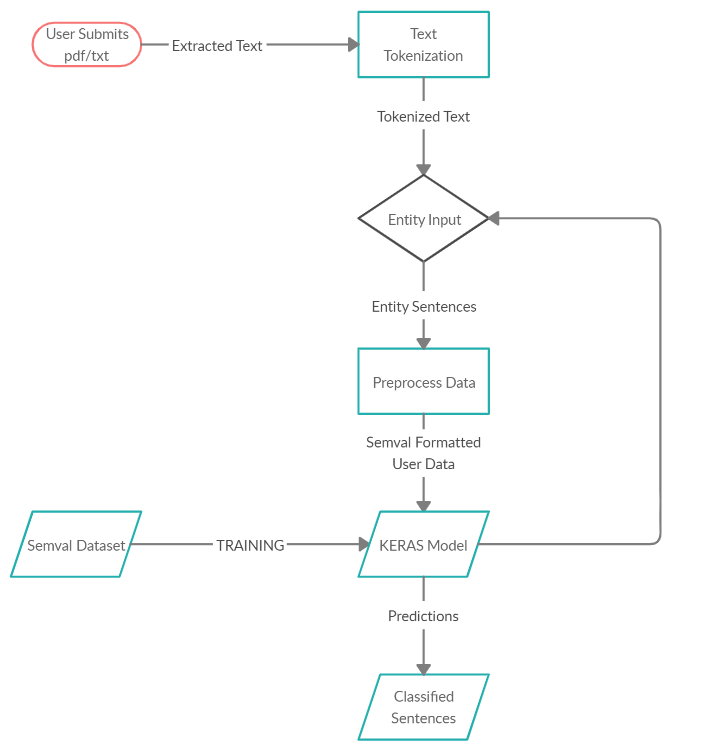

Software architecture diagram

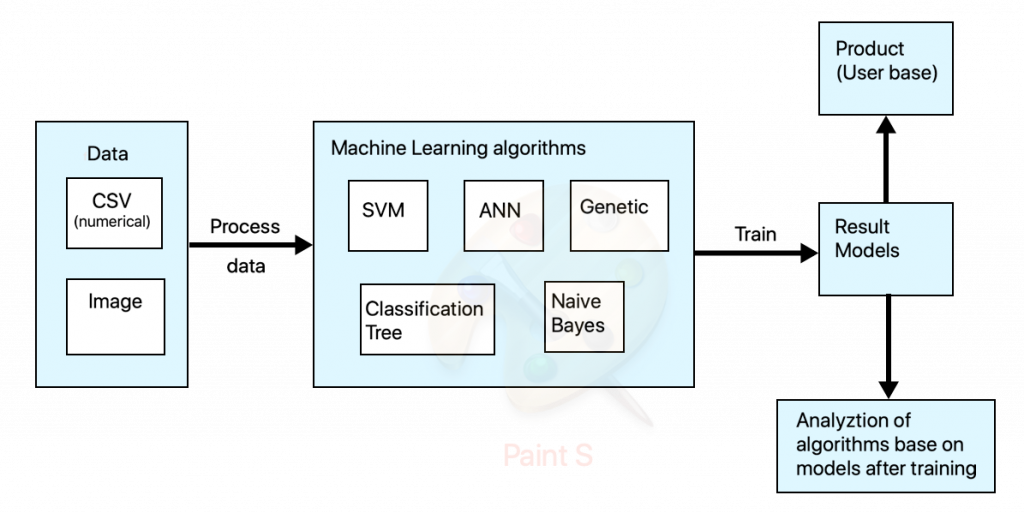

CS 488 – Succinct description

My project aims to develop models that predict the risk of having cancer based on both numerical data and image data. After all the models are trained, they will be analyzed to see which has the best accuracy and how the accuracy might be improved. We can then decide which model is best for predicting the risk of having cancer.

CS 488 – Update – Week 8

Since I uploaded the architecture design last week, this week I will go back to posting the normal updates here. I have been slowly working on the second model that I will compare my initial model to. I have not faced any obstacles yet except the learning curve that comes with Keras, but since Keras is well documented, it does not take me long to figure out whatever I am stuck on. In the upcoming week, I will keep working on the second model and plan to have it finished by the end of spring break.

CS 488 – Week 8

Week 7

I fixed the problem where all returned images looked the same. I started looking into how to parallelize the code and learning photography techniques to make images look better.

I have also made a more presentable diagram, which I will also use in my paper and poster.

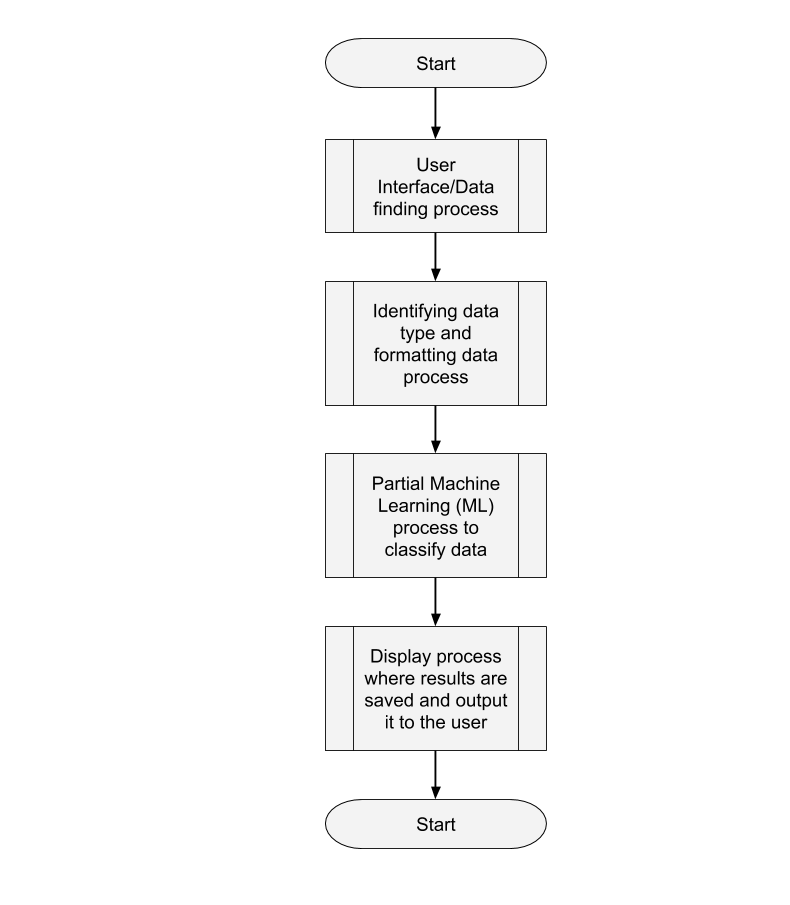

CS 488 – Software Architecture Design

The overall design of the project:

The design of the User Interface/Data Finding process:

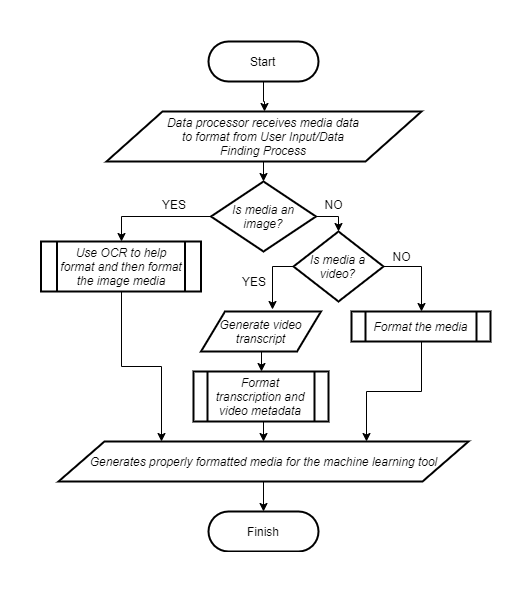

The design for the Data Formatting process:

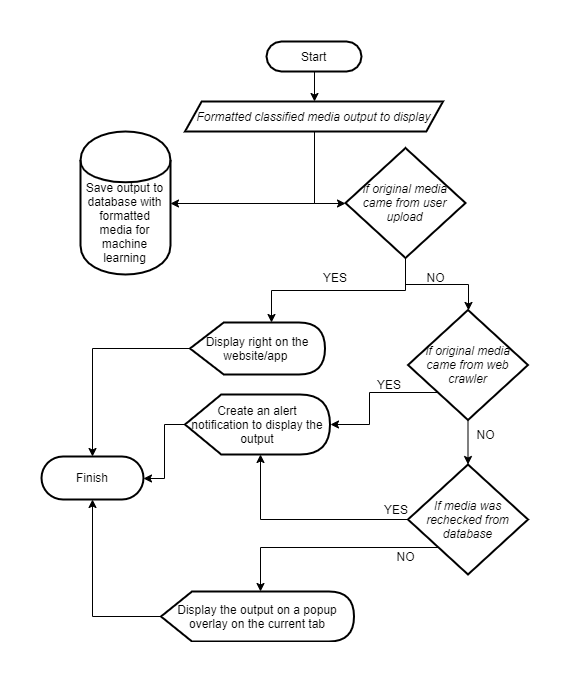

The design for the Displaying Results process:

The Partial Machine Learning process has yet to be defined, as it is a new development in my project. More research is needed into the best way to design it so that I can easily add future scripts to the work.

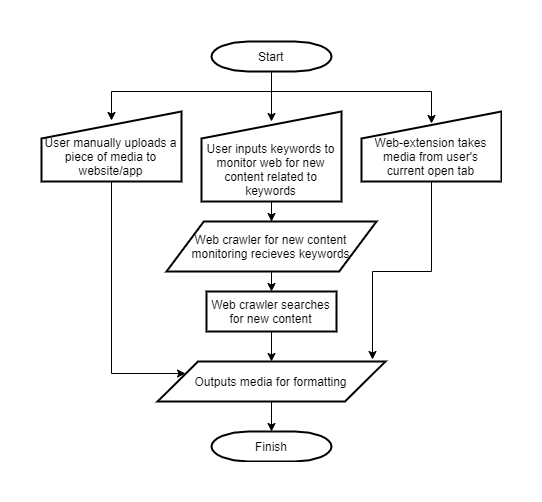

CS488 – Elevator Pitch

Information informs our entire lives. Information shapes public opinion which shapes things like public policy, elections, the health and safety of the public, and more. No one is above the harm that can come from misinformation, which is why we need to fight against its spread.

Fake news as an area of research is relatively new, so some of its aspects are not yet well researched, and new aspects to research keep appearing. One existing problem is that solutions to these aspects are developed in isolation, so no single solution can find all instances of fake news. Another is that most solutions do not have an accessible, comprehensive user platform for bringing them to the public.

The solution I will provide is a functional model of a user platform that demonstrates how an engaging and accessible one-stop shop for fake news detection can work. It allows the user to interact in many different ways that require different levels of effort, and it can scale to include many different automatic detection methods.

CS 488 – Week 8 Update

In the past week, I finished implementing non-content-based filtering, which recommends products based on the user’s skin type and desired beauty effects. I was able to apply TF-IDF to judge which ingredients are most strongly related to each beauty effect. Now that all my methods are working, I will add widgets to the Python notebook to create a simple interface so that I don’t need to change the input in the code each time. I will also start revising the paper and validating my method.
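One way TF-IDF could be applied here, sketched under assumptions: each beauty effect is treated as a “document” whose “terms” are the ingredients of the products tagged with that effect, so an ingredient that appears under every effect scores zero. The data layout and the helper names `ingredient_tfidf` and `top_ingredients` are hypothetical, not the project’s actual code.

```python
import math
from collections import Counter

def ingredient_tfidf(effect_to_ingredients):
    """Score how strongly each ingredient is tied to each beauty effect.

    effect_to_ingredients maps an effect name to the list of ingredients found
    in products tagged with that effect (duplicates allowed).
    """
    num_docs = len(effect_to_ingredients)
    # Document frequency: how many effects mention each ingredient at least once.
    df = Counter()
    for ingredients in effect_to_ingredients.values():
        df.update(set(ingredients))

    scores = {}
    for effect, ingredients in effect_to_ingredients.items():
        if not ingredients:
            scores[effect] = {}
            continue
        counts = Counter(ingredients)
        total = len(ingredients)
        # Term frequency times inverse document frequency.
        scores[effect] = {
            ing: (n / total) * math.log(num_docs / df[ing])
            for ing, n in counts.items()
        }
    return scores

def top_ingredients(scores, effect, k=3):
    """Ingredients with the highest TF-IDF score for one effect."""
    ranked = sorted(scores[effect].items(), key=lambda item: item[1], reverse=True)
    return [ing for ing, _ in ranked[:k]]

data = {
    "hydrating": ["glycerin", "hyaluronic acid", "water"],
    "brightening": ["vitamin c", "niacinamide", "water"],
}
scores = ingredient_tfidf(data)
print(top_ingredients(scores, "hydrating", k=2))
```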

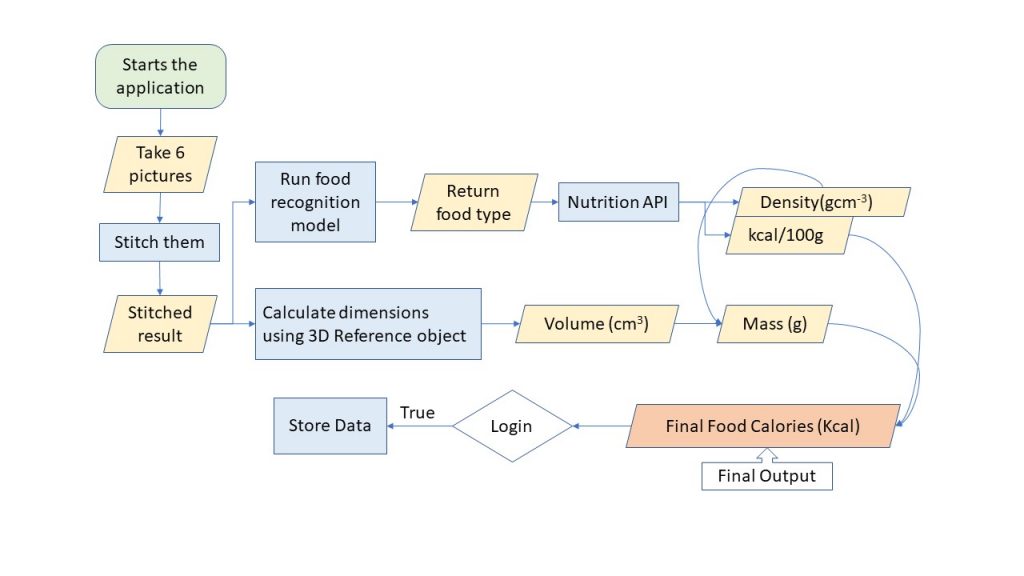

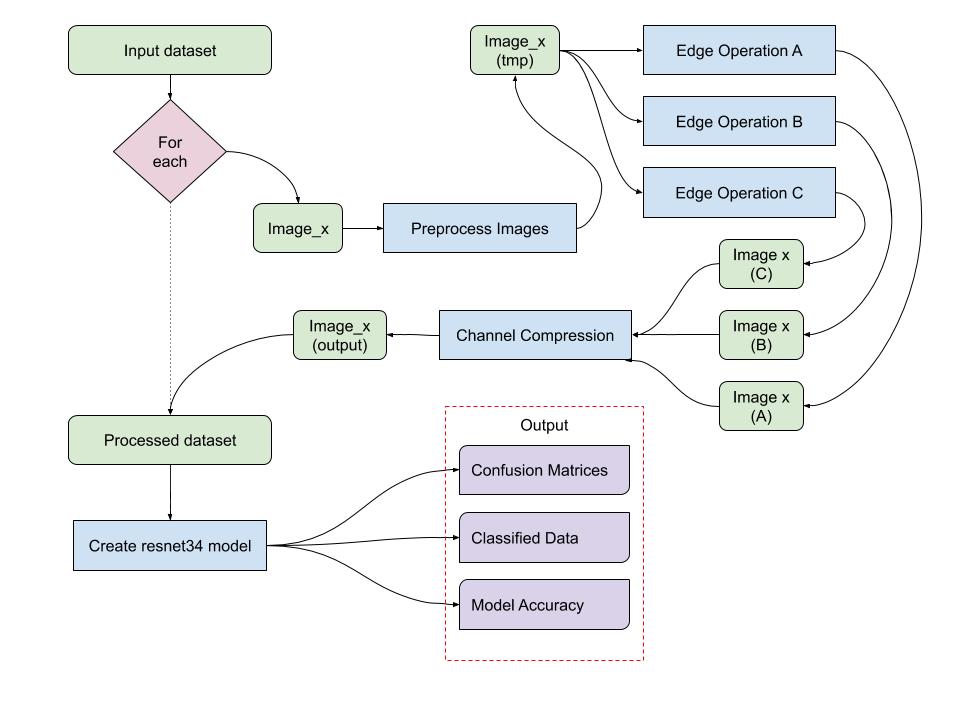

Software Pipeline

CS488 – Elevator Pitch

My project is about extracting features from images. Using low-cost collection techniques such as satellite imagery or drone surveys, a database of positive and negative cases can be created. Additional information will be extracted from each image in the database using a combination of modern algorithms and combined back into a single image as different colored layers of a JPEG image. These processed images, which are meant to carry as much information as possible, are used to train a machine learning model. Hypothetically, the additional information provided by the edge detection algorithms will enhance the accuracy and reliability of the machine learning model, reducing the need for expensive surveying equipment.
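The pitch doesn’t name the specific algorithms, so the sketch below assumes a simple gradient-magnitude edge map (computed with NumPy’s `np.gradient`, standing in for a detector such as Sobel or Canny) and an arbitrary 0.5 threshold. It shows one way derived layers could be packed into the color channels of a single image; `stack_feature_layers` is a hypothetical helper.

```python
import numpy as np

def stack_feature_layers(gray):
    """Combine a grayscale image with two derived layers into one 3-channel image.

    Channel 0: the original intensities.
    Channel 1: gradient magnitude (a simple edge-strength map).
    Channel 2: a binary edge mask from thresholding that magnitude.
    All channels are scaled to uint8 [0, 255] so the result can be saved as an image.
    """
    gray = gray.astype(np.float64)
    gy, gx = np.gradient(gray)            # derivatives along rows and columns
    magnitude = np.hypot(gx, gy)
    if magnitude.max() > 0:
        magnitude = magnitude / magnitude.max()
    edges = (magnitude > 0.5).astype(np.float64)
    gray_scaled = gray / gray.max() if gray.max() > 0 else gray
    layers = np.stack([gray_scaled, magnitude, edges], axis=-1)
    return (layers * 255).round().astype(np.uint8)

img = np.zeros((8, 8))
img[:, 4:] = 255                          # a vertical step edge
out = stack_feature_layers(img)
print(out.shape, out.dtype)
```

Because JPEG compression is lossy, thin edge layers may blur a little when saved; a lossless format such as PNG is one alternative if that turns out to matter.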

CS488-Week8-Update

I am currently working on a proof that non-contiguous kPARKS is NP-Complete. I am also mostly done with my paper.

CS488 – Software Architecture Diagram

CS488 – Week 8

This week I focused more on refining my idea and how it would flow for a user, which then helped me to create a flow diagram for this week. During this process, I realized some flows in my code were inefficient, so I changed the flow of information through certain functions to match up with my flow diagram.

I created a validation function to test a loaded model, and also an argument parser to make it easier to pass values for important variables into the code.
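A driver with train, validate, and predict modes maps naturally onto Python’s standard `argparse` module. The sketch below is hypothetical: the option names, the defaults (such as `model.h5`), and the placeholder `print` calls stand in for the project’s real training, validation, and prediction functions.

```python
import argparse

def build_parser():
    """Command-line interface for a hypothetical train/validate/predict driver."""
    parser = argparse.ArgumentParser(description="Train, validate, or predict with a model.")
    parser.add_argument("mode", choices=["train", "validate", "predict"],
                        help="what the driver should do")
    parser.add_argument("--data", required=True,
                        help="path to the input data")
    parser.add_argument("--model-path", default="model.h5",
                        help="where to save or load the model")
    parser.add_argument("--epochs", type=int, default=5,
                        help="number of training epochs (train mode only)")
    parser.add_argument("--batch-size", type=int, default=32,
                        help="mini-batch size")
    return parser

def main(argv=None):
    args = build_parser().parse_args(argv)
    # Placeholders: the real driver would call its train/validate/predict code here.
    if args.mode == "train":
        print(f"training on {args.data} for {args.epochs} epochs -> {args.model_path}")
    elif args.mode == "validate":
        print(f"validating {args.model_path} on {args.data}")
    else:
        print(f"predicting with {args.model_path} on {args.data}")
    return args

if __name__ == "__main__":
    main()
```

Saved as a script (for example a hypothetical `driver.py`), it would be run as `python driver.py train --data input.csv --epochs 10`; `argparse` rejects an unknown mode and prints usage help automatically.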

CS388 – Week 7 – Update

I have been writing the literature review for ten papers on the topic I chose, “Detect Chinese Text in Images”. I also sketched out the workflow for my project and discussed it with my advisor.

CS388 – Week 6 – Update

I read another six papers and wrote the second annotated bibliography for my three ideas. After reading the papers for my three ideas and researching possible dataset sources, I chose my first idea, “Detect Chinese Text in Images”, as my research topic.

CS488 – Week 7 – Updates

I developed the feature extraction module for my project, and it is working. It converts a voice input file (.wav) into a sequence of acoustic feature vectors. I tested it with my own voice: two recordings of my voice produce two very different sequences of vectors, but I don’t think we can tell much just by looking at these numbers, since they are simply values computed from the .wav file. I am still having bugs in my modeling module. I followed Charlie’s suggestion to learn TensorFlow from the basics: I built and trained a model on one of TensorFlow’s datasets, and it worked. But this is just a basic attempt, and I will keep looking into it.
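The post doesn’t say which acoustic features the module computes (a later update mentions MFCCs). As a simplified stand-in, the sketch below frames the signal and takes a log-power spectrum per frame; MFCCs would add a mel filterbank and a discrete cosine transform on top. The 25 ms frame and 10 ms hop are common choices assumed here, and `frame_features` is a hypothetical helper.

```python
import numpy as np

def frame_features(signal, sample_rate, frame_ms=25, hop_ms=10):
    """Turn a 1-D audio signal into a sequence of log-power spectrum vectors.

    The signal is cut into overlapping frames (25 ms long, starting every
    10 ms by default). Each frame is multiplied by a Hamming window, and the
    log of its power spectrum becomes one feature vector.
    Returns an array of shape (num_frames, n_fft // 2 + 1).
    """
    signal = np.asarray(signal, dtype=np.float64)
    frame_len = int(sample_rate * frame_ms / 1000)
    hop = int(sample_rate * hop_ms / 1000)
    if len(signal) < frame_len:
        raise ValueError("signal is shorter than one frame")
    n_fft = 1 << (frame_len - 1).bit_length()      # next power of two >= frame_len
    num_frames = 1 + (len(signal) - frame_len) // hop
    window = np.hamming(frame_len)
    frames = np.stack([signal[i * hop:i * hop + frame_len] * window
                       for i in range(num_frames)])
    power = np.abs(np.fft.rfft(frames, n=n_fft, axis=1)) ** 2 / n_fft
    return np.log(power + 1e-10)                   # small constant avoids log(0) on silence

sr = 16000
t = np.arange(sr) / sr
tone = np.sin(2 * np.pi * 1000 * t)                # one second of a 1 kHz tone
feats = frame_features(tone, sr)
print(feats.shape)
```

To try it on a real recording, a 16-bit mono .wav file can be read with Python’s standard `wave` module and converted with `np.frombuffer(data, dtype=np.int16)`.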

CS488 – Week 6 – Updates

Last week the CS system was down, so I couldn’t post my Week 6 update. I finally finished setting up the environment for my modeling module code. I am using the VGGVox models, which were created by the same authors as the dataset I am using. I almost gave up on this resource because it is written in MATLAB, which I have never used before, but then I found a Python resource explaining how to import the model. However, I am still getting errors when running it: it says that true_fn and false_fn have different data types. I traced the error to TensorFlow’s built-in files, which I cannot modify, but I don’t know at which step I am passing data incorrectly.

Elevator Pitch

My senior project is to develop a technology that provides higher performance and security for target applications. This technology is the unikernel, an optimized library operating system. A unikernel consists of the minimum set of components that a target application requires from a complete operating system. Unikernels are lightweight and provide stronger isolation than containers. I expect them to become a common runtime environment for applications in fields such as cloud computing and high-performance computing in the near future.

CS488 – Week 7 Updates

In the past week, I created a survey to collect inputs for content-based filtering, modified the skin type test questions, and obtained some responses. I also worked on implementing non-content-based filtering using TF-IDF, which I am struggling with. I will meet with Xunfei on Thursday and try to finish this part as soon as possible.

CS 488 – Elevator Pitch

My project aims to create a skincare product recommender system based on the user’s skin type and the ingredient composition of products. The main component of the project is content-based filtering, and the secondary component is non-content-based filtering. For content-based filtering, a user provides his or her skin type and selects a skincare product from sephora.com. The system then identifies the chemical components of products and uses cosine similarity to recommend products with similar ingredient compositions. Five recommendations for each product category are then returned to the user. Non-content-based filtering lets users skip choosing a product if they lack knowledge or have not yet found a product they like: a user provides his or her skin type and desired beauty effect to obtain the top 5 product recommendations across all 6 categories.
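A minimal sketch of the content-based step, assuming each product is represented as a dictionary of its ingredients (value 1 if present) and that products are grouped by category. Skin-type filtering is omitted, and the names `cosine_similarity` and `recommend` are illustrative rather than the project’s code.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two sparse ingredient vectors stored as dicts."""
    dot = sum(a[k] * b[k] for k in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def recommend(selected, catalog, top_k=5):
    """Return the top_k most similar products in each category.

    selected: ingredient vector of the product the user picked.
    catalog:  list of (name, category, ingredient_vector) tuples.
    """
    by_category = {}
    for name, category, vector in catalog:
        score = cosine_similarity(selected, vector)
        by_category.setdefault(category, []).append((score, name))
    return {
        category: [name for score, name in sorted(items, reverse=True)[:top_k]]
        for category, items in by_category.items()
    }

selected = {"water": 1, "glycerin": 1}
catalog = [
    ("A", "moisturizer", {"water": 1, "glycerin": 1}),
    ("B", "moisturizer", {"alcohol": 1}),
    ("C", "cleanser", {"water": 1}),
]
print(recommend(selected, catalog, top_k=1))
```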

CS488 – Elevator Pitch

Parks Puzzle is a popular puzzle game played on a square grid. A Parks Puzzle consists of an n×n grid divided into contiguous regions known as parks. The aim of the puzzle is to place trees within the parks so that every row, column, and park contains exactly one tree, and no two trees are on squares that border one another. My senior proposal is to show that the associated problem of deciding whether a given configuration of a Parks puzzle is consistent with a solution, dubbed PARKS, is NP-Complete. This result lets us meaningfully state that the Parks Puzzle in general is NP-Complete, and that it is highly unlikely that any polynomial-time algorithm exists for the problem. Since I have already found a proof, I am currently working on the kPARKS problem, the analogous problem of placing k trees in each row, column, and park.
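A proposed tree placement can be checked quickly, which is the easy half of an NP-completeness argument (membership in NP); the hard half is the reduction the proof provides. The sketch below is an illustrative checker, not the project’s program: the grid encoding and the name `is_valid_parks_solution` are assumptions, it covers kPARKS through the parameter `k`, and it assumes that “border” includes diagonal neighbours (drop the diagonal offsets if only edge-adjacency is meant).

```python
def is_valid_parks_solution(regions, trees, k=1, given=frozenset()):
    """Check a tree placement for an n x n Parks (or kPARKS) puzzle.

    regions: n x n grid (list of lists) giving the park id of each cell.
    trees:   collection of (row, col) cells that contain a tree.
    k:       trees required in every row, column, and park (k = 1 for Parks).
    given:   trees fixed by the puzzle configuration; a consistent placement
             must include all of them.
    Every step is polynomial in n.
    """
    n = len(regions)
    trees = set(trees)
    if not set(given) <= trees:
        return False
    row_counts = [0] * n
    col_counts = [0] * n
    park_counts = {regions[r][c]: 0 for r in range(n) for c in range(n)}
    for r, c in trees:
        if not (0 <= r < n and 0 <= c < n):
            return False
        row_counts[r] += 1
        col_counts[c] += 1
        park_counts[regions[r][c]] += 1
        # No two trees may touch; diagonal neighbours count here (assumption).
        for dr in (-1, 0, 1):
            for dc in (-1, 0, 1):
                if (dr, dc) != (0, 0) and (r + dr, c + dc) in trees:
                    return False
    return (all(count == k for count in row_counts)
            and all(count == k for count in col_counts)
            and all(count == k for count in park_counts.values()))

regions = [[0, 0, 1, 1],
           [0, 0, 1, 1],
           [2, 2, 3, 3],
           [2, 2, 3, 3]]
print(is_valid_parks_solution(regions, {(0, 1), (1, 3), (2, 0), (3, 2)}))
```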

CS488-Week7-Update

Now that I have a proof for the parks puzzle, I am spending time working on a more general puzzle that we’ve dubbed kPARKS, which is the analogous problem of placing k trees in every row, column and Park. I am also working on writing the proof and the results that build up to the proof in a clean and concise manner.

CS 488 – Week 7 Updates

This week, I worked on integrating pieces of code into an application. I completed the login and registration pages, backed by an SQL database. Since I usually work on Bowie and it is down, I decided to install all the dependencies on my local VM. It took quite some time to figure out the TensorFlow GPU and CPU installations. I also worked on creating a video demo of my project.

CS488 – Week 7

This week I began creating a model using the Keras Python library. I have been training it on the SemEval-2010 Task 8 dataset, reaching around 90% training accuracy after 5 epochs and 60-65% validation accuracy. I was able to successfully save and reload the model.

I will be working on increasing the accuracy of this model in the coming week before applying it outside of its dataset.

CS488 – Elevator Pitch

My project aims to see how applicable semantic relation extraction models are outside of their dataset. Semantic relations are how we draw knowledge and facts from a text. No two texts are the same, and when we do research we usually look for these relationships about certain subjects in the text that are important to us. I want to see whether an ordinary user can apply state-of-the-art semantic relation models outside of their dataset to reduce the time needed to find specific knowledge about any entity in an unstructured text.

CS 488 – Week 7 – Elevator Pitch

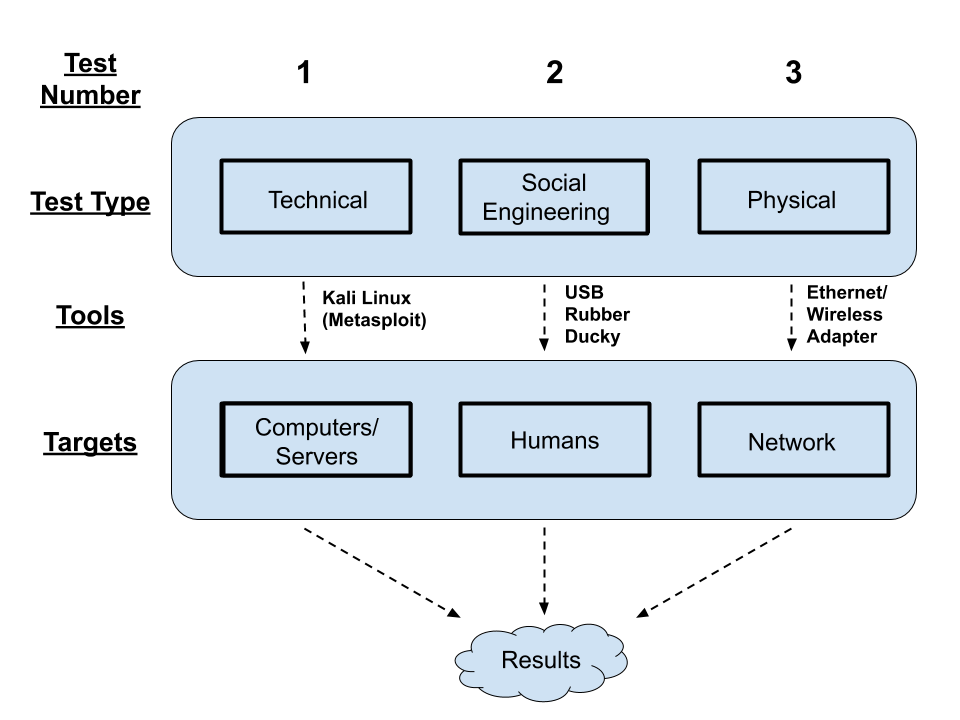

My project aims to develop a reproducible penetration test that can help secure a large network. Tests will come from three avenues: physical testing, technical testing, and social engineering. The results from these tests will be compiled into a final report and given to the people who can make changes as needed.

CS 488 – Week 6

— Elevator Pitch —

My project aims to use a sequence to sequence encoder-decoder model to make text-based advertisements more engaging and readable. This will help businesses get an edge over their competitors by attracting new customers as well as retaining their existing customers by making sure that their advertisements are readable and engaging to their target audience. This will be done through the analysis of pre-existing advertisements which will then be used to train the model on how to restructure sentences to make them more readable and engaging.

CS 488 – Week 7

My project is an application used in a library to issue and return books using QR codes. The primary use of this app is in college libraries. Using their personal smartphones, users can scan a book’s QR code and check it out, which reduces manual work and the average time spent in the library. Users can also search for any book in the library and quickly learn basic information about it.

The login data and book data are stored in a Firebase database. The librarian application involves 3-4 staff users who can manage the flow of books through the app and are notified when fraudulent activity takes place.

CS 488 – Update – Week 6

- This week I focused on getting part of the PolicialNews data set from Castello et al. to work with Weka, so that I can see whether I can recreate the results of their classification methods

- Downloaded a tool to combine Excel files into one sheet without data loss, then manually added headers and an extra column denoting which articles were fake and which were real

- But Weka still won’t load the data so that I can test it

- Next week I will focus on making smaller versions of the data set to see what features are the issue for Weka and testing features individually; I will also look into Keras as a machine learning tool and see what kind of testing can be done
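The merging and labeling steps above could also be scripted, which makes it easy to produce the smaller test versions as well. A minimal sketch using Python’s standard `csv` module, assuming each sheet has first been saved as a CSV file with a header row; the helper name, the label values “real”/“fake”, and the `columns` option are hypothetical.

```python
import csv

def merge_with_label(real_path, fake_path, out_path, columns=None, label_column="label"):
    """Combine a credible-news CSV and a fake-news CSV into one labeled CSV.

    Rows from real_path get the label "real" and rows from fake_path get "fake".
    columns: optional list of columns to keep, e.g. to build smaller versions
    of the data set and test features one at a time.
    Returns the number of data rows written.
    """
    fieldnames = None
    rows = []
    for path, label in ((real_path, "real"), (fake_path, "fake")):
        with open(path, newline="", encoding="utf-8") as f:
            reader = csv.DictReader(f)
            header = reader.fieldnames or []
            if fieldnames is None:
                fieldnames = list(columns) if columns else list(header)
            missing = [name for name in fieldnames if name not in header]
            if missing:
                raise ValueError(f"{path} is missing columns: {missing}")
            for row in reader:
                kept = {name: row[name] for name in fieldnames}
                kept[label_column] = label
                rows.append(kept)
    with open(out_path, "w", newline="", encoding="utf-8") as f:
        writer = csv.DictWriter(f, fieldnames=fieldnames + [label_column])
        writer.writeheader()
        writer.writerows(rows)
    return len(rows)
```

The resulting file has a single class column that Weka can be pointed at, and the `columns` argument gives a quick way to feed Weka one feature at a time.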

CS488 – Week 6

This week I was trying to run two different TensorFlow models with checkpoints; however, I could not get the checkpoints to work, which is key to my project. After a discussion with Dave, I have decided to implement a simple model myself using the Keras library, since it is more abstracted and well documented, so it shouldn’t take too much time. I will be aiming for a minimum of 50% accuracy with my model on the SemEval-2010 Task 8 dataset, which I think is the best dataset choice for this task.

Following this implementation, I then want to start testing my model outside its dataset.

Week 6

I did not manage to extract the foreground (the food) with zero user interaction, but I have had fairly good success isolating just the food with minimal user interaction. I will try it with a pure white background next.
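With a pure white background, a simple threshold may already isolate the food. The sketch below assumes an RGB image stored as a NumPy array and treats any pixel within a tolerance of white as background; the tolerance of 30 is an arbitrary starting value, and both helper names are hypothetical.

```python
import numpy as np

def foreground_mask(image, tolerance=30):
    """Mark pixels that are not (close to) pure white as foreground.

    image: H x W x 3 uint8 RGB array photographed on a white background.
    A pixel is background when every channel is within `tolerance` of 255.
    Returns a boolean H x W mask (True = foreground).
    """
    distance_from_white = 255 - image.astype(np.int16)   # int16 avoids uint8 wrap-around
    return (distance_from_white > tolerance).any(axis=-1)

def bounding_box(mask):
    """Smallest (top, left, bottom, right) box around the foreground, or None."""
    rows = np.flatnonzero(mask.any(axis=1))
    cols = np.flatnonzero(mask.any(axis=0))
    if rows.size == 0:
        return None
    return int(rows[0]), int(cols[0]), int(rows[-1]) + 1, int(cols[-1]) + 1

img = np.full((10, 10, 3), 255, dtype=np.uint8)
img[2:5, 3:7] = [200, 50, 50]            # a reddish "food" patch
mask = foreground_mask(img)
print(bounding_box(mask))
```

The bounding box could then feed the cropping and resizing steps directly.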

I can now successfully manipulate individual contour areas.

For next week, I will (finally) work on learning more about food photography.

I have most of the standard functionality working. Pretty much the only part I have not coded yet is the resizing.

CS488-Week6-Update

This week I worked on finalizing my proof for the IFF and OR gadgets, and rewriting the final proof for the paper draft. I am now planning on working on an explicit algorithm for the reduction for use in the program.

CS 488 – Week 6

This week I started writing the first draft of the paper. The models seemed to run properly when I tested them with the small data set; however, the accuracy was not what I wanted, since there was not enough data. I will try to run these models on an online cloud computing system while waiting for Layout to be available.

CS 488 – Week 6

Completed the paper outline. Working on the first draft as per the feedback received on the outline. Plan to complete the draft this week and present the first version of the project next week. I am still working on the QR code generation and scanning.

CS 488 – Week 5 – Updates

I wrote the outline of the senior project paper. It is similar to my proposal, but I changed my modeling method: I need to rewrite the modeling from GMM-UBM to Convolutional Neural Networks. But I am still having bugs with the CNN resource I got from GitHub. I am pretty sure I will use MFCCs for feature extraction. If I cannot find a proper CNN resource, I will probably need to switch to a DNN or something else, but I will try my best because I want to use a CNN.

CS488 Week 5

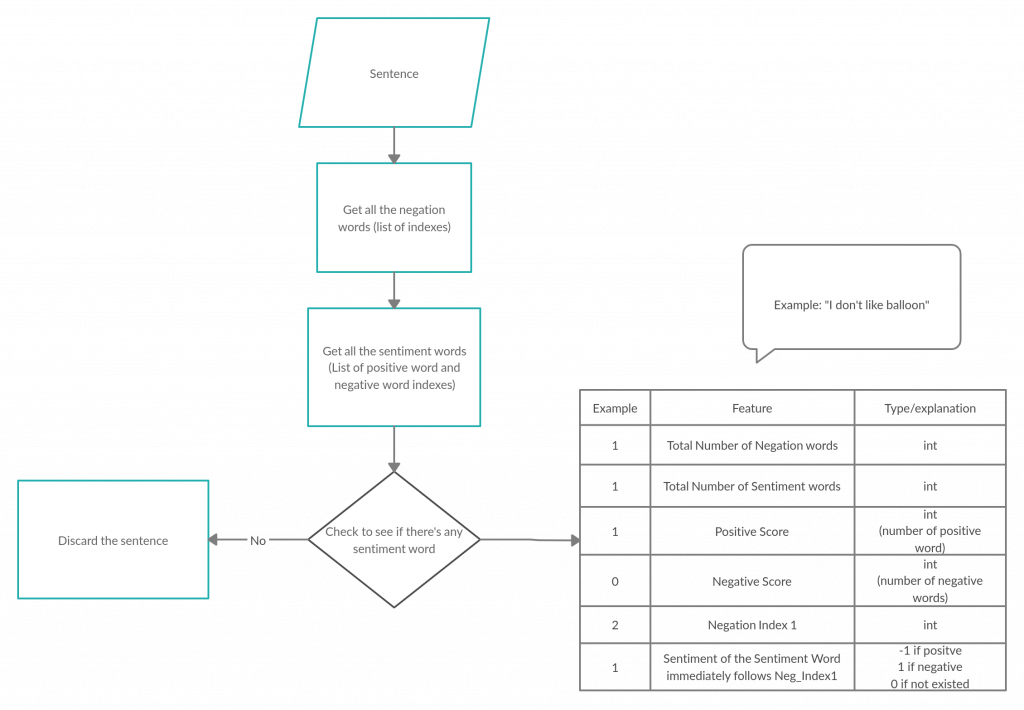

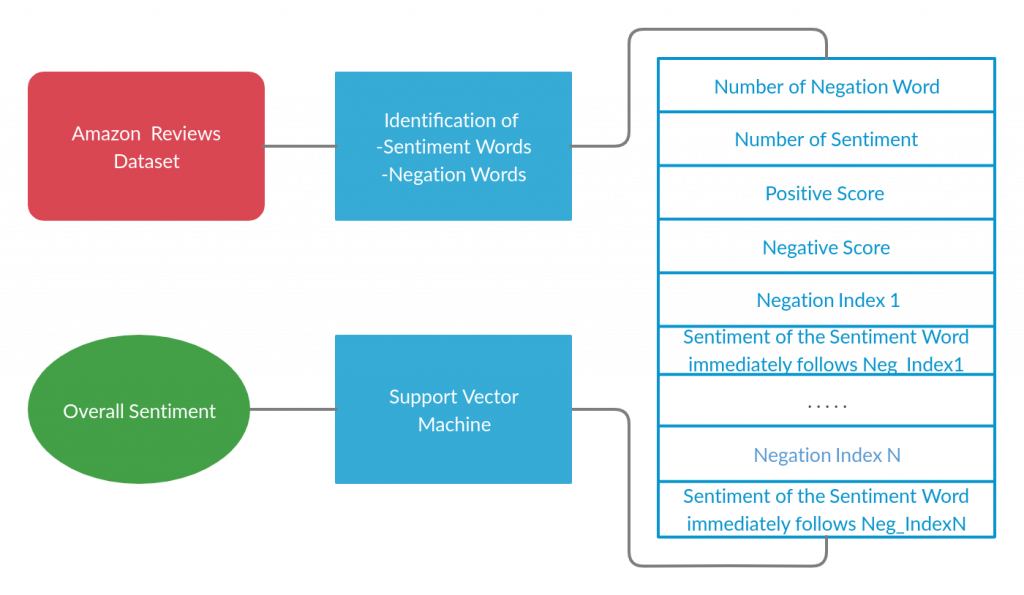

This week, I focused on writing the outline of the paper. I have also been using NLTK to extract features from the text reviews. I need to figure out how to handle reviews with multiple sentences, since my feature extraction currently works on only one sentence.
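One option is to split each review into sentences, run the existing per-sentence feature extraction on each, and combine the results. The sketch below uses a small regular-expression splitter (NLTK’s `sent_tokenize` would be the more robust choice, but it needs the Punkt model downloaded) and assumes that averaging is an acceptable way to combine sentence features; the helper names are hypothetical.

```python
import re

def split_sentences(text):
    """Very small sentence splitter: break after ., !, or ? followed by whitespace."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]

def review_features(review, sentence_features):
    """Apply a per-sentence feature function to every sentence and average the results.

    sentence_features(sentence) must return a dict of numeric features.
    """
    sentences = split_sentences(review)
    totals = {}
    for sentence in sentences:
        for name, value in sentence_features(sentence).items():
            totals[name] = totals.get(name, 0) + value
    return {name: value / len(sentences) for name, value in totals.items()}

# Example per-sentence feature: word count (stands in for the real NLTK features).
print(review_features("Great fit. Bad color!", lambda s: {"words": len(s.split())}))
```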

CS488 Week 3

For this week, I followed a tutorial from Educative about Natural Language Processing and used it for data preprocessing. I was able to load the dataset and am in the process of preprocessing it. I ran into sentences that do not express any emotion at all and therefore need to be eliminated. I also need to separate the word ‘not’ from contractions such as ‘don’t’ and ‘haven’t’ so that negation is indicated more clearly. I will try to do both in the following week.
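A small regular-expression sketch of the negation step, assuming reviews are plain English text; the table of irregular forms is illustrative rather than complete, and `expand_negations` is a hypothetical helper.

```python
import re

# Contractions whose stem changes; everything else follows "<word>n't" -> "<word> not".
IRREGULAR_NEGATIONS = {"won't": "will not", "can't": "can not", "shan't": "shall not"}

def expand_negations(text):
    """Rewrite n't contractions so that 'not' becomes its own token."""
    def replace(match):
        word = match.group(0)
        key = word.lower().replace("\u2019", "'")   # treat curly apostrophes like straight ones
        if key in IRREGULAR_NEGATIONS:
            return IRREGULAR_NEGATIONS[key]
        return word[:-3] + " not"                   # drop "n't" and append " not"
    return re.sub(r"\b\w+n['\u2019]t\b", replace, text, flags=re.IGNORECASE)

print(expand_negations("I don't like it, and I haven't worn it."))
```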

CS488 Week 2

For the past week, I skimmed through the Amazon Review datasets and chose Clothing, Shoes and Jewelry because its size is manageable. I then researched and learned how to use pandas to import the dataset from JSON into a DataFrame. In the following week, I will continue researching how to extract multiple features of interest from the review data to train our ML models with.

CS388 – Week 5 – Update

I read six papers for my three ideas. It was interesting that many research ideas have the same goal, but the ways they approach the problem are very different. For example, in the fall detection problem, some research applies deep learning to images and videos, while other work uses radio frequency signals instead of images. Another example is improving the performance of Optical Character Recognition for Chinese text. My initial thought about how to solve this problem was to apply image processing techniques to improve the image quality and then apply deep neural networks. However, one paper approached this problem from a different angle: it applies statistical natural language processing models such as N-gram models to improve the accuracy of OCR. These ideas might help me come up with an approach different from the one I was planning.

CS488 – week 5 – Update

In the last week, I have spent more time learning about using fastai with a convolutional neural network, specifically the resnet34 and resnet50 models, which I think will be ideal for my purposes. I have also been working with Jordan to get the modules for this set up on Lovelace.

I have also been scavenger hunting for more data. I have data from Iceland and a few confirmed spots on campus, which I can use for both positive and negative training cases, but more data is better for this kind of AI. My search has led me to reach out to Tom Hamm, Greg Vaughn, the Earlham Library, and the Geology department.

Over the next week, I will continue learning about fastai and start implementing my model to be trained over the data that I have already.

CS488-Week5-Update

The proof has been completed. This week I will work on writing it out rigorously, as well as designing the program.

CS 488 – Week 5

This week, I focused on the writing, including the outline for the capstone paper. I also started implementing some neural network models for the image data set, such as MobileNet and EfficientNet. I will try to test the models using a small sample of the training data while waiting for Layout to be available so I can train on the whole large data set.

CS 488 – Week 5

I finished writing my initial model and have trained it using a small sample dataset. The accuracy of my initial model is quite bad. I am currently researching how I can modify and improve the accuracy of my model as well as downloading a larger dataset to increase the accuracy. I also worked on the outline of the first draft of the final paper. Currently, the obstacle is the lack of accuracy of my model. This next week I will work on finishing up the first draft of the final paper as well as increasing the accuracy of my model.

CS 488 – Updates – Week 5

- I found a data set that would be the easiest to recreate results with

- I just need to merge the data set of credible news and the data set of non-credible news, with an added column denoting whether each article was real or fake, to be able to test

- However, I ran into a major hiccup because Weka crashed and I can no longer open it.

- I am documenting the errors and trying to reinstall and fix it, but for some reason I can’t delete some of the old folders, so I still have not gotten Weka to start up again

- My hypothesis is that one of the packages is failing the whole opening process because I don’t have R on this machine

- I am also afraid of force deleting the folder because I have no idea how it will affect Weka or my computer

- I did successfully complete my outline but I do not have enough information to fill out the Results section and anything regarding my exact methodology.

CS488 – Week 5 – Updates

In the past week, I found and fixed a bug in the cosine similarity calculation in the code I was referencing. As a result, I was able to obtain more accurate recommendations across different product types. I also planned next week’s tasks.

Week 5

I am trying to successfully detect the foreground object in the image, which will be food. While the initial idea was to use AI to detect the food in the image, this is not a completely solved problem yet. Since I can choose my own input, detecting the foreground object makes more sense. Charlie recommended a program called ImageMagick, and I will look at that.

For next week, I want to finish the foreground, and start resizing the images, and passing them to the ranking AI.

CS 488 – Week 5 – Update

This week I spoke with Charlie and gained clarity on how Earlham handles guest connections: the ECOpen network does not allow access to Earlham’s internal network and only gives access to the outside internet. I also started my social engineering test on Thursday. I ran into some speed bumps with it and am currently resolving them. For this next week, I plan to talk to Aaron in ITS about Nmap and how it could be used to check for any ports that are left open and could be vulnerable. I plan to meet with him sometime early next week.

CS488 – Week 5

This week I first worked on creating an outline for my final paper, which was useful as it sharpened my current understanding of my project and where it is headed. I also was working with a new model and was able to successfully train it, save checkpoints and load them. I also created basic pre-processing functions for my data to match the format of input sets.

Loading the weights did not seem to work with this model. When I reached a checkpoint with 80%+ accuracy and saved the weights, I then loaded the weights and fed in test data from the dataset, but accuracy dropped to 5%. This was extremely confusing, and understanding it is my priority this week; otherwise I will have to find another model.

CS 488 – Week 5

User login and authentication are complete. New users can sign up and save their details in the database. A sample set of 20 books will be used as test data. I am currently figuring out QR code scanning and generating new QR codes. By next week, scanning should work, and books can be scanned and retrieved from the database.

CS 488 – Week 4 – Updates

TensorFlow is working fine on Lovelace now, but I just found that the demo uses TensorFlow 1 while the version installed on Lovelace is TF 2. The demo has a lot of code, and I am not sure whether I should update all of it to TF 2 or just find another resource. I talked to Xunfei, and she told me to briefly try other resources, because updating that demo is not a small job.

CS488 – Week 3 – Update

I am still having issues running the demo code from GitHub. I requested installation of TensorFlow for Python 3 on Lovelace, but there still seem to be errors. It is probably an environment setup issue. I will communicate with the admins.

CS388 – Week 4 – Update

I am reading papers for my first idea, “Detect and Translate Chinese text in images”. One paper I read was about improving the performance of Optical Character Recognition for Chinese books in precarious condition. Instead of trying to enhance the image quality, the authors apply N-gram, long short-term memory, and backward and forward N-gram statistical text models to develop a more accurate OCR model.

CS488 – Week 4

This week I did a lot of research and work on the more anthropological side of my project. I emailed Tom Hamm and Greg Vaughn and got some great information about where I could find the foundations of old buildings around campus that I could use for my project. This information will hopefully be detailed enough for me to create some labeled training images.

I also spent some time this week learning fast.ai, which I have settled on for now as the best option for identifying images. The library is extensively documented, and extremely robust. As soon as Layout or a similar machine is back up, I will be able to start testing code, but for now, learning the library is just as important.

CS 488 – Week 4

Last week I worked on collecting and preprocessing the data using the Groupon API. I also started learning about and implementing my autoencoder model. So far the obstacle has been the learning curve, but I have been reading extensively about neural networks and Keras and should be able to continue working on the project without any hiccups. Next week I plan to start my first draft of the paper and have a somewhat working version of the autoencoder model.

CS 488 – Week 4

This week, I started preprocessing my image data. The steps include resizing, cropping, normalizing, and finally converting the images to tensors so that they can be fed into a neural network. For the numerical data set, I started looking into algorithms that are not as computationally expensive as neural networks, such as k-nearest neighbors and support vector machines. That way, I can test them while the Layout server is still unavailable.
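The four preprocessing steps can be sketched in NumPy as follows. This is an illustration under assumptions: nearest-neighbour resizing stands in for proper interpolation, the 256/224 sizes and the ImageNet channel means and standard deviations are conventional defaults for pretrained CNNs, and the channels-first output matches PyTorch-style tensors (Keras models usually expect channels-last). `preprocess` is a hypothetical helper.

```python
import numpy as np

def preprocess(image, size=256, crop=224,
               mean=(0.485, 0.456, 0.406), std=(0.229, 0.224, 0.225)):
    """Resize, center-crop, normalize, and reorder an RGB image for a CNN.

    image: H x W x 3 uint8 array. Returns a float32 array of shape (3, crop, crop).
    """
    h, w, _ = image.shape
    # 1. Nearest-neighbour resize to size x size.
    rows = (np.arange(size) * h / size).astype(int)
    cols = (np.arange(size) * w / size).astype(int)
    resized = image[rows][:, cols]
    # 2. Center crop.
    top = (size - crop) // 2
    cropped = resized[top:top + crop, top:top + crop]
    # 3. Scale to [0, 1], then standardize each channel.
    scaled = cropped.astype(np.float32) / 255.0
    normalized = (scaled - np.array(mean, np.float32)) / np.array(std, np.float32)
    # 4. Channels-first layout (C, H, W).
    return normalized.transpose(2, 0, 1)

img = np.full((300, 400, 3), 255, dtype=np.uint8)
out = preprocess(img)
print(out.shape, out.dtype)
```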

Week 4

I finished the ranking module. It takes a folder of images, converts them to arrays, and passes the n best images to another function, which processes the images further and then picks the best n again to be processed. There is no processing yet.

For the processing, I have started working on genetic pixel changing. I am reading the pixels into an array and changing each individual pixel. While it is (sort of) a genetic algorithm, I want the changes to be a little more intentional.
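The rank, process, and re-rank loop from the ranking module, combined with pixel changes, resembles a simple elitist evolutionary loop. In the sketch below, random per-pixel noise is an assumed mutation (the “more intentional” changes would replace it), `score` is a placeholder for the ranking AI, and `evolve_images` is a hypothetical helper.

```python
import numpy as np

def evolve_images(images, score, n_best=4, generations=10, step=10, seed=0):
    """Keep the n best images, mutate them, and repeat.

    images: list of H x W x 3 uint8 arrays.
    score:  function mapping an image to a number (higher is better).
    Each generation, every surviving image gets one randomly perturbed copy,
    and only the n_best of parents plus children survive.
    """
    rng = np.random.default_rng(seed)
    population = sorted(images, key=score, reverse=True)[:n_best]
    for _ in range(generations):
        children = []
        for img in population:
            noise = rng.integers(-step, step + 1, size=img.shape)   # per-pixel change
            child = np.clip(img.astype(np.int16) + noise, 0, 255).astype(np.uint8)
            children.append(child)
        population = sorted(population + children, key=score, reverse=True)[:n_best]
    return population

score = lambda im: float(im.mean())          # placeholder: brighter is "better"
images = [np.full((4, 4, 3), v, dtype=np.uint8) for v in (10, 50, 100, 200)]
best = evolve_images(images, score, n_best=2, generations=5)
print([score(im) for im in best])
```

Because parents compete with their own children, the best score never decreases from one generation to the next.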

CS488-Week4-Update

This week I took a short break from working on the proof to start working on the app. I am currently trying to figure out whether it is worth designing the Parks app as a web app, while also starting work on some of the basic modules.

CS 488 – Updates – Week 4

- I spent this week analyzing my data sets to see whether they need any outside resources before they can be tested using Weka.

- I found that some required me to register my app with Facebook Developers and Disqus, and some were not actually in proper .csv format, so Weka (the tool that I am using to test classification methods) could not read them.

- This means that I have a much smaller pool of articles that I am able to replicate.

- I have found 27 different data sets, but I haven’t read all the papers that use them, and some of the papers that mention the data sets only explain how the data sets were created, not how to use them in this context.

- Because of these setbacks, I am looking for smaller sample data to test Weka with, so that I can make sure Weka is working. For the moment, I am focusing on recreating the results from Castello et al.’s work.

- Castello et al.’s data format is different from what I have used with Weka before, and I need to do more digging to see whether I have to combine the fake news data set with the credible news data set for each year before sending it through Weka, or whether I can just open both within Weka and tell it how to find what it needs.
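If the data does need to be combined first, one possible approach is to merge the fake and credible CSV files into a single file with an added class column. Weka's Explorer typically treats the last attribute as the class by default. The sketch assumes both files share the same header row. The column names in the demo and the `merge_with_label` helper are hypothetical and do not reflect Castello et al.'s actual format.

```python
import csv
import io
from typing import Iterable, TextIO, Tuple


def merge_with_label(sources: Iterable[Tuple[TextIO, str]], out: TextIO,
                     label_column: str = "class") -> int:
    """Concatenate CSV files with a shared header, appending a label column.

    `sources` yields (open_file, label) pairs; returns the number of rows written.
    """
    writer = None
    count = 0
    for handle, label in sources:
        reader = csv.DictReader(handle)
        if writer is None:
            # The label goes last, where Weka usually looks for the class.
            fields = list(reader.fieldnames) + [label_column]
            writer = csv.DictWriter(out, fieldnames=fields)
            writer.writeheader()
        for row in reader:
            row[label_column] = label
            writer.writerow(row)  # raises ValueError if a row has extra columns
            count += 1
    return count


if __name__ == "__main__":
    fake = io.StringIO("title,text\nA,x\n")
    credible = io.StringIO("title,text\nB,y\n")
    merged = io.StringIO()
    merge_with_label([(fake, "fake"), (credible, "credible")], merged)
    print(merged.getvalue())
```

With real files, open them with `newline=""`, as the `csv` module documentation recommends.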

CS 488 – Week 4

In the past week, I worked on generating five recommendations from each of the six product categories. I am still confused about the cosine similarity formula, so I’m planning to meet with other faculty members next week while I keep working on the next task. Other than that, there weren’t any obstacles, and I just need to make the function return its results in a clean format.
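Here is a minimal sketch of the "five per category" step. It assumes each item is stored as a category plus a sparse ingredient-to-weight dictionary, and that similarity is the standard cosine formula (the question about which variant to use is left open). Category names, items, and the `recommend_by_category` helper are hypothetical. The `exclude` parameter lets the inputted item be skipped so it is not recommended to itself.

```python
import heapq
import math
from collections import defaultdict
from typing import Dict, List, Optional, Tuple

Vector = Dict[str, float]  # ingredient name -> amount or weight


def cosine(a: Vector, b: Vector) -> float:
    """Standard cosine similarity between two sparse ingredient vectors."""
    dot = sum(w * b.get(name, 0.0) for name, w in a.items())
    norm_a = math.sqrt(sum(w * w for w in a.values()))
    norm_b = math.sqrt(sum(w * w for w in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def recommend_by_category(query: Vector, items: Dict[str, Tuple[str, Vector]],
                          k: int = 5,
                          exclude: Optional[str] = None) -> Dict[str, List[str]]:
    """Return up to k most similar item names per category, most similar first."""
    scored = defaultdict(list)
    for name, (category, vector) in items.items():
        if name != exclude:
            scored[category].append((cosine(query, vector), name))
    return {cat: [name for _, name in heapq.nlargest(k, pairs)]
            for cat, pairs in scored.items()}


if __name__ == "__main__":
    items = {
        "A": ("cleanser", {"water": 0.6, "glycerin": 0.4}),
        "B": ("cleanser", {"alcohol": 1.0}),
        "C": ("serum", {"water": 1.0}),
    }
    print(recommend_by_category({"water": 0.7, "glycerin": 0.3}, items))
    # {'cleanser': ['A', 'B'], 'serum': ['C']}
```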

CS488 – Week 4

This week I made efforts to get predictions from the model I trained last week. However, after spending some hours understanding the code, I realized that this model is not meant for practical use but rather for theoretical predictions, as each query set requires a support set.

Following this setback, I have found some models that train on a smaller dataset than FewRel. I believe these models can be used practically on arbitrary query sets. Since they need less training time, I should be able to verify which one is best for my project this week.
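The support-set requirement mentioned above follows from how many few-shot models classify. A query is compared against a handful of labeled examples for each candidate relation, so without those examples there is nothing to compare it to. The sketch below shows that idea as nearest-prototype classification in the style of prototypical networks, one common few-shot approach. It is an illustration, not the model from the post. The embeddings and relation labels are hypothetical and would normally come from the trained encoder.

```python
import math
from typing import Dict, List, Sequence

Embedding = Sequence[float]


def mean_vector(vectors: List[Embedding]) -> List[float]:
    """Component-wise mean of equal-length vectors (the class prototype)."""
    return [sum(column) / len(vectors) for column in zip(*vectors)]


def classify_with_support(query: Embedding,
                          support: Dict[str, List[Embedding]]) -> str:
    """Label the query with the relation whose prototype is nearest.

    `support` maps each candidate relation to a few embedded examples; an
    empty support set leaves no prototypes, so no prediction is possible.
    """
    if not support:
        raise ValueError("a support set is required to classify a query")
    prototypes = {label: mean_vector(ex) for label, ex in support.items()}
    return min(prototypes, key=lambda label: math.dist(query, prototypes[label]))


if __name__ == "__main__":
    support = {
        "founded_by": [[1.0, 0.0], [0.9, 0.1]],
        "located_in": [[0.0, 1.0], [0.1, 0.9]],
    }
    print(classify_with_support([0.8, 0.2], support))  # founded_by
```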

CS 488 – Week 4 – Update

This week, I continued testing the physical aspect of my project. During this testing, I focused on ECOpen, since it is listed as having no encryption. As it turns out, there is still an authentication process that one must go through when connecting to ECOpen, so when I ran a packet sniffer on a device on an ECOpen channel, I could not see any data. (This is a good thing and was noted.) I also finished preparing my social engineering test, which will begin tomorrow, February 12th. Next week will consist of my social engineering test, processing the results from the physical test, and working on my paper.

CS 488 – Week 4

Working on the first draft of the paper.

Week 3

I have started working on passing images to the ranking algorithm.

I have also found some online food-photography courses that I want to look at. What I learn there should help me figure out how to improve my images.

Update up to 2/5/2020

This past month, I have mostly been working on getting everything for my project running and fighting some major issues. The first issue, which is almost solved, is that the NorthStar uses DisplayPort, while my computer only has HDMI and Mini DisplayPort ports. It turns out HDMI outputs are not compatible with DisplayPort, so the adapter I got for this does not appear to work. I am looking into getting the proper connection by the end of this week.

The other issue I had to fight was that, for a week and a half, I did not have my primary computer because it was broken and needed to be repaired. I had a much less capable backup computer that I used to test the adapter issue above, so I was not entirely unproductive during that time.

This coming week and weekend, I am hoping to have a fully functioning environment running and to be able to display tracked hands in the headset, depending on the availability of an adapter.

CS 488 – Week 3 – Updates

Last weekend, I spent time with a small group of friends filling out a spreadsheet of information on 2020 Senate candidates. So far, 154 of the 348 filed candidates have been added to the sheet. During that time, we discovered that a few candidates run their campaigns from a public Facebook profile instead of a Facebook page. When I talked with Charlie, he guessed that collecting profile data through the API shouldn’t be too different from collecting page data. Therefore, I am planning to collect this data as well, while noting which candidates use profiles in case their results are drastically different from the overall results. Next, I plan to develop scripts to start collecting and analyzing small amounts of data, and to scale and automate them later.

cs488 – Week 3

This week was a big planning week for me. I spent a lot of time writing down notes and ideas, as well as researching the details of what I need for my project. I also spent some time gathering resources for my project in the form of data from Iceland. A combination of 2018 and 2019 data will give me a much-needed training/testing set.

I have progressed in my implementation, further streamlining the process of creating various edge detections of original images. This week I added the Prewitt edge detection algorithm and improved my Canny edge implementation to have tight, wide, and auto modes.
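For reference, Prewitt edge detection fits in a few lines of plain Python. Two 3x3 kernels estimate horizontal and vertical intensity differences, and their combined magnitude highlights edges. The `auto_canny_thresholds` helper illustrates one common convention for an "auto" mode: it sets Canny's low and high thresholds a fraction below and above the image's median intensity. That helper is an assumption about how such a mode could work, not necessarily how this implementation does it. Images are assumed to be grayscale lists of rows.

```python
import math
import statistics
from typing import List, Tuple

Image = List[List[float]]  # grayscale image as rows of intensities

# Prewitt kernels: horizontal (x) and vertical (y) intensity differences.
PREWITT_X = [[-1, 0, 1], [-1, 0, 1], [-1, 0, 1]]
PREWITT_Y = [[-1, -1, -1], [0, 0, 0], [1, 1, 1]]


def apply_kernel(img: Image, kernel: List[List[int]], r: int, c: int) -> float:
    """Correlate a 3x3 kernel with the neighborhood centered on (r, c).

    Skipping the kernel flip of true convolution only changes the sign,
    which the magnitude below discards.
    """
    return sum(kernel[i][j] * img[r + i - 1][c + j - 1]
               for i in range(3) for j in range(3))


def prewitt_magnitude(img: Image) -> Image:
    """Gradient magnitude for interior pixels; the 1-pixel border stays 0."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for r in range(1, h - 1):
        for c in range(1, w - 1):
            gx = apply_kernel(img, PREWITT_X, r, c)
            gy = apply_kernel(img, PREWITT_Y, r, c)
            out[r][c] = math.hypot(gx, gy)
    return out


def auto_canny_thresholds(img: Image, sigma: float = 0.33) -> Tuple[float, float]:
    """Median-based (low, high) thresholds, one heuristic for an 'auto' mode."""
    median = statistics.median(v for row in img for v in row)
    return max(0.0, (1 - sigma) * median), min(255.0, (1 + sigma) * median)
```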

I have also been researching technologies for image recognition via machine learning with multiple channels. The idea is that a single “object” in the AI can have multiple images associated with it, which is necessary for my project.

CS488 – Week 3

This week I was able to create a saved checkpoint of my learning model for semantic relation extraction. This hopefully means I won’t need to train it further and can now focus on feeding it my data, which first needs to be pre-processed. A basic GUI window was also up and running this week with PyQt5, which was great to see! I will be writing more code in the coming weeks, so I need to make sure my project files are organized.

CS 488 – Week 3

This week, I tried to implement some models and was hoping to run them on our Layout server with GPUs. However, the system admins were still working on the server and I could not ssh to it. Therefore, I created a Google Cloud free trial account and started writing and testing my model on their servers.

CS 488 – Week 3

Since a significant part of my project involves marketing, my instructor advised me to talk to Seth and other professors about how I should approach building a dataset. After talking to them, I have decided that a good approach would be to create a dataset using readability formulas: first I will calculate the average readability, and then I will filter the dataset using that average. A marketing dataset has been extremely hard to find, but asking around led me to the Groupon API. It lets me get 100 deals per second, which should let me scrape millions of deals in a few days, and I plan to run a script in the background that does this. Since last week, I have also successfully implemented word2vec using Gensim, a Python library.
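The post does not say which readability formulas will be used. The sketch below assumes the Flesch Reading Ease score as one well-known example (higher means easier to read), then splits texts around the average score as described. The syllable counter is a rough vowel-group heuristic, so scores are approximate. The helper names are hypothetical.

```python
import re
from statistics import mean
from typing import List, Tuple


def count_syllables(word: str) -> int:
    """Rough vowel-group heuristic; exact counts would need a dictionary."""
    groups = re.findall(r"[aeiouy]+", word.lower())
    count = len(groups)
    if word.lower().endswith("e") and count > 1:
        count -= 1  # silent trailing 'e', as in "make"
    return max(1, count)


def flesch_reading_ease(text: str) -> float:
    """206.835 - 1.015 * (words / sentences) - 84.6 * (syllables / words)."""
    sentences = max(1, len(re.findall(r"[.!?]+", text)))
    words = re.findall(r"[A-Za-z']+", text)
    if not words:
        return 0.0
    syllables = sum(count_syllables(w) for w in words)
    return (206.835 - 1.015 * (len(words) / sentences)
            - 84.6 * (syllables / len(words)))


def split_by_average(texts: List[str]) -> Tuple[List[str], List[str]]:
    """Split texts into (at or above average, below average) readability."""
    scores = [flesch_reading_ease(t) for t in texts]
    avg = mean(scores)
    above = [t for t, s in zip(texts, scores) if s >= avg]
    below = [t for t, s in zip(texts, scores) if s < avg]
    return above, below


if __name__ == "__main__":
    deals = ["The cat sat.",
             "Comprehensive institutional considerations necessitate deliberation."]
    print(split_by_average(deals))
```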

CS 488 – Week 3 – Update

In the past week, I worked on calculating the cosine similarity between the ingredient composition of an inputted item and that of the rest of the items in the data. I am struggling to decide which formula to use for this, since the related project used a different equation from the “typical” formula used to compute cosine similarity. I will need to look into this more next week.
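The related project's variant is not given here, so only the "typical" definition is written out. Here a_i and b_i are assumed to be the amounts (or presence flags) of ingredient i in the two items being compared, out of n possible ingredients:

```latex
\cos(\mathbf{a}, \mathbf{b})
  = \frac{\sum_{i=1}^{n} a_i b_i}
         {\sqrt{\sum_{i=1}^{n} a_i^{2}}\,\sqrt{\sum_{i=1}^{n} b_i^{2}}}
```

For example, with three possible ingredients, a = (1, 1, 0) and b = (1, 0, 1) share one ingredient. The numerator is 1, each norm is the square root of 2, and the cosine similarity is 1/2. Checking an alternative equation against a few hand-computed pairs like this one is a quick way to see whether the two formulas actually differ.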

CS388 – Week 3 – Third Idea

- Name of Your Project

A Real Time Fall Detection System to Assist the Elderly Using Deep Neural Networks

- What research topic/question your project is going to address?

Elderly people have a high chance of falling and getting injured or fainting, which can put them in danger if they are alone. One way to help them is a system that monitors their actions, detects a fall and their behavior afterward, classifies the level of severity, and sends an alert to their emergency contacts or the emergency room if the level is serious.

- What technology will be used in your project?

Deep learning, pattern recognition, image processing

- What software and hardware will be needed for your project?

Python, PyTorch (or Keras)

I might also need a CCTV camera if I decide to build the actual device.

- How are you planning to implement?

First, I will apply some image processing techniques to enhance image and video quality. If the dataset is small, I will use image data augmentation techniques to produce more data. Then I will train a deep neural network that detects a person falling in the frame, and use photos and videos of people falling to train a model that classifies the level of severity. When the severity index passes a threshold, the system will send out an alert.
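As a small illustration of the augmentation step, the sketch below turns each grayscale frame into several training examples by mirroring it left to right and re-lighting it. The re-lighting may also help with the lighting differences a real camera would see. Frames are assumed to be lists of 0-255 rows, and the brightness factors are arbitrary placeholders. A real pipeline would more likely use the transforms built into torchvision or Keras.

```python
from typing import List, Sequence

Frame = List[List[int]]  # grayscale frame, values 0-255


def horizontal_flip(frame: Frame) -> Frame:
    """Mirror left to right; a fall is equally plausible in either direction."""
    return [list(reversed(row)) for row in frame]


def adjust_brightness(frame: Frame, factor: float) -> Frame:
    """Scale intensities and clip to 0-255 to mimic different lighting."""
    return [[max(0, min(255, round(v * factor))) for v in row] for row in frame]


def augment(frames: List[Frame],
            factors: Sequence[float] = (0.8, 1.2)) -> List[Frame]:
    """Return each original frame plus a flipped copy and re-lit copies."""
    out: List[Frame] = []
    for f in frames:
        out.append(f)
        out.append(horizontal_flip(f))
        out.extend(adjust_brightness(f, k) for k in factors)
    return out


if __name__ == "__main__":
    frame = [[10, 200], [0, 250]]
    for variant in augment([frame]):
        print(variant)
```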

- How is your project different from others? What’s new in your project?

Several projects work on similar problems, but most of them only detect the falling action. In this project, I hope to build a more detailed system that can decide whether a case is an emergency.

- What’s the difficulties of your project? What problems you might encounter during your project?

I might not be able to find a big enough dataset to train the model.

CS488-Week3-Update

I worked on possible ways of proving that non-contiguous Parks is NP-Complete, and found one good avenue for exploration. Over the week, I produced a general technique to convert any instance of 3-SAT into an instance of the non-contiguous Parks puzzle. Since a proposed Parks solution can also be checked in polynomial time, this proves that non-contiguous Parks is NP-Complete, our first major result. This week, I am working on modifying the proof, or trying similar techniques, for the contiguous case.
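A 3-SAT reduction shows that Parks is NP-hard. The other half of an NP-completeness proof is showing that the puzzle is in NP, that is, that a proposed solution can be checked in polynomial time. The verifier below sketches that check under the common rules, which are assumed here: an n x n grid split into n parks, exactly one tree in every row, column, and park, and no two trees touching, even diagonally. The function name and input format are hypothetical. Because the check never looks at park shapes, it covers the non-contiguous variant too.

```python
from typing import List, Set, Tuple

Cell = Tuple[int, int]  # (row, column)


def is_valid_parks_solution(parks: List[List[int]], trees: Set[Cell]) -> bool:
    """Check a proposed Parks solution in time polynomial (here O(n^2)) in n.

    `parks[r][c]` is the id of the park containing cell (r, c). Parks may be
    non-contiguous: only the ids matter, never the shapes.
    """
    n = len(parks)
    if any(not (0 <= r < n and 0 <= c < n) for r, c in trees):
        return False
    park_ids = {p for row in parks for p in row}
    if len(trees) != n or len(park_ids) != n:
        return False
    # n trees in n distinct rows, columns, and parks means exactly one in each.
    if (len({r for r, _ in trees}) != n or len({c for _, c in trees}) != n
            or len({parks[r][c] for r, c in trees}) != n):
        return False
    # No two trees may touch, including diagonally.
    for r, c in trees:
        for dr in (-1, 0, 1):
            for dc in (-1, 0, 1):
                if (dr, dc) != (0, 0) and (r + dr, c + dc) in trees:
                    return False
    return True


if __name__ == "__main__":
    row_parks = [[0] * 4, [1] * 4, [2] * 4, [3] * 4]  # each row is its own park
    print(is_valid_parks_solution(row_parks, {(0, 1), (1, 3), (2, 0), (3, 2)}))
```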

CS 488 – Update – Week 3

- I have started to keep a log of what I do every day for this project, so if something goes wrong I know where to back up and begin again. This will also help later when writing about my process for the poster/paper.

- I have started mapping all the datasets I found to the papers that used them, so that I can figure out which papers I could replicate.

- I have also started trying to replicate papers using Weka, just to make sure I’ve set everything up correctly so that I can properly set up my own tools.

- However, I’m having issues with how vague the research papers are. To fix that, I think I’ll need to email the researchers with more questions so I can actually replicate their work and know what tools they used.

Idea 3

- Name of Project

Automating laptop checkouts from the CST front desk using image recognition

- What research topic/question your project is going to address?

Although we need a human to address the needs of guests at the CST welcome desk, it would be ideal for workers and students if we could automate the Mac checkout process. Humans are prone to error, and we do not want any student worker to be liable for errors that could cost them thousands of dollars. This project would let a machine handle the checkout, using a camera to identify the laptop and the student checking it out, and remove the process from the desk worker completely.

- What technology will be used in your project?

Image recognition, machine learning models,

- What software and hardware will be needed for your project?

This would need a good-quality camera, Python, and some database management software.

- How are you planning to implement?

I plan to have a camera stationed above the CST desk. I also think it would be beneficial to replace the barcodes on the laptops with bigger QR codes for easier recognition and better visibility to the camera. I will use various machine learning models to train the software to recognize students and identify individual laptops. The system should also email reservation details to students, like the current system does.

- How is your project different from others? What’s new in your project?

This differs from the current process in that it removes human responsibility from the procedure. This will hopefully reduce human error and decrease financial liability for student workers and the institution. It is also scalable to other use cases (like the Runyan desk, for example) to increase automation and improve efficiency.

- What’s the difficulties of your project? What problems you might encounter during your project?

The problem I anticipate is making sure my model does not misidentify students checking out a laptop, or mistake someone walking by the CST desk for someone checking out a product. Lighting might also be an issue, as the desk is beside huge windows, so the lighting is very different at night vs. during the day, or in summer vs. winter. Another issue to consider is camera quality (I need to get a good camera on a reasonable budget).

Idea Number 3

- Name of Your Project

Ans: SARS

- What research topic/question your project is going to address?

Ans: Using trained neural nets to tell when a statement/sentence is sarcastic

- What technology will be used in your project?

Ans: NLTK and

- What software and hardware will be needed for your project?

Ans: Botmock is the only software that will be needed for this project

- How are you planning to implement?

Ans: I plan on making this an extension of Botmock

- How is your project different from others? What’s new in your project?

Ans: With my project, I am using the same CNN model hierarchy used in sentiment analysis to learn the context and space in which a sentence exists.

- What’s the difficulties of your project? What problems you might encounter during your project?

Ans: Every sarcastic statement exists in a defined space, and one difficulty of this project is trying to draw a boundary around that space. Another problem would be getting access to Botmock’s API to make this application compatible.

CS 488 – Week 3 – Update

This past week I really dug into my physical testing. Using Kali Linux and a wireless adapter that supports monitor mode, I was able to use commands to see which networks were available and, from there, see all of the clients connected to each network. However, I could only see the MAC address of each device, nothing more. I then moved to Wireshark, which showed me a little more data: I could potentially see what type of device it was. However, all data on ECSecure was encrypted. Trying to break the encryption was hard, as we have hundreds of users with different passwords; it’s not just a single password for the ECSecure network (that would be too easy to break). I plan to continue this testing and see what else I can find through ECOpen.

I have also started to set up my social engineering experiment so that everything is ready when the start date arrives.

CS 488 – Week 3

I worked on the login page setup and am almost done with the forgot-password setup. I have to decide on the database for the login: whether it will have its own database or use a single SQL database for the whole application. I plan on working on the user interface features in the coming week. This involves setting up a database to store books, setting storage attributes, etc. This is the main portion of the project and will take the most time.
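To make the database question above concrete, here is a minimal sketch of the single-database option using SQLite from Python's standard library, where one file holds both the login table and the book table. Table and column names are hypothetical, and the forgot-password flow is not covered. Passwords are stored as salted PBKDF2 hashes rather than plain text. A separate login database would simply hold the same `users` table on its own.

```python
import hashlib
import hmac
import os
import sqlite3


def create_schema(conn: sqlite3.Connection) -> None:
    """One SQLite database holding both the login table and the book table."""
    conn.execute("PRAGMA foreign_keys = ON")  # SQLite enforces REFERENCES only if on
    conn.executescript(
        """
        CREATE TABLE IF NOT EXISTS users (
            username TEXT PRIMARY KEY,
            salt     BLOB NOT NULL,
            pw_hash  BLOB NOT NULL
        );
        CREATE TABLE IF NOT EXISTS books (
            id     INTEGER PRIMARY KEY,
            owner  TEXT NOT NULL REFERENCES users(username),
            title  TEXT NOT NULL,
            author TEXT
        );
        """
    )


def _hash(password: str, salt: bytes) -> bytes:
    # Many PBKDF2 iterations make brute-forcing a stolen hash slow.
    return hashlib.pbkdf2_hmac("sha256", password.encode(), salt, 200_000)


def register(conn: sqlite3.Connection, username: str, password: str) -> None:
    salt = os.urandom(16)
    with conn:  # commits on success, rolls back on error
        conn.execute("INSERT INTO users VALUES (?, ?, ?)",
                     (username, salt, _hash(password, salt)))


def check_login(conn: sqlite3.Connection, username: str, password: str) -> bool:
    row = conn.execute("SELECT salt, pw_hash FROM users WHERE username = ?",
                       (username,)).fetchone()
    if row is None:
        return False
    salt, stored = row
    # compare_digest avoids leaking information through timing differences.
    return hmac.compare_digest(_hash(password, salt), stored)


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    create_schema(conn)
    register(conn, "alice", "s3cret")
    print(check_login(conn, "alice", "s3cret"), check_login(conn, "alice", "nope"))
```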

Week 2

I have spent some time thinking about how to break the timeline down in more detail. I met with Charlie and decided that the program should take a batch of images as input rather than a video. The next step is to learn more about the photography side of things.