https://drive.google.com/open?id=1fUOLlG9baN0_dGJD2Bqx9DcA0nGyknnr

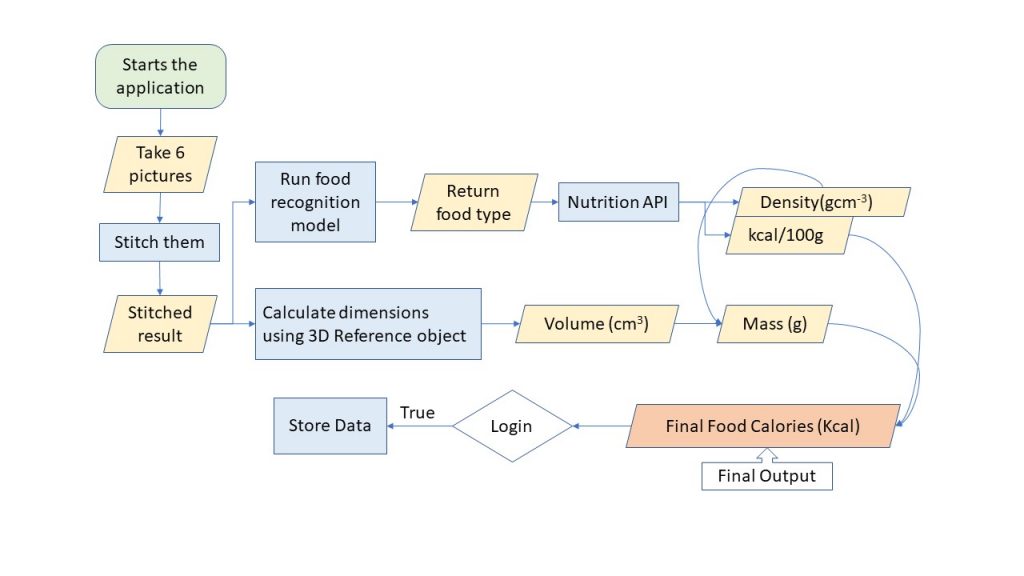

Capstone Poster – Image-based Fruit Calories Estimation using 3D Dimensions

Please refer to this link if the uploaded document is not working.

CS 488 – Week 12 Update

I am working on distance calculation. I had to start from scratch since there are no tutorials online to follow, so it is taking a long time. The image model is still not working, so I am going to have the user input the fruit name instead. I am going to wrap up the application development. Next week will be for accuracy testing.
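The update does not say which method the distance calculation uses. One common starting point, shown here only as a minimal sketch and not necessarily the approach taken in this project, is the pinhole camera relation: distance = real width × focal length (in pixels) ÷ width in the image (in pixels). The function name, parameter names, and the numbers in the usage line are all hypothetical.

```python
def estimate_distance_cm(real_width_cm, focal_length_px, width_in_image_px):
    """Estimate camera-to-object distance with the pinhole camera model.

    All three inputs are assumptions for illustration: the object's true
    width, the camera focal length expressed in pixels, and the object's
    measured width in the image.
    """
    if real_width_cm <= 0 or focal_length_px <= 0 or width_in_image_px <= 0:
        raise ValueError("all inputs must be positive")
    # Similar triangles: real_width / distance == image_width / focal_length
    return real_width_cm * focal_length_px / width_in_image_px


# Illustrative values only, not measurements from the project.
print(estimate_distance_cm(real_width_cm=8.0, focal_length_px=1000.0, width_in_image_px=200.0))
```

The focal length in pixels would normally come from a one-time calibration with an object of known size at a known distance.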

CS 488 – Week 11 Update

I worked on the project poster. I also continued working on software demo v2 and finally submitted it. I discussed scaling the accuracy testing with Becky, along with the method I should use. I have found that the food density data I was planning to use is very limited, so I am going to add more data by manual calculation. I am focusing on fruits instead of foods in general.

CS 488 – Week 10 Update

I worked on integrating the camera into my application. I found the bug in the image processing, but I am still working on finding a way to install the CUDA toolkit on a Google Cloud VM. I also worked on software demo version 2. I made some changes to my project to scale it down and discussed those with Becky.

CS 488 – Week 9 Update

I was mainly in the process of transitioning.

CS 488 – Week 7 Updates

This week, I worked on integrating pieces of code into an application. I completed the login and register pages with an SQL database. Since I usually work on bowie and it is down, I decided to install all dependencies on my local VM. It took quite some time to figure out the TensorFlow GPU and CPU installations. I also worked on creating a video demo of my project.

CS388 – Week 13 Update

I made final edits to my presentation and finished third-pass readings of all the papers I have found. I have also revised my design by adding more details to it. I found a book about OpenCV projects, so I have started implementing an application for image recognition. I am still working on my final draft proposal. I did more research on the Android camera API to see what I can and cannot use for my application. I plan to implement small chunks of my senior project during winter break, so I am looking for online resources to walk me through the process.

CS388 – Week 12 Updates

I found an API for food calories. I worked on the second draft of my proposal. I explored an image editing program I am thinking of using.

CS 388 – Week 11 Updates

I realized that the food nutrition database I found is not enough for calorie estimation, so I looked for more resources. I started learning SQL.

CS 388 – Week 10 Updates

Finished the first draft of my proposal. Explored software for image processing.

CS 388 – Week 9 Updates

I discussed my new idea with Charlie and Xunfei. I searched for more papers about 3D modeling and volume estimation but could not find many. I will be creating an Android application, so I looked into the Android camera API and found that I can specify a required distance between the food and the phone camera and wait until it is satisfied. I plan to include face recognition as authentication for privacy purposes and found a GitHub repo that I can use for it. I also found a paper that is more closely related to my project than anything I had found so far.

CS 388 – Week 8 Updates

After coming back from the CMU workshop on CS research, I decided to modify my idea a bit to integrate more CV into the project. Instead of both recipe recommendation and calorie estimation, I will focus only on calorie estimation. Many calorie estimation applications require users to include a reference object when taking a picture of food. Although this method has brought food calorie estimation to a new level of accuracy, it is inconvenient because users need to have the reference object with them at all times.

In my project, I aim to solve this problem and to improve the accuracy of calorie estimation further. Users will scan the reference object the first time they set up the application. The scanned object will be saved in the database as a 3D object with its area and volume. The next time the user scans food, the saved object will appear next to the food. The two will be compared to extract the volume of the food, and the calories will be estimated from that volume.
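As a rough illustration of the last step, going from volume to calories, here is a minimal sketch. It assumes the conversion goes through mass using a per-food density and a calories-per-100-grams figure. The function name, parameter names, and the example numbers are hypothetical placeholders, not values from my dataset.

```python
def estimate_calories(volume_cm3, density_g_per_cm3, kcal_per_100g):
    """Convert an estimated food volume to calories via its mass.

    density_g_per_cm3 and kcal_per_100g are assumed to come from a
    lookup table for the specific food; they are passed in explicitly here.
    """
    if volume_cm3 < 0 or density_g_per_cm3 <= 0 or kcal_per_100g < 0:
        raise ValueError("volume and kcal must be non-negative, density positive")
    mass_g = volume_cm3 * density_g_per_cm3  # mass = volume x density
    return mass_g * kcal_per_100g / 100.0    # scale the per-100 g value to the mass


# Illustrative numbers only, not real nutrition data.
print(round(estimate_calories(volume_cm3=200.0, density_g_per_cm3=0.8, kcal_per_100g=50.0), 1))
```

Keeping density and calorie values as inputs means the same function works for any food once a table of those two numbers is available.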

CS 388 – Week 7 Updates

I read and did more research on different algorithms of recipe recommendation. I removed some papers from my box that turned out to be not quite related and added some more papers. I also wrote the final version of my literature review.

CS 388 – Week 6 Updates

I have decided on the project I will be working on as my senior project. I talked to Charlie about it and discussed my ideas for the project. He will be my advisor. I have found 10 more papers and a couple of technologies I might use. I have also found the food and recipe datasets I will be using for my project.

My final idea is a nutrition management and recipe recommendation system. Users will be able to scan the ingredients they have using the app, and the app will recommend recipes based on information the user has entered beforehand, such as allergies or foods they don’t want to or cannot consume. The next step of my project will be estimating the calories of the food the user will consume. For this part, I plan to use texture mapping and scanning to get the best estimate of calories and ingredients. To address privacy, I plan to have users scan their face on first use of the app and use an API to determine whether the current user is the owner of the account. I am still thinking about possible ways to detect liquid ingredients and seasonings in the food.

CS 388 – Week 5 Updates

I talked to Xunfei about my 3 ideas and decided to discard one of them because of overhead issues. I wrote 2 annotated bibliographies, one for each remaining idea, with 3 papers each. I have also done more research on both ideas.

CS388 – Week 4 Updates

I wrote 3 annotated bibliographies, one for each idea, each covering 2 papers I found for that idea. I went to San Diego to attend the Tapia conference on diversity in computing.

CS388 – Week 3 Updates

Talked to Charlie about my ideas and got feedback from him. Found 5 papers for each idea.

CS 388 – Week 2 (3 Ideas)

- My first idea is to create an application that scans pictures of food and lets users know what ingredients are in the dish. I am still deciding whether I want my app to be used as a diet and nutrition guide or as an aid for visually impaired people. This application will be available in multiple languages (at least 3). Xunfei provided me with more questions to explore as feedback. I will integrate computer vision and natural language processing in this project.

- My second idea is a program that detects prank calls made to 911 or other emergency centers. I will focus on details of the caller’s speech such as urgency, intonation, and breathing, as well as background noise, such as whether the background is too quiet or too loud or whether there are any footsteps.

- My third idea is to generate speech from a user’s hand gestures in several languages. I plan to piggyback on an existing, working program that translates hand gestures to speech. My main focus will be on improving that program and on the accuracy of the language translations.