The repository for this software can be found at https://gitlab.cluster.earlham.edu/senior-capstones-2020/laurence-ruberl-capstone

Week of March 23

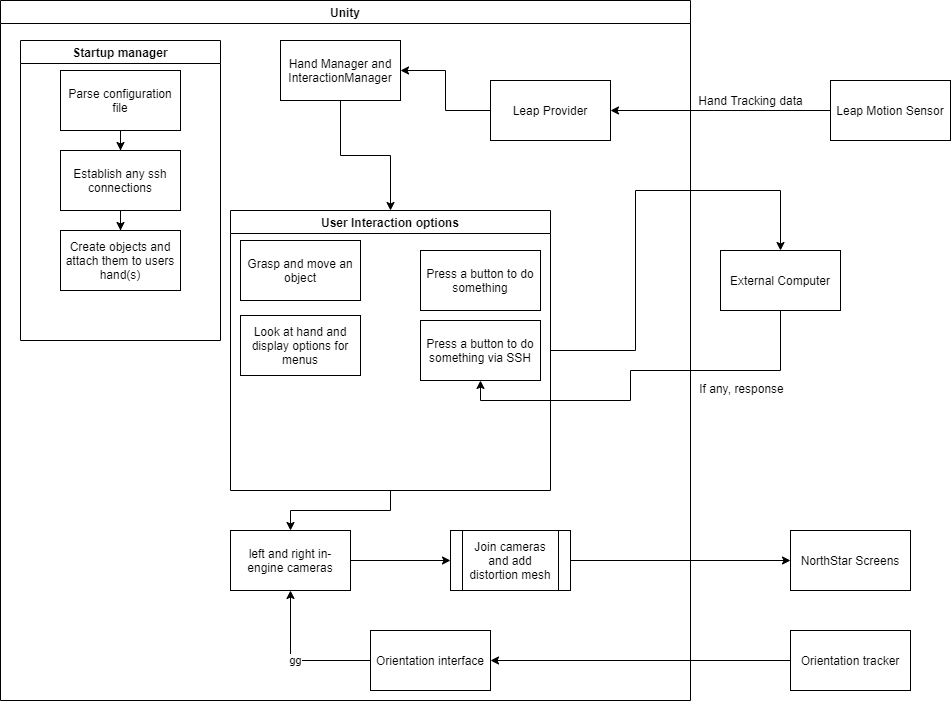

I’ve done some work on the configuration parser to enable the core of the project: allowing a user to generate a UI with functionality from a text file. All I’ve been able to do so far at runtime is have the user select whether they are using VR mode or AR mode. On the backend, I’ve set up a lot of methods and variables and run the first tests of SSH and Telnet (long story) integration, which didn’t turn out the way I wanted. Next on my list is to create the actual objects that the user defines and start hooking up the ability for those objects to do things.
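As a rough illustration of the idea (not the project's actual parser), here is a minimal Python sketch of turning a text file into a display mode plus a list of UI objects. The INI-style layout, the `[display]` section, the `kind` and `action` keys, and the names `UIElement` and `parse_ui_config` are all assumptions made up for this example.

```python
import configparser
from dataclasses import dataclass
from typing import List, Tuple

# Hypothetical: the two display modes the user can choose between.
VALID_MODES = {"VR", "AR"}


@dataclass
class UIElement:
    """One user-defined UI object (hypothetical shape)."""
    name: str    # section name in the config text
    kind: str    # e.g. "button"
    action: str  # name of the behaviour to hook up later


def parse_ui_config(text: str) -> Tuple[str, List[UIElement]]:
    """Parse INI-style config text into (mode, elements)."""
    parser = configparser.ConfigParser()
    parser.read_string(text)

    # Default to AR when no mode is given; reject anything unknown.
    mode = parser.get("display", "mode", fallback="AR").upper()
    if mode not in VALID_MODES:
        raise ValueError(f"unknown display mode: {mode!r}")

    # Every section other than [display] describes one UI object.
    elements = []
    for section in parser.sections():
        if section == "display":
            continue
        elements.append(
            UIElement(
                name=section,
                kind=parser.get(section, "kind", fallback="button"),
                action=parser.get(section, "action", fallback=""),
            )
        )
    return mode, elements


# Made-up sample config for the sketch.
SAMPLE_CONFIG = """
[display]
mode = vr

[go_button]
kind = button
action = fire_cue
"""

if __name__ == "__main__":
    mode, elements = parse_ui_config(SAMPLE_CONFIG)
    print(mode)
    for element in elements:
        print(element)
```

Keeping the parsed result as plain data (a mode string and a list of elements) means the step that actually builds objects in the headset can be written and tested separately from the file format.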

Update up to 2/5/2020

This past month I have mostly been working on getting everything for my project running and fighting some major issues. The first issue, which is almost solved, is that the NorthStar uses DisplayPort out while my computer only has an HDMI port and a Mini DisplayPort in. It turns out that HDMI outputs are not compatible with DisplayPort, so the adapter I got for that does not appear to work. I am investigating getting the proper port by the end of this week.

The other issue I had to fight was that for a week and a half I did not have my primary computer, since it was broken and needed to be repaired. I had a much less capable backup computer that I used to test the hypothesis above, so I was not entirely useless during that time.

This coming week and weekend, I am hoping to have a fully functioning environment running and to be able to display tracked hands in the headset, depending on the availability of an adapter.

Week of 9/9

I have finished buying the parts to make the headset and I have finished setting up a Unity environment, but I haven’t been able to run tests there yet.

Mega Update

This is a mega update to make up for several weeks of missing updates.

- I did a preliminary cost analysis for my first idea, which would result in a budget of either $400 or $3500 depending on what hardware I went with.

- I applied for the Yunger Fellowship as a funding source to afford the hardware needed for my first project idea. Unfortunately, I did not end up getting it, so I called in a favor for an alternative source of funding.

- In the midst of my research, I discovered that, as near as I can tell, no one has ever published research on applying Augmented Reality to the management aspect of theatre, only the artistic side.

- I decided to combine my first and second ideas and switch from using Microsoft HoloLens as the hardware to Leap Motion’s Project NorthStar, an open-source Augmented Reality device, after Microsoft decided that they couldn’t care less about small independent developer types such as myself.

- After settling on NorthStar, I started delving into the Unity APIs as well as sourcing hardware and 3D printing capabilities.

- Currently, I am waiting for the Leap Motion sensor to arrive in the mail so I can do concrete work with the APIs, and for my test print to finish so I can determine whether I need to buy the recommended filament or whether the one we use will suffice.

Topic ideas

Here are my ideas for topics! Another post with some cost analyses is coming soon!

Topic Name: Real-time management using Augmented Reality

Topic Description: Examine the applications of head-mounted augmented reality displays such as HoloLens or Project NorthStar in real-time management scenarios like Theatre Stage Management or NASA rocket launch management, and implement basic proof-of-concept software to eventually be used in the Theatre department as part of my Theatre Capstone.

Topic Name: Using real-time spatial mapping to improve calling for stage managers during performances

Topic Description: Examine the feasibility of using technologies such as Kinect 2.0 in theatre to allow stage managers to keep better track of the positions, etc., of their actors, allowing them to make more accurate cue calls when their view of the actors might be obscured, and implement a proof-of-concept application for use in my Theatre Capstone.

Topic Name: Using microcontrollers to facilitate cross-device communication and control

Topic Description: Use Arduinos or Raspberry Pis to allow two or more very different devices to be controlled by another device. For example, allow QLab on a computer and cues on a light board to be controlled from a single application running on a different computer. If feasible, make a proof-of-concept for use in my Theatre Capstone.