Here’s the final draft of my paper to finish off the semester:

Last Program Update

Yesterday, I just changed the name of the executable file to “konductor” simply because I wanted the command to be a little more descriptive over just “main,” and all related files have also been updated to reflect the change. Unless something else happens, this will probably be my last program update for the semester.

Meanwhile, today is all about finishing my 2nd draft so that I can work on other projects due tomorrow.

Progress on Paper and Program

Today, I tried getting rid of the sleep function from OpenCV that watches when a key has been pressed so that the program can quit safely. This is the only sleep function left in the program that could possibly interfere with the timekeeping functions in the program, and keyboard control is not necessarily a key part of the program either. However, I couldn’t find any other alternatives in the OpenCV reference; I was looking for a callback function that is invoked when a window is closed, but that doesn’t seem to exist in OpenCV.

Nevertheless, I have been continuing to work on my paper and adding the new changes to it, although I don’t know if I’ll have time to finish testing the program before the poster is due on Friday.

Demo Video and Small Update

Before I forget, here’s the link to the video of the demo I played in my presentation. This may be updated as I continue testing the program.

Today’s update mainly consisted of a style change that moved all of my variables and functions (except the main function) to a separate header file so that my main.c file looks nice and clean. I may also separate the functions into their own groups and header files, as long as I don’t break any dependencies on other functions or variables.

Post-Presentation Thoughts

Today’s program update was all about moving blocks of code around so that my main function is only six lines long, while also adding dash options (-m, -f, -h) to my program as a typical command would.

Now that my presentation is done and I have looked through the XKin library a little more, I’ll soon try to implement XKin’s advanced method for getting hand contours and see if that helps make the centroid point of the hand, and thus the hand motions, more stable. In the meantime, though, my paper is in need of major updates.

Before Testing

I forgot that I also needed to compose an actual orchestral piece to demo my program with, so that’s what I did for the entire evening. I didn’t actually compose an original piece, but rather just took Beethoven’s Ode to Joy and adapted it for a woodwind quintet (since I don’t have time to compose for a full orchestra). Soon, I’ll actually test my program and try to improve my beat detection algorithm wherever I can.

I also tried adding the optional features into my program for a little bit, but then quickly realized they would take more time than I have, so I decided to ditch them. I did, however, add back the drawing of the hand contour (albeit slightly modified) to make sure that XKin is still detecting my hand and nothing but my hand.

Core Program Complete!

Yesterday was a pretty big day as I was able to implement FluidSynth’s sequencer with fewer lines of code than I originally thought I needed. While the program is still not completely perfect, I was able to take the average number of clock ticks per beat and use that to schedule MIDI note messages that do not occur right on the beat. I may make some more adjustments to make the beat detection and tempo more stable, but otherwise, the core functionality of my program is basically done. Now I need to record myself using the program for demo purposes and measure beat detection accuracy for testing purposes.
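The idea of placing off-beat notes using the average number of clock ticks per beat can be sketched in plain C as follows. This is only an illustration of the arithmetic, not the code in my program: the function names and the array of beat timestamps are hypothetical, and the resulting tick value would still have to be handed to FluidSynth’s sequencer separately.

```c
#include <stddef.h>

/* Average clock ticks per beat over a run of detected beats.
 * beat_ticks holds the clock tick at which each beat was detected,
 * oldest first. Returns 0 if there is not yet a full interval. */
long average_ticks_per_beat(const long *beat_ticks, size_t count)
{
    if (count < 2)
        return 0;
    return (beat_ticks[count - 1] - beat_ticks[0]) / (long)(count - 1);
}

/* Clock tick at which a note that falls numer/denom of the way
 * through the current beat should sound (e.g. 1/4 for the second
 * 16th note of a quarter-note beat). */
long offbeat_tick(long last_beat_tick, long ticks_per_beat,
                  int numer, int denom)
{
    return last_beat_tick + ticks_per_beat * numer / denom;
}
```

For example, with beats detected at ticks 1000, 1500, 2020, and 2500, the average is 500 ticks per beat, so a note a quarter of the way into the next beat would be scheduled for tick 2625.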

Two other optional things I can do to make my program better are to improve the GUI by adding information to the window, and to add measure numbers to group beats together. Both serve to make the program and the music, respectively, a little more readable.

Learning to Schedule MIDI Events

The past few days have been pretty uneventful, since I’m still feeling sick and I had to work on another paper for another class, but I am still reading through the FluidSynth API and figuring out how scheduling MIDI events works. FluidSynth also seems to have its own method of counting time through ticks, so I may be able to replace the current timestamps I have from <time.h> with FluidSynth’s synthesizer ticks.

Configuring JACK and Other Instruments

Today, I found out how to automatically connect the FluidSynth audio output to the computer’s audio device input without having to manually make the connection in QjackCtl every time I run the program, and how to map each instrument to a specific channel. The FluidSynth API uses the “fluid_settings_setint” and “fluid_synth_program_select” functions, respectively, for such tasks. Both features are now integrated into the program, and I also allow the users to change the channel to instrument mapping as they see fit as well.

Now, the last thing I need to do to make this program a true virtual conductor is to incorporate tempo in such a way that I don’t have to limit myself and the music to eighth notes or longer anymore. Earlier today, Forrest also suggested that I use an integer tick counter of some kind to subdivide each beat instead of a floating point number. Also, for some reason, I’m still getting wildly inaccurate BPM readings, even without the PortAudio sleep function in the way anymore, but I may still be able to use the clock ticks themselves to my advantage. A simple ratio can convert clock ticks to MIDI ticks easily, but I still need to figure out how I can trigger note on/off messages after a certain number of clock ticks have elapsed.
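As a rough sketch of that ratio and of an integer subdivision counter, the two helpers below work entirely in integers. The function names and the example resolution of 480 MIDI ticks per beat are assumptions for illustration only.

```c
/* Convert clock ticks elapsed since the last beat into MIDI ticks.
 * clock_ticks_per_beat is the measured (average) beat length in clock
 * ticks; midi_ticks_per_beat is the MIDI resolution of one beat. */
long clock_to_midi_ticks(long clock_ticks, long clock_ticks_per_beat,
                         long midi_ticks_per_beat)
{
    if (clock_ticks_per_beat <= 0)
        return 0;  /* no tempo estimate yet */
    return clock_ticks * midi_ticks_per_beat / clock_ticks_per_beat;
}

/* Which subdivision of the beat a MIDI tick falls in, counting from 0.
 * With 4 subdivisions per beat, index 2 is the third 16th note. */
int subdivision_index(long midi_ticks, long midi_ticks_per_beat,
                      int subdivisions)
{
    return (int)(midi_ticks * subdivisions / midi_ticks_per_beat);
}
```

With a beat that lasts 500000 clock ticks and 480 MIDI ticks per beat, 250000 elapsed clock ticks map to MIDI tick 240, which is subdivision 2 of 4.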

Another Feature To Add

Yesterday, I changed the velocity value of every offbeat to make it the same as that of the previous onbeat, since the acceleration at each offbeat tends to be much smaller and the offbeat notes would otherwise be much quieter than desired. This change, however, also made me realize that my current method of counting beats may be improved by incorporating the tempo calculation, which I forgot about until now and which is not doing anything in my program yet. The goal would be to approximate when all offbeats (such as 16th notes) would occur using an average tempo instead of limiting myself to triggering notes precisely at every 8th note. While this method would not be able to react to sudden changes in tempo, it could be a quick way to open my program to all types of music and not just music with basic rhythms.

Late Minor Update

Yesterday, I only had time to make relatively small changes to my program, but I did expand the readme with more detail on how to use the program. Even more will be explained as I finish developing my program.

Also, I figured out that the reason for the bad points showing up so often was that part of the table was mistaken to be part of the hand, so I moved the Kinect camera a little higher, and sure enough, that issue was fixed. Now the only two important issues I have left to figure out are how to change the instrument that is mapped to each channel, and how the user will be allowed to change said mapping to fit the music. I may also try to figure out how to automatically connect the FluidSynth audio output to the device output of the computer, but I’m not completely sure if this is OS-specific.

Getting FluidSynth Working

In a shorter amount of time than I thought, I was not only able to add FluidSynth to my program, but also able to get working MIDI audio output from my short CSV files, although I had to configure JACK in such a way that all of the necessary parts are connected as well. For some reason, though, I’m seeing a lot more stuttering in the GUI lately and the hand position readings aren’t so smooth anymore, and the beats suffer as a result. I’ll try figuring out the cause soon, but now I have a paper to finish.

Minor Improvements

I briefly removed the timestamps from my program, but I didn’t notice any change in performance, so I just left them in the program as before. I also made my program a little more interesting by playing random notes instead of looping through a sequence of notes, and changed the beat counter to increment every eighth note instead of every quarter note. The latter change will be important when I finally replace PortAudio with FluidSynth.

I also played around with the VMPK and Qsynth programs in Linux to refresh myself on how MIDI playback works and how I can send MIDI messages from my program to one of these programs. Thanks to that, I now have a better idea of what I need to do with FluidSynth to make Kinect to MIDI playback happen. I also plan to have a CSV file that stores the following values for the music we want to play:

beatNumber, channelNumber, noteNumber, noteOn/Off

The instruments that correspond to each channel will have to be specified beforehand too.
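To make the planned file format a bit more concrete, here is a small sketch of how one line of such a CSV file could be read in C. The struct and function names are hypothetical, and encoding the noteOn/Off field as 1 for on and 0 for off is an assumption; the post above only fixes the order of the four fields.

```c
#include <stdio.h>

/* One row of the music file: beatNumber, channelNumber, noteNumber, noteOn/Off */
struct note_event {
    int beat;     /* beat counter value at which the event fires   */
    int channel;  /* MIDI channel, 0-15                            */
    int note;     /* MIDI note number, 0-127                       */
    int on;       /* assumed encoding: 1 = note on, 0 = note off   */
};

/* Parse one CSV line into ev. Returns 1 on success, 0 if the line is
 * malformed or a value is outside the MIDI range. */
int parse_event_line(const char *line, struct note_event *ev)
{
    /* Spaces in the format allow optional whitespace around commas. */
    int n = sscanf(line, "%d , %d , %d , %d",
                   &ev->beat, &ev->channel, &ev->note, &ev->on);

    if (n != 4)
        return 0;
    if (ev->beat < 0 || ev->channel < 0 || ev->channel > 15)
        return 0;
    if (ev->note < 0 || ev->note > 127)
        return 0;
    return ev->on == 0 || ev->on == 1;
}
```

A line such as "4,0,64,1" would then mean: on beat 4, channel 0, start playing note 64. A header line made of field names fails to parse and can simply be skipped.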

More Experimentation

The past few days have been really rough on me, as I attended the Techpoint Xtern Finalist reception all day yesterday, all while being sick with a sore throat and cold from the freezing weather recently. On a positive note, I used my spare time to continue writing my rough draft, so there wasn’t too much time lost.

Back to my program, I’ve added a number of features/improvements to it, the first one being adding timestamps for every recorded position in the hand so that I could actually calculate my velocity and acceleration using time. I did notice that the “frame rate” of the program dropped as a result, so I may try to reduce the number of timestamps later and minimize their usage. I also made sure that all of the points that fall outside the window are discarded to lessen the effect of reading points nowhere near the hand. This also means checking the distance between two consecutive points and discarding the last point if the distance is above some impossible value. I also use distance to make sure there are no false positives when the hand is still, so that the hand has to move a certain distance in order to register a beat. A number of musical functions have also been added for future use, such as converting a MIDI note number to the corresponding frequency of the sound.
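The point filtering described above can be sketched roughly as follows. The names, the pixel units, and the threshold parameters are all hypothetical; squared distances are compared so that no square root is needed.

```c
struct point { int x, y; };

/* 1 if p lies inside a width x height window, 0 otherwise. */
int in_window(struct point p, int width, int height)
{
    return p.x >= 0 && p.x < width && p.y >= 0 && p.y < height;
}

/* Squared Euclidean distance between two points. */
long dist_sq(struct point a, struct point b)
{
    long dx = (long)a.x - b.x;
    long dy = (long)a.y - b.y;
    return dx * dx + dy * dy;
}

/* Accept a new reading only if it is inside the window and did not
 * jump farther from the previous reading than max_jump pixels. */
int accept_point(struct point prev, struct point next,
                 int width, int height, long max_jump)
{
    if (!in_window(next, width, height))
        return 0;
    return dist_sq(prev, next) <= max_jump * max_jump;
}

/* A beat only counts if the hand has travelled at least min_travel
 * pixels since the last beat, which guards against a still hand. */
int moved_enough(struct point last_beat_pos, struct point now,
                 long min_travel)
{
    return dist_sq(last_beat_pos, now) >= min_travel * min_travel;
}
```

Suitable values for max_jump and min_travel depend on the window size and frame rate, so they would have to be tuned by experiment.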

Tempo Tracking and More Searching

Yesterday, as suggested by Forrest, I added the ability to calculate the current tempo of the music in BPM based on the amount of time in between the last two detected beats. It doesn’t attempt to ignore any false positive readings, and it doesn’t take into account the time taken up by the sleep function, but it’s a rough solution for now.
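The calculation itself is just 60 divided by the number of seconds between the two beats. A minimal sketch, with a hypothetical function name and timestamps assumed to be in seconds:

```c
/* Tempo in beats per minute from the timestamps (in seconds) of the
 * last two detected beats. Returns 0 if the interval is not positive.
 * Like the rough solution described above, this does no smoothing and
 * does not try to reject false positives. */
double bpm_from_beats(double prev_beat_s, double last_beat_s)
{
    double interval = last_beat_s - prev_beat_s;

    if (interval <= 0.0)
        return 0.0;
    return 60.0 / interval;
}
```

Two beats half a second apart give 120 BPM. Because only one interval is used, a single spurious beat changes the reading drastically.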

Now, the next major step I am hoping to take with this program is to use the beats to play some notes through MIDI messages. I am searching for libraries that will allow me to send MIDI note on/off messages to some basic synthesizer, and FluidSynth looks to be a decent option so far.

Fun with PortAudio and Next Steps

Today, I added the ability to change the volume of the sound based on the acceleration value, or how quickly the hand is moved, as well as change the frequency of the sound and thus change the note being played using a simple beat counter. I also noticed that the beat detection works almost flawlessly while I make the conductor’s motions repeatedly, which is a good sign that my threshold value is close to the ideal value, if there is one.

Now that the beat detection is working for the most part, the next thing I need to do is to figure out how to take these beats and either: a) convert them to MIDI messages, or b) route them through JACK to another application. Whichever library I find and use, it has to be one that doesn’t involve a sleep function that causes the entire program to freeze for the duration of the sleep.

Audio Implementation

I added the PortAudio functions necessary to enable simple playback and revised my beat detection algorithm to watch for both velocity and acceleration. My first impression of the application so far is that the latency from gesture to sound is pretty good, but I noticed that the program freezes while the sound is playing (due to the Pa_Sleep function, which controls the duration of the sound). This not only freezes the GUI, but could potentially mess up the velocity and acceleration readings as well. False positives or false negatives in the beats can also occur depending on the threshold that is set, and the detection algorithm still needs more improvement to prevent them as much as possible.

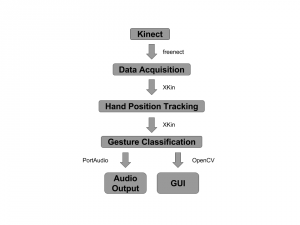

Current Design and Next Steps

Here’s the current version of the flow chart of my program design, although it will surely be revised as the program is revised.

I’ve also been thinking about how exactly the tracking of velocity and acceleration is going to work. At a bare minimum, I believe what we specifically want to detect is when the hand moves from negative velocity to positive velocity along the y-axis. A simple switch can watch for when the velocity was previously negative and trigger a beat when the velocity turns positive (or rises above some threshold value to prevent false positives) during each iteration of the main program loop. The amount of acceleration at that point, or how fast the motion is being done, can then determine the volume of the music at that beat.
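A sketch of that switch in C might look like the following. The names are hypothetical, and using the same threshold on both the negative and the positive side is an assumption made to keep the example short.

```c
/* State for the "simple switch": remembers whether the hand was
 * recently moving with negative y-velocity. */
struct beat_detector {
    int was_negative;
};

/* Call once per iteration of the main loop with the current y-velocity.
 * Returns 1 on the iteration where a beat is triggered, 0 otherwise. */
int beat_update(struct beat_detector *d, double vy, double threshold)
{
    if (vy < -threshold) {
        d->was_negative = 1;  /* arm the switch on clear negative motion */
        return 0;
    }
    if (d->was_negative && vy > threshold) {
        d->was_negative = 0;  /* disarm so one gesture gives one beat */
        return 1;
    }
    return 0;                 /* small velocities near zero are ignored */
}
```

Feeding it the velocities -5, -2, 0.5, 3, 4, -6, 2 with a threshold of 1 triggers a beat at 3 and again at 2, while the small reading 0.5 and the continued positive motion at 4 trigger nothing.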

Quick Update

Small update today since I have other assignments I need to finish.

I implemented a simple modified queue that stores the last few recorded positions of the hand in order to quickly calculate acceleration. I also learned a bit more about the OpenCV drawing functions and was able to replace drawing my hand itself on the screen with drawing a line trail showing the position and movement of the hand. Those points are all we care about and that makes debugging the program a little bit easier.
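The queue idea can be sketched as a tiny fixed-size history of y positions. This is an illustration rather than my actual code: the names are hypothetical, and it assumes the positions arrive at evenly spaced frames, so acceleration is expressed in pixels per frame squared.

```c
#define HISTORY_LEN 3

/* Fixed-size queue holding the most recent y positions, oldest first. */
struct history {
    int y[HISTORY_LEN];
    int count;
};

/* Append a position, dropping the oldest one once the queue is full. */
void history_push(struct history *h, int y)
{
    if (h->count == HISTORY_LEN) {
        for (int i = 1; i < HISTORY_LEN; i++)
            h->y[i - 1] = h->y[i];
        h->count--;
    }
    h->y[h->count++] = y;
}

/* Second difference of the last three positions, i.e. the change in
 * per-frame velocity. Returns 0 until three positions are available. */
int history_acceleration(const struct history *h, int *accel)
{
    if (h->count < HISTORY_LEN)
        return 0;
    *accel = h->y[2] - 2 * h->y[1] + h->y[0];
    return 1;
}
```

Since only three values are ever stored, each new frame costs a couple of copies and one subtraction chain, which is what makes the calculation quick.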

Compiling the Program

After a good amount of online searching and experimentation, I finally got my Makefile to compile a working program. There is no audio output for my main program yet, but I am going to try out a different beat detection implementation, namely tracking the position of the hand and calculating acceleration, that bypasses the clunky gesture recognition. Hopefully, it will result in a simpler program and better performance.

Testing PortAudio

I don’t know what took me so long to do it, but I finally installed PortAudio so that I can actually use it in my prototype program. To make sure it works, I ran one of the example programs, “paex_sine”, which plays a sine wave for five seconds, and got the following output:

    esly14@mc-1:~/Documents/git/edward1617/portaudio/bin$ ./paex_sine
    PortAudio Test: output sine wave. SR = 44100, BufSize = 64
    ALSA lib pcm.c:2239:(snd_pcm_open_noupdate) Unknown PCM cards.pcm.rear
    ALSA lib pcm.c:2239:(snd_pcm_open_noupdate) Unknown PCM cards.pcm.center_lfe
    ALSA lib pcm.c:2239:(snd_pcm_open_noupdate) Unknown PCM cards.pcm.side
    bt_audio_service_open: connect() failed: Connection refused (111)
    bt_audio_service_open: connect() failed: Connection refused (111)
    bt_audio_service_open: connect() failed: Connection refused (111)
    bt_audio_service_open: connect() failed: Connection refused (111)
    Play for 5 seconds.
    ALSA lib pcm.c:7843:(snd_pcm_recover) underrun occurred
    Stream Completed: No Message
    Test finished.

I’m not entirely sure what is causing the errors to appear, but the sine wave still played just fine, so I’ll leave it alone for now unless something else happens along the way.

Now that I have all the libraries I need for my prototype program, all I need to do next is to make some changes to the demo program to suit my initial needs. I’ll also need to figure out how to compile the program once the code is done and then write my own Makefile.

Kinect v1 Setup

The new (or should I say, old) Kinect finally arrived today, and plugging it into one of the USB 2.0 ports gives me the following USB devices:

    Bus 001 Device 008: ID 045e:02bf Microsoft Corp.
    Bus 001 Device 038: ID 045e:02be Microsoft Corp.
    Bus 001 Device 005: ID 045e:02c2 Microsoft Corp.

. . . which is still not completely identical to what freenect is expecting, but more importantly, I was finally able to run one of the freenect example programs!

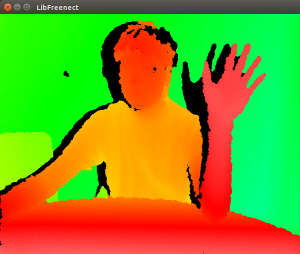

This is one-half of the freenect-glview program window, which shows the depth image needed to parse the body and subsequently the hand. I then dived into the tools that the XKin library provides, helper programs that let the user define the gestures that will be recognized by another program. With some experimentation, along with re-reading the XKin paper and watching the demo videos, I found out that the XKin gesture capabilities are more limited than I thought. You have to first close your hand to start a gesture, move your hand along some path, and then open your hand to end the gesture. Only then will XKin try to guess which gesture from the list of trained gestures was just performed. It is a bit of an annoyance since conductors don’t open and close their hands at all when conducting, but that is something that the XKin library can improve upon, and I know what I can work with in the meantime.

Program Design and Progress

While I am waiting for the Kinect to arrive in the mail, hopefully by tomorrow, I have been planning out the structure of my program and what exactly it is going to do. More will be added and revised as the gestures and musical output get more complex, but the foundation and the basic idea are, or at least should be, here.

Also, I was able to successfully push to my GitLab repository from my computer (the one I borrowed from the Turing lab) after adding an SSH key. Check out what I have so far!

Setup Complications Part 2

Thanks to Charlie, I added a 2-slot PCI Express USB 3.0 Card into the PC, and now instead of these devices from the Kinect:

    Bus 001 Device 006: ID 045e:02c4 Microsoft Corp.
    Bus 001 Device 003: ID 045e:02d9 Microsoft Corp.

I get these:

    Bus 004 Device 003: ID 045e:02c4 Microsoft Corp.
    Bus 004 Device 002: ID 045e:02d9 Microsoft Corp.
    Bus 003 Device 002: ID 045e:02d9 Microsoft Corp.

. . . which is unfortunately still not what I’m looking for when compared to what freenect expects. Not surprisingly, I still couldn’t run the example programs with the Kinect through the new ports either. So the next step is to wait for the v1 Kinect to arrive. I would start writing the program now, but I am hesitant to do so, since I would likely run into more problems if I’m not able to test the program at every step.

Setup Complications

My biggest fear for this project is not being able to set up the hardware and software libraries in such a way that they work together. In terms of installing the libraries, I ran into a few complications that I had to fix manually, but thankfully, there weren’t any major issues I couldn’t solve.

The hardware, however, is a different story, since I couldn’t get the Kinect to be detected by the example programs. It turns out that, according to a thread in the OpenKinect Google Group, the second version of the Kinect (v2), which is what the music department has now, actually sends infrared images through USB instead of the depth data that libfreenect expects to receive. Moreover, OpenKinect says that I should be seeing the following USB devices through the “lsusb” command:

    Bus 001 Device 021: ID 045e:02ae Microsoft Corp. Xbox NUI Camera
    Bus 001 Device 019: ID 045e:02b0 Microsoft Corp. Xbox NUI Motor
    Bus 001 Device 020: ID 045e:02ad Microsoft Corp. Xbox NUI Audio

Instead, I just get:

    Bus 001 Device 006: ID 045e:02c4 Microsoft Corp.
    Bus 001 Device 003: ID 045e:02d9 Microsoft Corp.

To complicate things even further, the v2 Kinect connects through USB 3.0, but the CS department computers only have USB 2.0 ports. We are currently looking for a USB 3.0 to 2.0 adapter to see if that changes anything, but I just ordered a v1 Kinect myself as a backup plan. Time is running short and I’m already falling behind schedule.

Project Design and Proposal

The current version of the proposal, which includes my revised thoughts from the survey paper as well as the design and timeline of the project:

Deadlines:

- October 26: Develop a preliminary test build for the application by learning a simple gesture and controlling the playback of a sawtooth wave.

- November 2: Add more complex gestures, particularly conductor gestures, and add more control over the sawtooth wave accordingly.

- November 9: Integrate JACK for routing gesture messages to LMMS for integration with VST instruments and synthesizers.

- November 16: Complete the first draft of the paper.

- November 23: Continue working on application. Complete outline for the poster.

- November 30 or December 4: Presentation

- December 12: Complete the second draft of the paper.

- December 16: Complete the final draft of the paper.

Next Steps

I’ve been trying to figure out which libraries and frameworks are best for developing my Kinect application on Linux, and without testing any of the libraries I’ve found for compatibility so far, the search has been really difficult. This paper provides one possible setup, using:

- openFrameworks for essential libraries such as OpenGL, a choice number of audio libraries, and a font library

- ofxOpenNI module, a wrapper for:

  - OpenNI, providing access to the Kinect device and extracting the video stream (unfortunately, the original website was shut down, but there is this site instead)

  - NITE, providing the skeleton tracking capabilities (also shut down with OpenNI)

  - SensorKinect

I’ll look for other papers that have developed Kinect applications and check which of these libraries are absolutely necessary, if at all.

UPDATE: libfreenect (to replace OpenNI) and XKin (to replace NITE) seem to be attractive open-source alternatives.

Literature Review

Topic: Gesture Recognition for Virtual Orchestra Conducting

Annotated Bibliography

Surveys various methods for gesture recognition and audio processing for the purpose of playing music through physical motion.

New Project Proposal

Now that I have a better understanding of what I want (and need) to do, here’s the first draft of my new plan:

Using the 3D motion tracking data of the Microsoft Kinect, our goal is to create a virtual conductor application that uses gesture recognition algorithms in order to detect beats and control the tempo and volume of the music. Care must also be taken in order to minimize the latency of the system from gestural input to audio output for the system to be suitable for live performance. We will be testing various beat detection algorithms proposed by other papers in order to determine which is best in terms of latency. Moreover, in regards to the audio playback itself, we will generate the desired music from appropriate synthesizers, further allowing for the possibility of live musical performance as well as the possibility for custom instrument creation and music composition.

Advisors: Charlie Peck and Forrest Tobey

Revised Project Proposal

. . ., but it may be revised again soon.

Our goal is to make a 3D rhythm game that would, among other possible applications, teach players the gestural motions of an orchestral conductor and act as a teacher for conducting music. The basic gameplay is that at certain points in the music, the game will show where the wand needs to be placed in 3D space and calculate score based on the distance between the intended position and the actual position of the wand. The gameplay would be comparable to the free game osu!, except no other inputs (e.g. mouse buttons) are required to play the game.

The project will consist of both software and hardware components, namely the game itself and a controller made specifically for said game, respectively. Currently, the game is planned to be built from scratch while including libraries such as OpenGL for a graphical interface and PortAudio for interacting with audio. Meanwhile, the required hardware may include just two infrared cameras/sensors as well as one infrared emitter on the tip of a wand for the cameras to detect. The reason for using infrared is to minimize any background interference that may occur when tracking a specific object as opposed to tracking by color.

Project Ideas

Some of this is copied and pasted from the email I sent last spring, but anyway, here are my two project ideas:

- Expanding on the research that I have been doing with Forrest, I hope to make a 3D rhythm game that just uses two Raspberry Pi infrared (NoIR) cameras as well as one infrared sensor on the tip of a wand for the cameras to detect. The basic gameplay is that at certain points in the music, the game will indicate where the wand needs to be placed in 3D space and calculate a score based on the distance between the intended position and the actual position of the wand. If I have to compare this to any other rhythm game out there, it would be osu!, since the wand would effectively be treated as a mouse cursor for the game to interact with, and the gameplay would look similar, but without the mouse clicking.

  In terms of development, I may start with one camera and a 2D game for ease of programming and a simpler user interface, and then transition to 3D afterwards using algorithms already discussed with Forrest and Jim Rogers to get coordinates in 3D space using the two cameras. If all of this goes well, I may add a second sensor and a second wand, so that either one person can control both wands for added difficulty, or two people can control one wand each for competitive or cooperative play. My goal for this is to make a game that is relatively cheap on hardware compared to most other rhythm games out there, and that can potentially teach players the gestural motions of a conductor or any other possible motions. For now, the player won’t be in direct control of the music, but that may be a feature that can be implemented further into the future, as part of the game or in a separate DAW application such as Ableton Live.

- I would like to learn more about data compression and the algorithms that go into it, especially in the context of compressing media files such as music, images, and video, and the difference in algorithms when using lossy or lossless compression. If there is an algorithm that can perform better (in terms of either running time or resulting file size) than what existing file formats currently provide, I can write a program that compresses files using said algorithm, either into a new file format or as an improvement to an existing one. If the compression is lossless, the program can also decompress the compressed files back to their original state.