Full Paper (Final Draft)

Here’s the final draft of my paper to finish off the semester:

Yesterday, I just changed the name of the executable file to “konductor” simply because I wanted the command to be a little more descriptive over just “main,” and all related files have also been updated to reflect the change. Unless something else happens, this will probably be my last program update for the semester.

Meanwhile, today is all about finishing my 2nd draft so that I can work on other projects due tomorrow.

I’ve been sick for the days I wanted to do the poster, so it’s substantially lower in quality than I wanted it to be and I haven’t submitted it for printing yet. I intend to do so before noon tomorrow.

Also because of illness, I have no other progress to report. Timeline:

Most of that is either on schedule or only slightly behind. The part of the project that will be set back most by this is the software component, which is in good enough shape that it is now lower priority than all of the other items.

work on running shell script to curl api url, also running them through arduino program

I have been mainly working on polishing up my presentation. I prepared the slides, rehearsed, and gave some finishing touches to Robyn.

work on testing access to arduino once online, SSHing to the arduino and running commands

Connecting the Arduino Yun to the internet was a huge pain: the Arduino can’t connect to ECsecure and has trouble connecting to ECopen

work on organizing hardware to be less messy and more compact,

Finalizing front-end application

first day of presentation

Work on backend api, building out the update function to take arguments

Final soldering day, adding safety sheaths to protect against electrocution

practice soldering with craig

Conversation with charlie on how to get stranded wires into bread board, began work on soldering with help of craig early

work on api and front end web application

work on placing appliance into container given by charlie, trying to get the curl command to work, runShellCommand not working in program

started work on presentation, finalizing curl working from inside arduino program

Work on presentation slides and presentation

Final presentation day, finalized slides and prepared presentation

I met with Charlie this morning. Due to feeling increasingly sick throughout the day I haven’t finished the poster as planned, but I know what needs to be added on Thursday – just a few hours of work on that day, print either that afternoon or first thing in the morning Friday.

Today, I tried getting rid of the sleep function from OpenCV that watches when a key has been pressed so that the program can quit safely. This is the only sleep function left in the program that could possibly interfere with the timekeeping functions in the program, and keyboard control is not necessarily a key part of the program either. However, I couldn’t find any other alternatives in the OpenCV reference; I was looking for a callback function that is invoked when a window is closed, but that doesn’t seem to exist in OpenCV.

Nevertheless, I have been continuing to work on my paper and add the new changes to it. Although, I don’t know if I’ll have time to finish testing the program before the poster is due on Friday.

Wanted to update to make that clear.

I hope that my only requirements in the final version relate to style and language: adding the appropriate academic structure, rephrasing sentences and paragraphs, maybe moving something here or there.

I hope not to need additional content, though it’s possible that some section will need an extra paragraph or two.

It’s certainly enough for the poster.

I have been extensively working on improving the front end of Robyn’s web interface and the Natural Language Processing aspect of Robyn. Trying to give some finishing touches to Robyn before the demo this Wednesday.

The paper was my project for the weekend. I had intended it to be the final version, in order to be done with it entirely before exams and other projects came due, but I will instead consider this version the second draft and submit it to that slot on Moodle.

More specific status of the paper:

It’s a good second draft. It would be a bad final draft.

This week I will accomplish three things:

Looking forward to others’ presentations on Wednesday.

Before I forget, here’s the link to the video of the demo I played in my presentation. This may be updated as I continue testing the program.

Today’s update mainly just consisted of a style change that moved all of my variables and functions (except the main function) to a separate header file so that my main.c file looks all nice and clean. I may separate all of the functions into their own groups and header files too as long as I don’t break any dependencies to other functions or variables.

Over Thanksgiving break I learned how to compile and modify the software for ffmpeg in Cygwin, a virtual Unix environment. This makes the process of modifying and compiling the code easier. Furthermore, I have been developing and implementing algorithms that use the conclusions from my experiment to better choose how many keyframes to allocate for different types of videos. I have also made the full results of my experiments available here.

John Doe, 555 merrly lane, 5.0

Jack Doe, 555 merrly lane, 3.0

Jane Doe, 555 merrly lane, 4.0

I did not do much work during Thanksgiving since I was travelling a lot. However, after coming back from the break I have spent a good amount of time every day working on my senior sem project. I have especially been working on making a web interface for the Python script of Robyn. Since I had not done much work with Python web frameworks, I had to learn quite a bit about these. After looking at several options like Django, CGI, Flask and Bottle, I decided to use Bottle since it has a relatively low barrier to learning and is lightweight, which is sufficient for Robyn and makes the system easier to set up as well. Today I finished the plumbing for the web interface, as well as the front end for Robyn.

Now, for the next couple of days I will work on the NLP part of Robyn.

Today’s program update was all about moving blocks of code around so that my main function is only six lines long, while also adding dash options (-m, -f, -h) to my program as a typical command would.

After looking through the XKin library for a little bit, and now that my presentation is done, I’ll soon try to implement XKin’s advanced method for getting hand contours and see if that helps make the centroid point of the hand, and thus the hand motions, more stable. In the meantime, though, my paper is in need of major updates.

Notes post-presentation, including a few features I need to complete the base version of the code:

Now I’m putting the project down until this weekend, to get some other work done. This weekend I will complete the software and paper. If there’s time I’ll do the poster.

I am shortly going to complete the presentation for this class and a quiz for another, but I made massive progress today. It is not perfect but it has enough functionality for a demo tomorrow (though because it’s not perfect I’ll spend some time tomorrow afternoon practicing).

What it can do:

The core functionality deserves its own bullet points:

What I may still be able to do between now and tomorrow’s class but can’t guarantee:

See my git commit messages here.

I’m not sure how much more work I’ll get done on this software this semester. I hope more, because it’s got a lot of promise and a good foundation, but I would be satisfied with this for purposes of the course grade given my other academic constraints.

I forgot that I also needed to compose an actual orchestral piece to demo my program with, so that’s what I did for the entire evening. I didn’t actually compose an original piece, but rather just took Beethoven’s Ode to Joy and adapted it for a woodwind quintet (since I don’t have time to compose for a full orchestra). Soon, I’ll actually test my program and try to improve my beat detection algorithm wherever I can.

I also tried adding the optional features into my program for a little bit, but then quickly realized they would take more time than I have, so I decided to ditch them. I did, however, add back the drawing of the hand contour (albeit slightly modified) to make sure that XKin is still detecting my hand and nothing but my hand.

Have made progress in training cascades for different emotions. Meeting with Dave tomorrow to determine implementation methods.

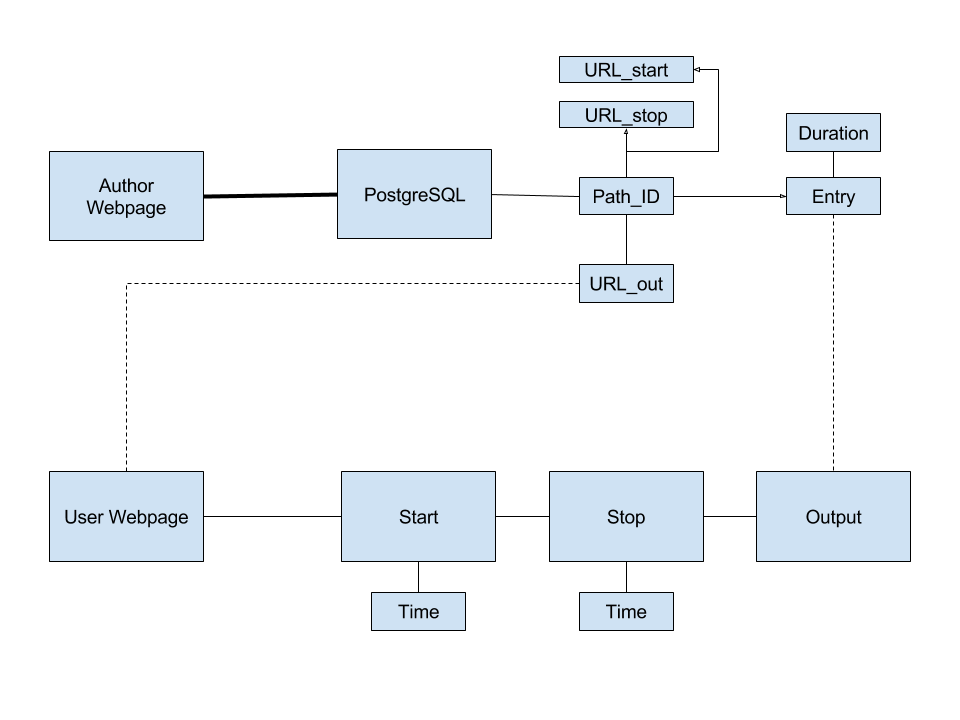

I solved multiple issues just by walking through this diagram …

… and it’s not even complete, I suspect. Subject to examination tomorrow morning.

Progress relative to what I anticipated: basically good. Details:

The biggest problem is that it does not (to use my metric from yesterday) “work, start->finish.” I’m planning to spend tomorrow much like today, except more emphasis on coding and testing. I will produce my presentation in the evening and demo what I have (which I will consider the final version, for purposes of this course) on Wednesday.

Also here’s a silly throwback to when I was initially doodling that diagram in Jot! for the iPad.

Thanksgiving is over. I exercised my prerogative to take a break and did no work in the last week. I also chose to give my final presentation this week, so I have a firm timetable that prioritizes this project (both code and paper) for the next week.

To wit:

There are a number of pitfalls I can foresee. If I fall short of completion by Wednesday, it will most likely be because one of the following proved more complex than anticipated:

In short, this week will be mostly devoted to this class (and the following two weeks will emphasize others).

Yesterday was a pretty big day as I was able to implement FluidSynth’s sequencer with fewer lines of code than I originally thought I needed. While the program is still not completely perfect, I was able to take the average number of clock ticks per beat and use that to schedule MIDI note messages that do not occur right on the beat. I may make some more adjustments to make the beat detection and tempo more stable, but otherwise, the core functionality of my program is basically done. Now I need to record myself using the program for demo purposes and measure beat detection accuracy for testing purposes.

Two other optional things I can do to make my program better are to improve the GUI by adding information to the window, and to add measure numbers to group beats together. Both serve to make the program and the music, respectively, a little more readable.

The past few days have been pretty uneventful, since I’m still feeling sick and I had to work on another paper for another class, but I am still reading through the FluidSynth API and figuring out how scheduling MIDI events works. FluidSynth also seems to have its own method of counting time through ticks, so I may be able to replace the current timestamps I have from <time.h> with FluidSynth’s synthesizer ticks.

Today, I found out how to automatically connect the FluidSynth audio output to the computer’s audio device input without having to manually make the connection in QjackCtl every time I run the program, and how to map each instrument to a specific channel. The FluidSynth API uses the “fluid_settings_setint” and “fluid_synth_program_select” functions, respectively, for such tasks. Both features are now integrated into the program, and I also allow the users to change the channel to instrument mapping as they see fit as well.

Now, the last thing I need to do to make this program a true virtual conductor is to incorporate tempo in such a way that I don’t have to limit myself and the music to eighth notes or longer anymore. Earlier today, Forrest also suggested that I use an integer tick counter of some kind to subdivide each beat instead of a floating point number. Also, for some reason, I’m still getting wildly inaccurate BPM readings, even without the PortAudio sleep function in the way anymore, but I may still be able to use the clock ticks themselves to my advantage. A simple ratio can easily convert clock ticks to MIDI ticks, but I still need to figure out how I can trigger note on/off messages after a certain number of clock ticks have elapsed.
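As a rough illustration of the ratio idea, here is a minimal Python sketch (the program itself is written in C). It assumes an average number of clock ticks per beat is already known; the function names and the resolution of 480 MIDI ticks per beat are hypothetical choices, not values taken from the program.

```python
def clock_to_midi_ticks(clock_ticks, clock_ticks_per_beat, midi_ticks_per_beat=480):
    """Scale elapsed clock ticks to MIDI ticks with a simple integer ratio.

    midi_ticks_per_beat=480 is an assumed resolution, not one from the program.
    """
    if clock_ticks_per_beat <= 0:
        raise ValueError("clock_ticks_per_beat must be positive")
    return (clock_ticks * midi_ticks_per_beat) // clock_ticks_per_beat


def due_events(events, last_tick, now_tick):
    """Return the (midi_tick, message) pairs that became due since last_tick."""
    return [event for event in events if last_tick < event[0] <= now_tick]


# Hypothetical schedule: a note that starts on the beat and ends half a beat later.
schedule = [(0, "note on"), (240, "note off"), (480, "note on")]
now = clock_to_midi_ticks(500, clock_ticks_per_beat=1000)
print(due_events(schedule, last_tick=0, now_tick=now))
```

Polling `due_events` once per iteration of the main loop is one way to trigger note on/off messages after a given number of clock ticks without a sleep call.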

Yesterday, I changed the velocity value of every offbeat to make it the same as the previous onbeat, since the acceleration at each offbeat tends to be much smaller and the offbeat notes would be much quieter than desired. This change, however, also made me realize that my current method of counting beats may be improved by incorporating tempo calculations, which I forgot about until now and which are not doing anything in my program yet. The goal would be to approximate when all offbeats (such as 16th notes) would occur using an average tempo instead of limiting myself to triggering notes precisely at every 8th note. While this method would not be able to react to sudden changes in tempo, it could be a quick way for my program to be open to all types of music and not just those with basic rhythms.
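The offbeat approximation could look something like the following Python sketch. It assumes beat timestamps in seconds are available; both function names are hypothetical, and averaging over all recorded beats is just one possible way to estimate the tempo.

```python
def average_beat_interval(beat_times):
    """Average time between consecutive detected beats, in seconds."""
    if len(beat_times) < 2:
        raise ValueError("need at least two beats to estimate the tempo")
    return (beat_times[-1] - beat_times[0]) / (len(beat_times) - 1)


def subdivision_times(last_beat_time, beat_interval, subdivisions=2):
    """Predict when the offbeats after the last detected beat should fall.

    With beats counted every 8th note, subdivisions=2 gives the 16th note
    between this beat and the next one.
    """
    step = beat_interval / subdivisions
    return [last_beat_time + i * step for i in range(1, subdivisions)]


beats = [0.0, 0.5, 1.0, 1.5]
interval = average_beat_interval(beats)
print(subdivision_times(beats[-1], interval, subdivisions=4))
```

Because the prediction only uses past beats, it lags behind a sudden tempo change by at least one beat, which matches the limitation noted above.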

Yesterday, I only had time to make relatively small changes to my program, but I did write more of the readme in more detail explaining how to use the program. Even more will be explained as I finish developing my program.

Also, I figured out that the reason for the bad points showing up so often was that part of the table was mistaken to be part of the hand, so I moved the Kinect camera a little higher, and sure enough, that issue was fixed. Now the only two important issues I have left to figure out are how to change the instrument that is mapped to each channel, and how the user will be allowed to change said mapping to fit the music. I may also try to figure out how to automatically connect the FluidSynth audio output to the device output of the computer, but I’m not completely sure if this is OS-specific.

I finished my draft yesterday and I am proofreading it today before submitting it. I am still also looking into integrating python scripts into a webpage.

In a shorter amount of time than I thought, I was not only able to add FluidSynth to my program, but also able to get working MIDI audio output from my short CSV files, although I had to configure JACK in such a way that all of the necessary parts are connected as well. For some reason, though, I’m seeing a lot more stuttering on the part of the GUI lately and the hand position readings aren’t so smooth anymore, and the beats suffer as a result. I’ll try figuring out the cause soon, but now I have a paper to finish.

I am spending today (Tuesday) writing the paper. I have now written all section and subsection headers that I need into it and started incorporating useful bits of the proposal as a baseline.

In an effort to produce a working version of the software, the following are my goals for the rest of the day:

This means that the following will be delayed for the second draft and the poster:

The final version of the paper will be a revision of the second draft, in which I will remove cruft rather than add content. The software version as of December 12, when the second draft is due, will be the last version of the software I work on for grading purposes in this class.

I intend to work minimally over break, so what I complete this week is likely all I will complete before the return the following week.

Working on the draft. Also worked on reorganizing my software directory for better structure.

I did a little more reading this weekend. Tomorrow (Monday) I’m occupied with meetings and classes, but I’m dedicating all day Tuesday to concluding my readings, completing the first draft, and (I hope) finishing iteration zero of the software.

Because of recent disruptions I am not keeping pace with my imagined progress from a few weeks ago, but Tuesday should catch me up enough that I can return from break and complete everything satisfactorily.

I have been working quite a bit on my program. I set up what I think will be my primary database for info related to diseases. I have also been updating my GitHub to reflect the updated state of my code. I ran into issues because the SQL script I was using was based on MySQL and had a lot of syntax that is incompatible with SQLite3, which is my database engine. After working through it in several passes, I was able to fix the script and create the DB.

I briefly removed the timestamps from my program, but I didn’t notice any change in performance any more, so I just left them in the program as before. I also made my program a little more interesting by playing random notes instead of looping through a sequence of notes, and changed the beat counter to increment every eighth note instead of every quarter note. The latter change will be important when I finally replace PortAudio with FluidSynth.

I also played around with the VMPK and Qsynth programs in Linux to refresh myself on how MIDI playback works and how I can send MIDI messages from my program to one of these programs. Thanks to that, I now have a better idea of what I need to do with FluidSynth to make Kinect to MIDI playback happen. I also plan to have a CSV file that stores the following values for the music we want to play:

beatNumber, channelNumber, noteNumber, noteOn/Off

The instruments that correspond to each channel will have to be specified beforehand too.
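To make the planned file layout concrete, here is a small Python sketch of reading such a file and grouping the events by beat. The column order follows the line above; encoding noteOn/Off as 1 or 0, skipping `#` comment lines, and the name `load_score` are all assumptions made for illustration.

```python
import csv
import io
from collections import defaultdict


def load_score(lines):
    """Group (channel, note, is_note_on) events by beat number.

    Assumes each row is: beatNumber, channelNumber, noteNumber, noteOn/Off,
    with the last column written as 1 (on) or 0 (off).
    """
    events = defaultdict(list)
    for row in csv.reader(lines):
        if not row or row[0].strip().startswith("#"):
            continue  # skip blank lines and comments
        beat, channel, note, on = (int(field) for field in row)
        events[beat].append((channel, note, bool(on)))
    return dict(events)


sample = io.StringIO("0,0,60,1\n1,0,60,0\n1,0,62,1\n")
score = load_score(sample)
for beat in sorted(score):
    print(beat, score[beat])
```

Grouping by beat number means the main loop only has to look up the current value of the beat counter to find the messages it should send.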

The past few days have been really rough on me, as I attended the Techpoint Xtern Finalist reception all day yesterday, all while being sick with a sore throat and cold from the freezing weather recently. On a positive note, I used my spare time to continue writing my rough draft, so there wasn’t too much time lost.

Back to my program, I’ve added a number of features/improvements to it, the first one being timestamps for every recorded position of the hand so that I can actually calculate velocity and acceleration using time. I did notice that the “frame rate” of the program dropped as a result, so I may try to reduce the number of timestamps later and minimize their usage. I also made sure that all of the points that fall outside the window are discarded to lessen the effect of reading points nowhere near the hand. This also means checking the distance between two consecutive points and discarding the last point if the distance is above some impossible value. I also use distance to make sure there are no false positives when the hand is still, so that the hand has to move a certain distance in order to register a beat. A number of musical functions have also been added for future use, such as converting a MIDI note number to the corresponding frequency of the sound.
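Two of these pieces are easy to sketch in Python. The note-to-frequency conversion uses the standard equal-temperament relation, with MIDI note 69 tuned to A4 = 440 Hz. The point filter is only a guess at the shape of the check described above; `is_plausible` and its parameters are hypothetical names.

```python
import math


def midi_note_to_frequency(note, a4_hz=440.0):
    """Equal-temperament frequency in Hz for a MIDI note number (69 = A4)."""
    return a4_hz * 2.0 ** ((note - 69) / 12.0)


def is_plausible(previous, current, width, height, max_jump):
    """Reject points outside the window or implausibly far from the last one.

    max_jump is an assumed tuning parameter, measured in pixels.
    """
    x, y = current
    if not (0 <= x < width and 0 <= y < height):
        return False
    if previous is None:
        return True
    return math.hypot(x - previous[0], y - previous[1]) <= max_jump


print(midi_note_to_frequency(69))
print(is_plausible((10, 10), (600, 400), 640, 480, max_jump=100))
```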

Got facedetect.cpp to compile correctly. Now working on testing it with data provided by openCV.

I have been working on trying to find database for diseases for Robyn.

I didn’t accomplish anything the first few days of the week. Today I want to read some more sources and finalize the paper topic. I may start incorporating the survey paper into the draft, so I can start building up the page count.

I have been working on my bot. I decided to name my bot Robyn. I also created a repo in my github (github.com/arai13) for Robyn and have started taking snapshots on a regular basis.

Yesterday, as suggested by Forrest, I added the ability to calculate the current tempo of the music in BPM based on the amount of time in between the last two detected beats. It doesn’t attempt to ignore any false positive readings, and it doesn’t take into account the time taken up by the sleep function, but it’s a rough solution for now.
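The calculation itself is just 60 divided by the number of seconds between the two beats. A minimal Python sketch, assuming timestamps in seconds and using a hypothetical function name:

```python
def bpm_from_beats(previous_beat_time, current_beat_time):
    """Tempo in beats per minute from the last two beat timestamps (seconds)."""
    interval = current_beat_time - previous_beat_time
    if interval <= 0:
        raise ValueError("beats must be strictly increasing in time")
    return 60.0 / interval


print(bpm_from_beats(10.0, 10.5))
```

Like the version described above, this does nothing to filter out false positives, so a single spurious beat produces a single wildly wrong reading.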

Now, the next major step I am hoping to take with this program is to use the beats to play some notes through MIDI messages. I am searching for libraries that will allow me to send MIDI note on/off messages to some basic synthesizer, and FluidSynth looks to be a decent option so far.

I am continuing the tests that I mentioned in the previous update and adding them to the graph.

I spent Monday running more tests with ffmpeg to get better data. I am now forcing a specific number of prediction frames over a regular interval.

Today I opened up the extension cord to see what I was going to be working with. I expected two solid pieces of copper; instead, I found many very skinny strands. I will need to consult with Kyle about how to go about working from this point on.

Data collection and work with data to determine the exact resistor values I will need for the circuit, paid close attention to power dissipation

Communicated with kyle to work on circuit board online with circuit.io, made good progress

finalized circuit board online

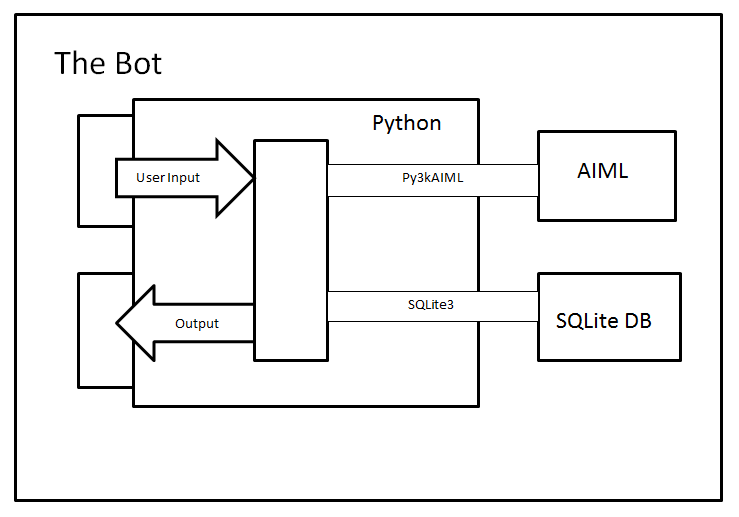

So after playing around and exploring for a bit, I have finally chosen my final set of tools for the project. I will be using Python, AIML, SQLite with Py3kAIML and sqlite3 libraries. I was able to finish the plumbing and now have a very basic bot that can listen to the user, fetch data from the SQLite database and print the result. Now that I have the main tools I will be using, the design of the system will be the following:

Today, I added the ability to change the volume of the sound based on the acceleration value, or how quickly the hand is moved, as well as change the frequency of the sound and thus change the note being played using a simple beat counter. I also noticed that the beat detection works almost flawlessly while I make the conductor’s motions repeatedly, which is a good sign that my threshold value is close to the ideal value, if there is one.

Now that the beat detection is working for the most part, the next thing I need to do is to figure out how to take these beats and either: a) convert them to MIDI messages, or b) route them through JACK to another application. Whichever library I find and use, it has to be one that doesn’t involve a sleep function that causes the entire program to freeze for the duration of the sleep.

November 2 – November 4

Since I figured that understanding Android development would take more time than I expected, I decided to speed up the development process by using Cordova as my development platform. I installed Cordova on my computer and started integrating the Wikitude API into it.

November 5 – November 6

I took an actual campus tour on the family weekend to see how the guides walk the guests through the campus of Earlham and to see it from a visitor’s perspective. It was really helpful, and I collected some information for the application.

November 7

I started working with GPS locations and image recognition.

Nothing new from this weekend. Today I’m considering the IO of the URLs for the software and, if that goes well, writing the code to create the visual interface. I’ll outline the paper and gather final reading material tomorrow. At the end of the day tomorrow I’ll post a comprehensive update.

Downloaded Android Studio to begin learning Android development.

I’ve been working on making an outline for the first draft of the paper.

I added the PortAudio functions necessary to enable simple playback and revised my beat detection algorithm to watch for both velocity and acceleration. My first impression of the application so far is that the latency from gesture to sound is pretty good, but I noticed that the program freezes while the sound is playing (due to the Pa_Sleep function, which controls the duration of the sound). This freezes the GUI and could potentially mess up the velocity and acceleration readings as well. False positives or false negatives in the beats can also occur depending on the threshold value, and the detection algorithm still needs more improvement to prevent them as much as possible.

Created cluster account and got the sample files from OpenCV copied to the cluster. Need to learn how to use qsub to compile programs.

I am still looking into setting up the architecture with Python.

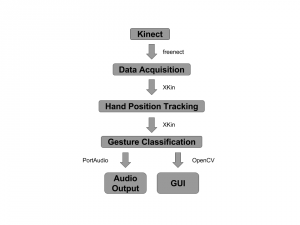

Here’s the current version of the flow chart of my program design, although it will surely be revised as the program is revised.

I’ve also been thinking about how exactly the tracking of velocity and acceleration is going to work. At a bare minimum, I believe what we specifically want to detect is when the hand moves from negative velocity to positive velocity along the y-axis. A simple switch can remember when the velocity was previously negative and trigger a beat when the velocity turns positive (or rises above some threshold value to prevent false positives) during each iteration of the main program loop. The amount of acceleration at that point, or how fast the motion is being done, can then determine the volume of the music at that beat.
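The switch described here can be sketched in a few lines of Python. The class name and the default threshold are hypothetical, and the sign convention (which direction counts as negative) depends on how the y-axis is oriented in the depth image, so treat that as an assumption too.

```python
class BeatDetector:
    """Trigger a beat when vertical velocity turns from negative to positive."""

    def __init__(self, threshold=0.0):
        self.threshold = threshold    # minimum positive velocity for a beat
        self.was_negative = False     # the "switch": set while moving negatively

    def update(self, velocity_y):
        """Feed one velocity sample; return True if this sample is a beat."""
        if velocity_y < 0:
            self.was_negative = True
            return False
        if self.was_negative and velocity_y > self.threshold:
            self.was_negative = False  # re-arm only after the next negative motion
            return True
        return False


detector = BeatDetector(threshold=1.0)
print([detector.update(v) for v in [-3, -1, 0.5, 2, 3, -2, 4]])
```

Resetting the switch after each beat means a long positive motion registers only once, and small positive wobbles below the threshold are ignored.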

For the last couple of days I’ve been walking through a simple Chrome extension, HexTab, the source code of which is open and published on GitHub. The functionality is nothing like mine, but it has the virtues of …

I would like to start hollowing out this code soon, but I’m spending a while reading the code first.

I have spent the last week split between three different tasks: starting to chart the Twitter ER diagram, following the O’Reilly Social Media Mining book to continue to learn about harvesting through APIs, and reopening my database systems textbook to remind myself how views work.

When I spoke with Charlie last week, we discussed the possibility of using views to select relevant tables from the larger Facebook and Twitter structures. This would create a model that is easily modifiable and a combination of the two existing models, rather than trying to force the models themselves together into a new, rigid model.

Work on the Twitter model is coming along, and I hope to be done by the end of this week.

I spent today reading through more of the documentation for ffmpeg to learn more about its structure and the commands it supports.

I have been working on the outline for the draft.

Went to Home Depot and found a very helpful person who was knowledgeable in electronics. I have decided to use an extension cord as the backbone of my non-invasive device. I will plug both the power source of the Arduino and the washing machine into the extension cord, then open up the extension cord to read the voltage of the washing machine. I am talking to Kyle about different techniques with resistors to bring the voltage down to a readable amount and then using the Arduino’s built-in 0-5V reader to read the voltage. Began working with online breadboard and Arduino simulators to begin testing.

Small update today since I have other assignments I need to finish.

I implemented a simple modified queue that stores the last few recorded positions of the hand in order to quickly calculate acceleration. I also learned a bit more about the OpenCV drawing functions and was able to replace drawing my hand itself on the screen with drawing a line trail showing the position and movement of the hand. Those points are all we care about and that makes debugging the program a little bit easier.
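A Python sketch of the same idea, using a fixed-length queue and finite differences. It assumes each stored sample carries a timestamp along with the y position; `MotionTracker` and its methods are hypothetical names rather than the ones used in the program.

```python
from collections import deque


class MotionTracker:
    """Keep the last few (time, y) samples and estimate acceleration from them."""

    def __init__(self, size=3):
        # Three samples are the minimum needed for a second difference.
        self.samples = deque(maxlen=max(size, 3))

    def add(self, t, y):
        self.samples.append((t, y))  # the oldest sample drops off automatically

    def acceleration(self):
        """Change in velocity over the latest interval, or None if unavailable."""
        if len(self.samples) < 3:
            return None
        (t0, y0), (t1, y1), (t2, y2) = list(self.samples)[-3:]
        if t1 == t0 or t2 == t1:
            return None  # duplicate timestamps would divide by zero
        v1 = (y1 - y0) / (t1 - t0)
        v2 = (y2 - y1) / (t2 - t1)
        return (v2 - v1) / (t2 - t1)


tracker = MotionTracker()
for t, y in [(0.0, 0.0), (1.0, 1.0), (2.0, 4.0)]:
    tracker.add(t, y)
print(tracker.acceleration())
```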

Presentation went well today. No project updates, except that I’ve decided that I hope to complete a working software version by the end of November so I can focus on the paper during December. (This will not interfere with completing the first draft by class in two weeks.)

I have placed some of my early data in various spreadsheets. I am continuing the process of collecting data, and am ready to use the information I have so far and the observations I have made to start the first draft of my paper.

I have been gathering more data to find the optimal number of keyframes for various types of videos. The videos with larger file sizes take a long time to compress.

I have decided to implement the AIML, Python, MySQL architecture and have been looking at setting up an environment to run them all.

Couldn’t get OpenCV to install properly on my laptop so I asked the CS admins to create a cluster account for me. By sshing to the cluster I will be able to use OpenCV. Tomorrow I expect to get the facedetect sample to compile and run. From there I can start working on implementing the emotion code.

After a good amount of online searching and experimentation, I finally got my Makefile to compile a working program. There is no audio output for my main program yet, but I am going to try out a different beat detection implementation that bypasses the clunky gesture recognition (namely tracking the position of the hand and calculating acceleration), and hopefully, it will result in simpler and better performance.

Heading to Home Depot to talk to someone who knows about voltage splitters or where else to measure voltage from. Here’s hoping someone knows something.

Just wrapping the wires around the cord doesn’t work, and neither does attaching the wires to the prongs of the plug. I am thinking about going to an electrician or Home Depot to find someone who knows where to measure the voltage.

With measuring voltage figured out, I have moved on to determining where to attach the wires to measure the voltage.

work on voltage monitoring, found 2 ways to determine voltage, the first measures 0-5V, the second measures higher voltages using voltage dividers and multiple resistors.
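For the second method, the arithmetic of a two-resistor voltage divider can be sketched in Python as below. This covers only the calculation for a DC input; the resistor values are made-up examples, and the 10-bit reading (0 to 1023) against a 5 V reference is an assumption about how the Arduino's analog input is being used.

```python
def divider_output(v_in, r_top, r_bottom):
    """Voltage across r_bottom in a two-resistor divider: Vin * R2 / (R1 + R2)."""
    return v_in * r_bottom / (r_top + r_bottom)


def input_from_reading(adc_value, r_top, r_bottom, v_ref=5.0, adc_max=1023):
    """Recover the divider's input voltage from a raw analog reading.

    Assumes a 10-bit reading (0..adc_max) measured against v_ref volts.
    """
    v_out = adc_value / adc_max * v_ref
    return v_out * (r_top + r_bottom) / r_bottom


# Hypothetical resistor values: 3 kOhm on top, 1 kOhm on the bottom.
print(divider_output(12.0, 3000, 1000))
print(input_from_reading(1023, 3000, 1000))
```

With these example values the divider scales the input by one quarter, so a full-scale reading corresponds to a 20 V input.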

I don’t know what took me so long to do it, but I finally installed PortAudio so that I can actually use it in my prototype program. To make sure it works, I ran one of the example programs, “paex_sine”, which plays a sine wave for five seconds, and got the following output:

esly14@mc-1:~/Documents/git/edward1617/portaudio/bin$ ./paex_sine
PortAudio Test: output sine wave. SR = 44100, BufSize = 64
ALSA lib pcm.c:2239:(snd_pcm_open_noupdate) Unknown PCM cards.pcm.rear
ALSA lib pcm.c:2239:(snd_pcm_open_noupdate) Unknown PCM cards.pcm.center_lfe
ALSA lib pcm.c:2239:(snd_pcm_open_noupdate) Unknown PCM cards.pcm.side
bt_audio_service_open: connect() failed: Connection refused (111)
bt_audio_service_open: connect() failed: Connection refused (111)
bt_audio_service_open: connect() failed: Connection refused (111)
bt_audio_service_open: connect() failed: Connection refused (111)
Play for 5 seconds.
ALSA lib pcm.c:7843:(snd_pcm_recover) underrun occurred
Stream Completed: No Message
Test finished.

I’m not entirely sure what is causing the errors to appear, but the sine wave still played just fine, so I’ll leave it alone for now unless something else happens along the way.

Now that I have all the libraries I need for my prototype program, all I need to do next is to make some changes to the demo program to suit my initial needs. I’ll also need to figure out how to compile the program once the code is done and then write my own Makefile.

Accomplished since 10/28 post:

Next to work on:

I worked on an architecture for my program which is based on AIML with Python and MySQL in the backend.

I have been looking at different ways to integrate a database into AIML

I’m still trying to get the libraries for OpenCV installed in order to compile facedetect.cpp.

Continuing to gather data to evaluate ffmpeg.

Continuing to work on gathering data for the speeds and compression ratios of ffmpeg.

Currently still trying to compile the OpenCV facedetect.cpp file from the samples directory. I keep getting an error saying it cannot locate the libraries in the OpenCV.pc file. I am trying to get this resolved as soon as possible so I can use that program and begin working on the emotion detection portion of the project.

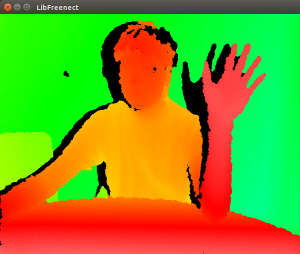

The new (or should I say, old) Kinect finally arrived today, and plugging it into one of the USB 2.0 ports gives me the following USB devices:

Bus 001 Device 008: ID 045e:02bf Microsoft Corp.
Bus 001 Device 038: ID 045e:02be Microsoft Corp.
Bus 001 Device 005: ID 045e:02c2 Microsoft Corp.

. . . which is still not completely identical to what freenect is expecting, but more importantly, I was finally able to run one of the freenect example programs!

This is one-half of the freenect-glview program window, which shows the depth image needed to parse the body and subsequently the hand. I then dived into the tools that the XKin library provides, helper programs that let the user define the gestures that will be recognized by another program. With some experimentation, along with re-reading the XKin paper and watching the demo videos, I found out that the XKin gesture capabilities are more limited than I thought. You have to first close your hand to start a gesture, move your hand along some path, and then open your hand to end the gesture. Only then will XKin try to guess which gesture from the list of trained gestures was just performed. It is a bit of an annoyance since conductors don’t open and close their hands at all when conducting, but that is something that the XKin library can improve upon, and I know what I can work with in the meantime.
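The close, move, open flow described above can be pictured as a small state machine. The following is only a sketch of that idea in Python, not XKin's actual API: the function name and the `(hand_closed, position)` frame format are assumptions made for illustration.

```python
def segment_gestures(frames):
    """Split a stream of (hand_closed, position) frames into gesture paths.

    A gesture starts when the hand closes and ends when it opens again;
    only positions recorded while the hand is closed belong to the path.
    """
    gestures = []
    current = None  # None means no gesture is in progress
    for hand_closed, position in frames:
        if hand_closed:
            if current is None:
                current = []  # hand just closed: start a new path
            current.append(position)
        elif current is not None:
            gestures.append(current)  # hand just opened: the gesture is complete
            current = None
    return gestures
```

A path that is still open when the stream ends is dropped, which mirrors the limitation noted above: nothing can be classified until the hand opens.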

I spent this week charting out ER diagrams for a Facebook database schema. A lot of this work involved converting DDL statements I found online into a class diagram, and understanding how the classes related to each other. I am now at a point where I understand the entities and their relationships, and the next step is figuring out which of these entities I care about for my project.

I have also been using Mining The Social Web. This book is an overview of data mining popular websites such as Twitter, Facebook, and (interestingly as a social media site), LinkedIn. It even touches on the semantic web and the not-so-popular Google Buzz. Each area is covered with explanations on how to set up programs, a brief introduction to and explanation on the workings of the API, some examples of mining code and a couple of suggestions on how to use it.

I plan to use the data I am learning to harvest through these APIs to test and iteratively hone my data model. I’m currently working on charting out the ER diagram for Twitter, although this is proving trickier than its Facebook counterpart because I’ve only been able to find fragments of the model in different places.

Catching up after traveling last week, I focused mostly on procedural bits for the project: opening an Overleaf project for the paper and getting the formatting/section headers right, researching Chrome extensions, and drawing some diagrams (about which more later).

I will focus on building up the paper for the next week. I also need to complete the IRB form (I’m about halfway through now) and the software design, which I intend to do by the time of the next class. Again see here for detailed timeline.

I have begun work on testing how long it takes ffmpeg to compress certain files, and how effectively it compresses files at certain key frame sizes.
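A measurement like this could be scripted roughly as follows. This is a sketch under assumptions: it reads "key frame size" as the keyframe interval that ffmpeg sets with its `-g` option, it assumes `ffmpeg` is on the PATH, and the function names are made up for illustration.

```python
import os
import subprocess
import time


def build_ffmpeg_command(src, dst, keyframe_interval):
    """Build an ffmpeg command that re-encodes src with a fixed keyframe interval."""
    return [
        "ffmpeg",
        "-y",                          # overwrite dst if it already exists
        "-i", src,                     # input file
        "-g", str(keyframe_interval),  # GOP size: max frames between key frames
        dst,
    ]


def compression_ratio(original_bytes, compressed_bytes):
    """Original size divided by compressed size; larger means better compression."""
    if compressed_bytes <= 0:
        raise ValueError("compressed size must be positive")
    return original_bytes / compressed_bytes


def time_compression(src, dst, keyframe_interval):
    """Run ffmpeg once and return (seconds elapsed, compression ratio)."""
    command = build_ffmpeg_command(src, dst, keyframe_interval)
    start = time.perf_counter()
    subprocess.run(command, check=True, capture_output=True)
    elapsed = time.perf_counter() - start
    return elapsed, compression_ratio(os.path.getsize(src), os.path.getsize(dst))
```

Calling `time_compression` in a loop over several interval values (with hypothetical input and output file names) would give one timing and one ratio per setting.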

I have also been working on compiling the program’s source code so I can work on modifications, but I haven’t yet succeeded at that.

Currently working on tagging the individual data fields in each message entry, and saving the newly tagged tweets to a new directory.

Made progress towards getting OpenCV to compile sample code.

While I am waiting for the Kinect to arrive in the mail, hopefully by tomorrow, I have been planning out the structure of my program and what exactly it is going to do. More will be added and revised as the gestures and musical output get more complex, but the foundation and the basic idea is, or at least should be, here.

Also, I was able to successfully push to my Gitlab repository from my computer (the one I borrowed from the Turing lab) after adding an SSH key. Check out what I have so far!

Working with the AIML tutorial at https://playground.pandorabots.com/en/tutorial/.

continued work on measuring voltage on arduino

After further researching open-source projects and tools that are available to me, I have decided that I will instead focus on ffmpeg. It is similar to Xvid in the sense that it is an open-source project that provides codecs for compressing and decompressing data, but it has better documentation and seems easier to work with.

I have also obtained several sample files and have begun experimenting with how well ffmpeg compresses them. In order to test its compression algorithms as thoroughly as possible, I have many different types of videos to test on. One video is a black screen, and it compresses quite nicely, which makes sense given that there is little randomness in the video. Another video, which involves confetti falling, compresses poorly, since the video is much less predictable. I plan to continue to experiment to see what ffmpeg excels at and struggles with, and I will study and evaluate its source code.
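The link between predictability and compressibility can be seen with a much simpler tool. The sketch below uses Python's general-purpose `zlib` module, not ffmpeg or any video codec, so it is only an analogy: uniform bytes stand in for the black screen and random bytes stand in for the confetti.

```python
import os
import zlib


def zlib_ratio(data):
    """Return original size divided by zlib-compressed size."""
    return len(data) / len(zlib.compress(data))


size = 1_000_000
uniform = bytes(size)        # all zero bytes, like a frame of solid black
noisy = os.urandom(size)     # random bytes, a rough stand-in for confetti

print(f"uniform data ratio: {zlib_ratio(uniform):.1f}")
print(f"random data ratio:  {zlib_ratio(noisy):.2f}")
```

The uniform input should shrink dramatically, while the random input should stay at roughly its original size.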

I played around with the Wikitude and Vuforia SDKs and tested the sample examples that they gave. The next step would be testing how well each platform can recognize the target image. I have talked with Xunfei about how I should test these in certain scenarios, such as when there are multiple recognizable objects in the view of the camera.

I will also start collecting important data and information that would be superimposed onto the screen when the object is recognized. Implementation of the application will be started shortly after I compare the test results of the two SDKs and decide which SDK to use.

Researched methods for voltage and current monitoring with Arduino and further experimented with Arduino programming on existing sensors.

Never done it before, but Google’s guide here is a good start.

I was traveling late last week with some Physics research students, so my accomplishments for this project this time around are sparser than in the past. I’ll sit down tomorrow or Thursday to sketch out a design on whiteboard for how the process should work.

It looks straightforward enough to get started. That’s good, both because in the short time available I can implement something and because, if I have to change to a webpage or the like, I will likely be able to preserve some of the design without having been too bogged down in details.

Some other notes:

Thanks to Charlie, I added a 2-slot PCI Express USB 3.0 Card into the PC, and now instead of these devices from the Kinect:

Bus 001 Device 006: ID 045e:02c4 Microsoft Corp.
Bus 001 Device 003: ID 045e:02d9 Microsoft Corp.

I get these:

Bus 004 Device 003: ID 045e:02c4 Microsoft Corp.
Bus 004 Device 002: ID 045e:02d9 Microsoft Corp.
Bus 003 Device 002: ID 045e:02d9 Microsoft Corp.

. . . which is unfortunately still not what I’m looking for when compared to what freenect expects. Not surprisingly, I still couldn’t run the example programs with the Kinect through the new ports either. So the next step is to wait for the v1 Kinect to arrive. I would start writing the program now, but I am reluctant to, since I would likely run into more problems without being able to test the program at every step.

My biggest fear for this project is not being able to set up the hardware and software libraries in such a way that they work together. In terms of installing the libraries, I ran into a few complications that I had to fix manually, but thankfully, there weren’t any major issues I couldn’t solve.

The hardware, however, is a different story, since I couldn’t get the Kinect to be detected by the example programs. It turns out that according to a thread in the OpenKinect Google Group, the second version of the Kinect (v2), which is what the music department has now, actually sends infrared images through USB instead of the depth data that libfreenect expects to receive. Moreover, OpenKinect says that I should be seeing the following USB devices through the “lsusb” command:

Bus 001 Device 021: ID 045e:02ae Microsoft Corp. Xbox NUI Camera
Bus 001 Device 019: ID 045e:02b0 Microsoft Corp. Xbox NUI Motor
Bus 001 Device 020: ID 045e:02ad Microsoft Corp. Xbox NUI Audio

Instead, I just get:

Bus 001 Device 006: ID 045e:02c4 Microsoft Corp.
Bus 001 Device 003: ID 045e:02d9 Microsoft Corp.
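Comparing the two listings by eye gets tedious, so a check like this could be automated. The sketch below is only an illustration: the expected IDs are copied from the OpenKinect listing above, and the function names are made up. It parses `lsusb`-style text rather than calling `lsusb` itself.

```python
import re

# USB IDs that OpenKinect lists for a v1 Kinect (taken from the lsusb output above).
EXPECTED_KINECT_V1_IDS = {
    "045e:02ae": "Xbox NUI Camera",
    "045e:02b0": "Xbox NUI Motor",
    "045e:02ad": "Xbox NUI Audio",
}

ID_PATTERN = re.compile(r"ID ([0-9a-f]{4}:[0-9a-f]{4})", re.IGNORECASE)


def usb_ids(lsusb_output):
    """Extract the set of vendor:product IDs from lsusb text."""
    return {match.lower() for match in ID_PATTERN.findall(lsusb_output)}


def missing_kinect_devices(lsusb_output):
    """Return the names of expected Kinect v1 devices absent from the output."""
    found = usb_ids(lsusb_output)
    return sorted(name for usb_id, name in EXPECTED_KINECT_V1_IDS.items()
                  if usb_id not in found)


v2_output = """\
Bus 001 Device 006: ID 045e:02c4 Microsoft Corp.
Bus 001 Device 003: ID 045e:02d9 Microsoft Corp.
"""
print(missing_kinect_devices(v2_output))
```

With the v2 listing, all three expected devices are reported as missing; an empty list would mean the hardware matches what libfreenect expects.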

To complicate things even further, the v2 Kinect connects through USB 3.0, but the CS department computers only have USB 2.0 ports. We are currently looking for a USB 3.0 to 2.0 adapter to see if that changes anything, but I just ordered a v1 Kinect myself as a backup plan. Time is running short and I’m already falling behind schedule.

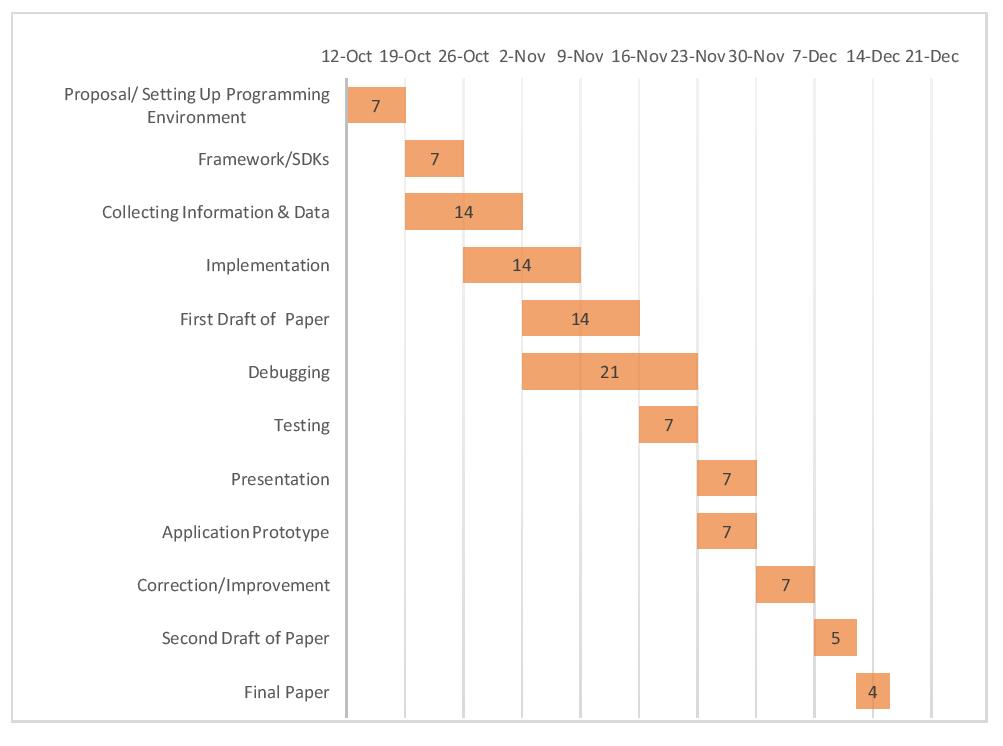

This is my estimated timeline for this semester.

I have also included my literature review and project proposal.

Literature review – LiteratureReview_SawYan

Project Proposal – Proposal_SawYan

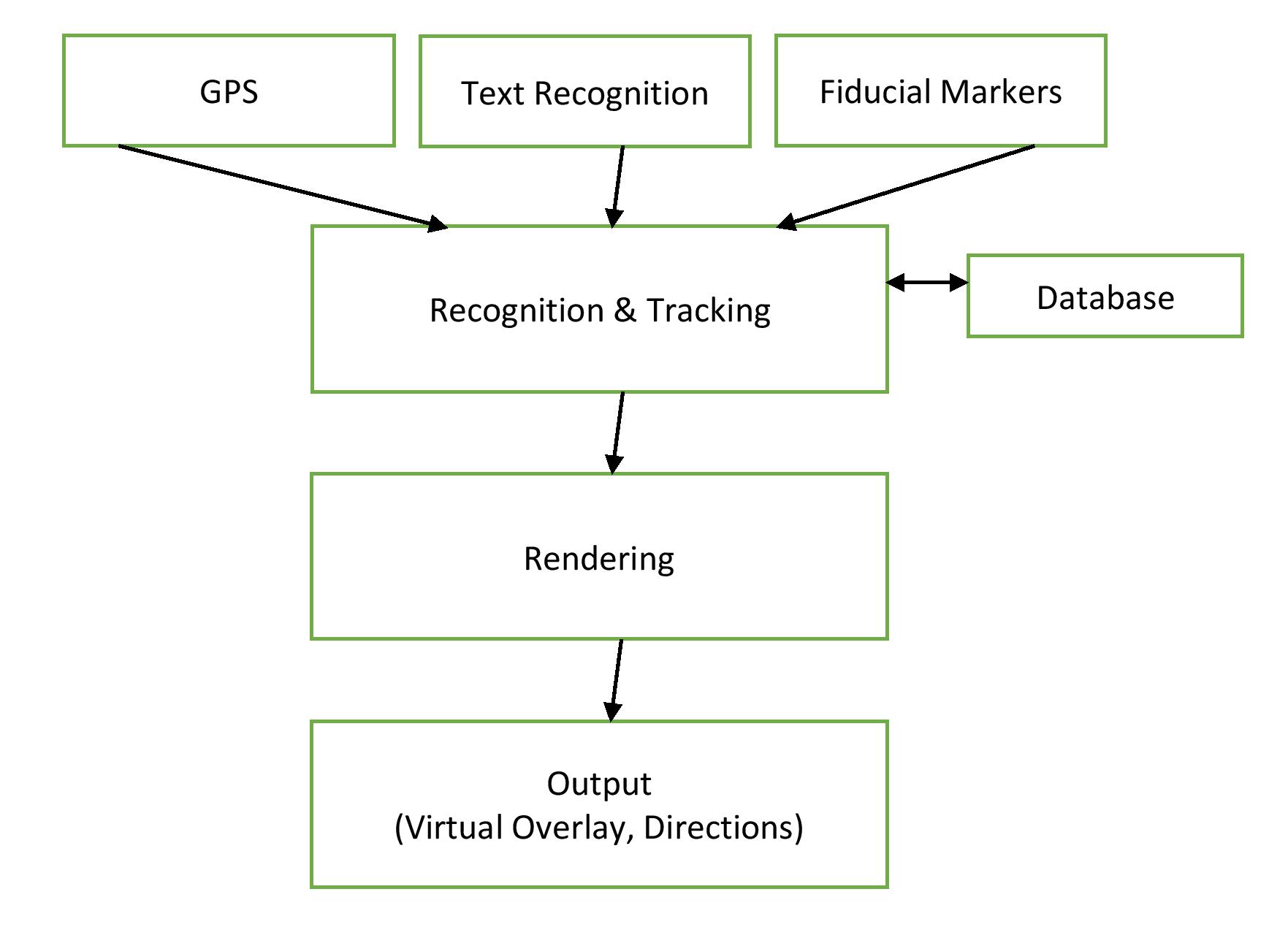

The following is the design flowchart for EARL: mobile app for better campus experiences. Input information will come from GPS, text and markers when the device recognizes them, and it will be fed into the device for tracking. Then the device will look for the relevant virtual overlay for that object in the database. After finding the correct one, the application will render the virtual information and show it on the screen as an output.

I have completed my project proposal and PowerPoint. Below is the timeline I have constructed for my project.

I’ve obtained an Arduino Uno board and have been working and messing around with the sensors given to me.

Also, I have obtained a Watts up meter and have watched and measured the voltage used during different cycles and on different machines. I have arrived at the conclusion that any voltage over 100V indicates that a machine is running.
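That rule is simple enough to write down directly. The sketch below is an assumption about how it might look in software, not the code running on the Arduino: the function names are hypothetical and the readings are invented for illustration.

```python
RUNNING_THRESHOLD_VOLTS = 100.0  # cutoff taken from the measurements described above


def is_running(reading_volts, threshold=RUNNING_THRESHOLD_VOLTS):
    """A machine counts as running when the reading is above the threshold."""
    return reading_volts > threshold


def finished_cycles(readings):
    """Return the indices where a machine goes from running to not running."""
    finished = []
    was_running = False
    for index, reading in enumerate(readings):
        running = is_running(reading)
        if was_running and not running:
            finished.append(index)
        was_running = running
    return finished


# Made-up readings for illustration only, not measured data.
sample = [2.0, 3.1, 118.5, 119.0, 117.2, 4.0, 2.5]
print(finished_cycles(sample))
```

Detecting the drop back below the threshold is the moment a "machine is done" notification could be sent.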

Up next is getting a voltage or current sensor for the Arduino board and working to connect the board to the wifi.

The current version of the proposal, which includes my revised thoughts from the survey paper as well as the design and timeline of the project:

Deadlines:

First, the documents.

The proposal outlines some research on HCI, in addition to a proposed browser extension (Chrome) to facilitate easier interface comparison tests on the scale of academic and independent developers. I will complete an IRB form and a (visual) sketch of the software logic for upload by the time of the next class.

Revised timeline, copied from the proposal:

These, as always, are subject to revision.

The GeoBurst algorithm detects local news events by looking for spatiotemporal ‘bursts’ of activity. This cluster analysis uses methods which look at geo-tag clusters of phrases.

Phrase network analysis has historically been able to link user clouds; however, the use of GPS in mobile devices has led many users of social media to indicate their whereabouts on a reliable basis. Clusters appear not only in the spatial proximity of phrases, but also in their temporal proximity. These clusters are compared to a recent history which is sampled from a ‘sliding frame’ of historic phrases.
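To make the ‘sliding frame’ comparison concrete, here is a heavily simplified sketch. It is not the GeoBurst algorithm: it ignores the spatial side entirely and just scores how far a phrase's count in the current time window departs from its counts in the preceding windows. The function name, the window format and the z-score measure are all assumptions chosen for illustration.

```python
from collections import Counter, deque
from statistics import mean, pstdev


def burst_scores(windows, history_size=3):
    """Score each phrase in each time window against a sliding frame of history.

    windows: list of lists of phrases, one inner list per time window.
    Returns a list of {phrase: z_score} dicts, one per window that has a
    full history frame in front of it.
    """
    history = deque(maxlen=history_size)  # the sliding frame of past counts
    results = []
    for phrases in windows:
        counts = Counter(phrases)
        if len(history) == history_size:
            scores = {}
            for phrase, count in counts.items():
                past = [old[phrase] for old in history]  # Counter gives 0 if absent
                spread = pstdev(past) or 1.0  # avoid dividing by zero
                scores[phrase] = (count - mean(past)) / spread
            results.append(scores)
        history.append(counts)
    return results
```

A phrase that appears at its usual rate scores near zero, while one that suddenly spikes relative to the frame gets a large positive score.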

Changes may emerge as I rework the sampling process to account for larger historic contextualization from previous years of data, so that I can compare seasonal events such as famous weather systems or sports. In the case of my research, the events are sports (specifically football). This is because sports are temporal events on Twitter which happen simultaneously across the USA, giving me lots of clusters to look at. Though politics would be a fun topic, it is not resolved well in my dataset, which dates to 2013.

The pursuit of GeoBurst is eventually to work towards disaster relief, although human behaviour may arguably not be directed to social media in some disasters. The objective is that existing cyberGIS infrastructure may benefit from social media and be used to inform disaster response decision making.

In the meantime, it’s time to get GeoBurst running and looking at the Twitter API.

We use a self-hosted gitlab page for the Applied Groups and other internal CS work. All seniors have an account, which you can access through gitlab.cluster.earlham.edu once you receive an email with your password.

If you haven’t worked with git, it’s good to learn now. Version control through git is ubiquitous in software development, so knowing how to do it before you graduate is valuable. A few tutorials:

We’ll add more. Command-line git is installed on cluster, so you can use that if you don’t want to install it on your local device.

If you have your own GitHub or similar account, please let us know and we can probably work with it.

One technical note you’ll need to know if you’re just getting started: when you log in to create your project, to create a local copy of it that you can update…

git clone <URL> <directory name>

If it worked, you should not be prompted for a password and should see text describing the cloning process. If there is an error, ask someone to help. See tutorials for details of how to do work.

I’ve been trying to figure out which libraries and frameworks are best for developing my Kinect application on Linux, and without testing any of the libraries I’ve found for compatibility so far, the search has been really difficult. This paper provides one possible setup, using:

I’ll look for other papers that have developed Kinect applications and check which of these libraries are absolutely necessary, if at all.

UPDATE: libfreenect (to replace OpenNI) and XKin (to replace NITE) seem to be attractive open-source alternatives.

I spent some time over break reading The Design of Everyday Things, the first work of popular literature on user design I’ve read for this project. I’m about a third of the way through – it’s a quick and illuminating read – and I’ll finish the relevant sections soon. Some of Don Norman’s insights will certainly be included in the final product.

I want to move onto Ralph Caplan and a bit from Tufte this week, then spend next week focusing on the software component.

Basic idea right now:

There will of course be overlap.

Also, our gitlab group is set up and we should all have our individual project repos set up soon.

Couple of things:

As noted in my update of the annotated bibliography post, I had cited one paper incorrectly there. This mistake was corrected and has not been repeated in the literature review.

Topic: Gesture Recognition for Virtual Orchestra Conducting

First, the annotated bibliography, the preliminary version submitted two days ago: craig_annotated_bibliography.

Second, I want to outline my general plan here. After a meeting with Charlie this week and carefully reading some of the more fundamental papers for the topic, I have greater clarity about the project.

My general plan, subject to – potentially major – modification as the class assignments are posted, is as follows (each date is a deadline):

I graduate in December so my project is somewhat more limited in scope than those who will be here the full year, but I anticipate a complete paper and a decent initial version of a piece of software by the end.

UPDATE: The annotated bibliography included here has been updated to correct a citation. Wang 2013, not Wang 2011, is the correct citation for the Facebook study.

Surveys various methods for gesture recognition and audio processing for the purpose of playing music through physical motion.

Topic: Augmented Reality to enhance campus tour experience

Advisor: Xunfei Jiang

I would like to develop an interactive and informative mobile application that will assist the prospies and other outside visitors during their campus visits with the use of Augmented Reality. By using Augmented Reality’s ability to create virtual overlays on mobile screens, I would like to provide the users with rich information about the buildings on campus, the departments and their curriculums, the current on-campus events and many other things that are available to the public. On top of providing information, the application will also help people find their way around campus, offer interactive and fun mini-game activities, and include features like scheduling a short meeting with a professor. Currently I am planning to use fiducial markers and text recognition (OCR ?) for target object recognition. I can also resort to geolocation to explore more possibilities for improving the user experience. However, I am still working on the details and have to consider what is possible and what is not.

Now that I have a better understanding of what I want (and need) to do, here’s the first draft of my new plan:

Using the 3D motion tracking data of the Microsoft Kinect, our goal is to create a virtual conductor application that uses gesture recognition algorithms in order to detect beats and control the tempo and volume of the music. Care must also be taken to minimize the latency of the system from gestural input to audio output so that the system is suitable for live performance. We will be testing various beat detection algorithms proposed by other papers in order to determine which is best in terms of latency. Moreover, with regard to the audio playback itself, we will generate the desired music from appropriate synthesizers, further allowing for the possibility of live musical performance as well as custom instrument creation and music composition.

Advisors: Charlie Peck and Forrest Tobey

I have chosen to do my senior project on data compression and my adviser for the project will be Xunfei Jiang.

Data compression is the concept of compressing data to fit into a smaller space. Lossless compression is when some form of data, like a video file, is compressed into less space with no loss in quality. In lossy compression, a file can be compressed even more, but at the expense of the quality of the data.

There are many different types of data one may want to compress. For instance, we can compress the amount of space it takes to store text using a technique like run-length encoding. In run-length encoding, we count the repetitions of characters, and store the number of times that character repeats itself. If the sequence EEEEE appears in a string, we could instead store it as 5E so that it takes up less space. This would be an example of lossless compression since the original string can still be perfectly reproduced despite requiring less storage space.
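The run-length encoding described above can be sketched in a few lines of Python. This is a minimal illustration, assuming the input text contains no digits (otherwise the counts would be ambiguous); the function names are made up for the example.

```python
from itertools import groupby


def rle_encode(text):
    """Encode runs as count followed by character, e.g. EEEEE -> 5E."""
    if any(ch.isdigit() for ch in text):
        raise ValueError("this simple scheme cannot encode digits")
    return "".join(f"{len(list(run))}{ch}" for ch, run in groupby(text))


def rle_decode(encoded):
    """Invert rle_encode by expanding each count/character pair."""
    decoded = []
    count = ""
    for ch in encoded:
        if ch.isdigit():
            count += ch  # counts may span several digits
        else:
            decoded.append(ch * int(count))
            count = ""
    return "".join(decoded)
```

Decoding the encoded string gives back the original exactly, which is what makes the scheme lossless. Note that a character appearing only once is stored as a count of 1 plus the character, so text with few repeats can end up longer than it started.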

If one were compressing a video file, one might use bit rate compression. In bit rate compression, the number of bits used to represent the color of each pixel is reduced. This will cause the video to require far less storage space, but at the cost of quality, since not as many color options are available. Thus, this would be an example of lossy compression.
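Reducing the bits per pixel (often called reducing the color depth) can be illustrated on a single pixel. The sketch below assumes 8-bit color channels and simply discards the low-order bits; real video codecs are far more sophisticated, and the function name and sample pixel are invented for the example.

```python
def reduce_color_depth(value, bits):
    """Quantize an 8-bit channel value (0-255) down to the given number of bits.

    The low-order bits are discarded, so several original values collapse
    into one and the exact original cannot be recovered.
    """
    if not 1 <= bits <= 8:
        raise ValueError("bits must be between 1 and 8")
    if not 0 <= value <= 255:
        raise ValueError("value must be between 0 and 255")
    shift = 8 - bits
    return (value >> shift) << shift


pixel = (200, 123, 37)  # a made-up RGB pixel
print(tuple(reduce_color_depth(channel, 4) for channel in pixel))
# prints (192, 112, 32)
```

With 4 bits per channel, every value between 192 and 207 maps to 192, so the original 200 is lost for good. That is the sense in which this kind of compression is lossy.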

For my personal project, I will read papers published on various data compression techniques. I will write my paper describing various compression techniques used in computer science. I will also come up with my own method of compressing data, probably for video files. I will write code to demonstrate this compression technique, and I will explain the method and how it works in my paper.

Project Idea (Charlie is the Advisor):

Developing some sort of hardware/software combination that would allow for monitoring of washers and dryers on Earlham’s campus. I would then create an app of some sort so that students could go on to the app and be able to 1) get notifications when a machine is done 2) look to see which machines are available so that they do not have to make the trek to their closest washing machine only to find out that the machines are all taken. Right now, my idea for the hardware is just a device that is plugged into the outlet at the same spot as the machine, kind of like an adaptor, and will broadcast a signal telling whether the machine is running or not. The software will then simply read the broadcast to determine if a machine is running or not.

Deeksha Srinath

Senior Seminar Topic Statement

Advisor: Charlie Peck

My interest in how social media today is influencing our lives shaped my topic. I will be working to design a unified data model for Facebook and Twitter data. I will be doing this in order to be able to query a pool of data that spans multiple social media platforms. This is useful to the scientific process because people interact with different social media sites differently. In designing a unified data model, I will be able to analyse trends across platforms.

Once my data model is established and I have moved my data into it, I am interested in exploring the different scenarios around disordered eating on social media. In a day and age when everyone has access to everyone else’s pictures at the touch of a button, I am curious about what this is doing to body image and body positivity among young women in the US, particularly women of color. Eating disorders in the US are steadily climbing, with thousands of young women losing their lives to disordered eating. Body positivity is also on the rise, with more and more people speaking out about loving their body as is and embracing the beauty in difference.

I am interested in exploring how to mine trends in the data across platforms. I do not have an ample psychological background to understand all the facets to this part of my project. I will be working with the Psychology department in order to better understand what to look for and how to query my data usefully once it is in a unified format.

For my Senior Research, my topic will be a data mining project using data collected from Twitter. Twitter’s API offers 1% of a spatial bandwidth (in my case, the continental U.S.A.) for users to collect. This data has been collected for over 3 years, and represents well over one billion tweets. Of these, a significant percentage of tweets contain at least one hashtag, which is one kind of data I will be looking at. The other datatype I have an interest in is geo-tags, which are optional GPS coordinates that users may choose to include. Using machine learning algorithms, I hope to identify regular hashtags, in order to classify different kinds of signals based on hashtag frequency. The purpose of this is to see if I can predict hashtag occurrence, or whether hashtags are too noisy to classify or group into reliable frequencies.

My goal is to then study the noise, and to give that noise a geo-spatial context in which to understand the events which contributed to that noise.

Here’s a simple example:

Given that the State of Indiana tests tornado sirens on the first Tuesday of each month, it is likely that hashtags similar to #tornado or #siren appear in greater numbers on the same days as the tests. This is a regular signal which could be reduced to a variability of ±6 hours. This signal can be ignored. However, should a tornado strike on a different day, the sirens will go off, and #tornado or #siren might appear on an irregular day. The siren creates a spatial event which only affects the region which hears it, which might distinguish it from the more regular signals.
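Filtering out the regular signal in this example can be sketched as follows. This works at whole-day granularity and ignores the hour-level variability mentioned above; the function names are made up for illustration and this is not the machine learning approach the project will use.

```python
from datetime import date


def is_first_tuesday(day):
    """True when day is the first Tuesday of its month."""
    return day.weekday() == 1 and day.day <= 7  # Monday is 0, so Tuesday is 1


def irregular_days(days_with_hashtag):
    """Keep only the days that do not coincide with a scheduled siren test."""
    return [day for day in days_with_hashtag if not is_first_tuesday(day)]
```

Given a list of days on which #siren spiked, `irregular_days` would drop the scheduled test days and leave the ones worth a closer look.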

At a larger scale, looking at the noisy hashtags might give insights into real time, less predictable events. This can help de-obfuscate growing stories or events in real time, allowing us to find the meaningful information which hides under layers of signals.

I will be doing this research with David Barbella (Dave). Dave and I will be working with resources hosted by NCSA, including the CyberGIS Supercomputer ROGER (an XSEDE resource, for others that are interested).

. . ., but it may be revised again soon.

Our goal is to make a 3D rhythm game that would, among other possible applications, teach players the gestural motions of an orchestral conductor and act as a teacher for conducting music. The basic gameplay is that at certain points in the music, the game will show where the wand needs to be placed in 3D space and calculate score based on the distance between the intended position and the actual position of the wand. The gameplay would be comparable to the free game osu!, except no other inputs (e.g. mouse buttons) are required to play the game.
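Scoring by distance could look something like the sketch below. The linear falloff, the point scale and the tolerance value are all assumptions picked for illustration; the actual scoring rule has not been decided.

```python
import math


def wand_error(target, actual):
    """Euclidean distance between the intended and actual 3D wand positions."""
    return math.dist(target, actual)


def beat_score(target, actual, max_points=100, tolerance=0.5):
    """Award full points for a perfect hit, falling linearly to zero at tolerance."""
    error = wand_error(target, actual)
    return max(0.0, max_points * (1 - error / tolerance))
```

A wand exactly on target earns the full 100 points, one that is half the tolerance away earns 50, and anything beyond the tolerance earns nothing.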

The project will consist of both software and hardware components, namely the game itself and a controller made specifically for said game, respectively. Currently, the game is planned to be built from scratch while including libraries such as OpenGL for a graphical interface and PortAudio for interacting with audio. Meanwhile, the required hardware may include just two infrared cameras/sensors as well as one infrared emitter on the tip of a wand for the cameras to detect. The reason for using infrared is to minimize any background interference that may occur when tracking a specific object as opposed to tracking by color.

Topic: Software Interfaces and Human Behavior

Adviser: Charlie Peck

While this requires some additional refinement, I’ve settled on the general topic and hope to incorporate some of my interests from the other topics along the way.

I will study how interfaces affect interactions between humans and computers. There is a rich history in this area, both in academia and in history/current events. Charlie recommended the Apple design guidelines, an outstanding trove of insights about why components of a software or OS interface should be designed in a particular way. From my own research I see that human-computer interaction (HCI) contributes to choices about everything from Facebook privacy to nuclear meltdowns.

In a potential paper, I would introduce the history of some high-profile HCI choices before zooming in on a few particular factors (to be determined) for more careful analysis and software design. This is a new area of study to me, so which factors I choose in particular will be determined upon completion of further research.

For the software project, I will approach this from the perspective of optimizing the response time for a given interaction. I intend to create a simple application, likely web-based to make scale feasible, with two simple interfaces and a series of prescribed interactions to be done in a given order. I have considered using a few of our local datasets: Iceland data, 911 emergency call data, transportation data, and a few public datasets on key topics. My intention with the software is to focus on the HCI components, so my preference is to use the data environment I am already familiar with as the backend for the project.

Since the major concern with my and most projects is getting directly to the CS, I intend to focus in particular on these subdomains:

In addition, this project draws on insights from several topics in the social sciences – behavioral economics, psychology, business – but I consider these topics as launch sites rather than journeys or landing sites.

Intelligent Personal Assistant for Medicine

Research Supervisor: Dave Barbella

I want to build software (potentially a mobile application) that acts as an intelligent personal assistant for medical purposes. The inspiration comes from modern programs like Siri, but instead of being general purpose, I want it to have a narrower focus (i.e. medicine). While I am still working on the details, I envision that you can talk to the app about various things such as diseases, medicines, hospitals and so on. I want the communication between the user and the app/program to be as human-like as possible. The app will also do other things like remind you to take your medicine, tell you if your physical condition matches the symptoms of some disease, tell you when it’s time to go for a regular check-up and so on. I anticipate integrating other 3rd party web services to make some of these functionalities possible. I am also expecting to go through the works of CALO (Cognitive Assistant that Learns and Organizes) a lot, among other resources.

There will be various aspects of computer science (or Artificial Intelligence specifically) that will be at the heart of this project such as:

While these are all new fields of study for me, I am excited to learn more about these and apply these while conducting my research/project.

With this project, I would examine how a set of nations (a subset of Scandinavian nations) that are today relatively homogeneous in terms of race and economic capacity have vastly differing attitudes and policies around immigration and integration of immigrants. This interest developed as I was reading about Iceland’s policies around immigration before visiting there, and being struck by its vastly open immigration policy. Part of the reason this was so striking to me was its proximity to nations that, in comparison, are very closed to immigration. I have yet to find the serious Computer Science in this project, but I am hoping that in learning more about the question I am trying to ask, I am helping myself find the Computer Science tools I could use to answer it.

2. Analyzing Twitter data to study emotional health as tied to disordered eating

Social media is in our homes, and in our kitchens. This project would be a foray into studying Twitter data about eating preferences. With information about healthy eating at everyone’s fingertips, it’s easy to get pulled into the 1234 fad diets that are popular on the interwebs on any given day. Through this project, I would study how patterns in the popularity of fad diets affect dietary preferences as projected on Twitter. Disordered eating is on the rise in the US, as is veganism. The question I will be trying to ask in this project is whether so-called lifestyle changes (such as switching to a vegan lifestyle) have become the ad hoc way of normalizing disordered eating, and whether this phenomenon is discoverable through Twitter data.

I’m primarily interested in human-computer interaction (HCI) and, extending the notion further, how the principles of computer science, when applied through software or social networks, produce changes in human behavior.

In general I’m drawing my thoughts from behavioral economics, network theory, research into user experience of software, and the general principles of software engineering and structuring information.

My three ideas on that general theme follow. Each contains a paragraph about the topic, a few areas of computer science that potentially relate to it, specific examples I’ve encountered, and some project possibilities. Nothing in the descriptions is final, comprehensive, or definitive, so change is not only possible but expected. It’s also not clear I have the CS in every one of these ideas, but they’re moving in the right direction. The list is unordered.

We know that small changes in an environment can cause dramatic changes in behavior. In the social sciences, research has shown that switching employee retirement accounts from opt-in to opt-out, for example, can dramatically increase the percentage of employees who enroll in the accounts. Application and web developers must always consider how their design decisions, from the surface layer to the data structure layer, impact the human experience of interacting with the application and its information.

Computer science topics: HCI, Application/Web Design, Software Development, UI/UX

Potential examples: nudge literature in behavioral economics, campaign web design to encourage donations (Obama and HRC in particular, NPR story), user experience elements, Apple design guidelines

Project possibilities: Test two app designs (a la A/B testing) to determine how behavior changes; website designed to demonstrate the principles in various ways in the service of some simple task (to-do list, search, etc.)

Group behavior on social media and the filter bubble: Social media wants to keep you onsite and, if you move offsite, to do it by way of their site. Therefore they want you to stay, and in turn they give you what you want (think Facebook’s News Feed algorithm). The problem is that this breeds the dreaded filter bubble, in which epistemic closure takes over and only things you like or agree with ever have to come across your timeline. Is it possible to design systems to reduce this problem and therefore improve public understanding and social behavior?

Computer science topics: HCI, Network Theory, Information Architecture, Interface Design, UI/UX

Potential examples: Nextdoor and racial profiling, Twitter harassment and quality filtering algorithm, Facebook News Feed

Project possibilities: website or mobile app analyzing data mined from Twitter or Instagram

Big data is confusing, and we use visualizations to reduce the confusion and potentially communicate a message. Some vis forms are better – more comprehensible, less misleading, nicer to look at – than others. Does this matter for how humans experience the information? This emphasizes explanatory as opposed to exploratory data science.

Computer science topics: HCI, Application/Web Design, Scientific Computing, Databases

Potential examples: Dozens of vis/comp modeling websites exist now, so NYT Upshot/538/Our World In Data all potentially valuable; Tufte and other researchers in the field have covered this extensively

Project possibilities: Data visualizations of various kinds, some interactive and some not (may build on some existing projects at ECCS for this)

UPDATED IDEA: Object Recognition and Tracking for Augmented Reality

While exploring more about Augmented Reality and AR-based applications currently circulating on the internet, I have seen limitations of Augmented Reality, especially in object recognition and tracking. I would like to see the current status of the capability of object recognition and tracking technologies available and how we can improve them. If possible, I want to push further so that markerless augmented reality can be less complex and frustrating and we do not have to rely heavily on markers anymore.

Idea 1: Educational/Fun Augmented Reality Application

Seeing and interacting with digital creations of your favorite characters in reality would sound like an unrealistic fantasy but, thanks to the rapidly advancing realm of technology, we can bring our imaginations into reality now. I would like to make an educational yet fun application targeted to kids but the idea is not limited to only kids or education. The application allows the user to explore his/her surroundings and interact with the objects by using any devices that can do AR. The application should recognize the object or a part of an object and create an overlay which the user can interact with.

Idea 2: Facial/Image Recognition (Computer Vision) and Algorithms Behind it

While neurologists and other scientists debate whether the ability to recognize is innate, facial/image recognition is an easy task for humans. It is so easy that we are not even aware that we can function only because we can recognize things. However, it is still difficult for computers to perform this task. I think this would be the challenging part of perfecting my Idea 1, and I would like to spend time researching how we make computers recognize faces or objects under different circumstances.

Idea 3: Schedule Planner

At the beginning of every semester, the supervisor of libraries has to make a work schedule that fits around the student workers’ timetables, and it is a very tedious process. I want to come up with software, or at least an algorithm, that would take in the students’ varied timetables and build a schedule that makes everyone happy.
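As a starting point, the scheduling step could be sketched as a simple greedy assignment: each shift goes to an available student who currently has the fewest shifts. Everything here (the function name, the shift labels, the student names, and the greedy rule itself) is a hypothetical illustration; a real planner would likely need constraints such as maximum hours or preferences.

```python
def build_schedule(shifts, availability):
    """Greedily assign each shift to the least-loaded available student.

    shifts: ordered list of shift labels (hypothetical format).
    availability: dict mapping student name -> set of shifts they can work.
    Returns a dict mapping shift -> student name, or None if nobody is free.
    """
    load = {student: 0 for student in availability}
    schedule = {}
    for shift in shifts:
        candidates = [s for s in availability if shift in availability[s]]
        if not candidates:
            schedule[shift] = None  # leave the gap for the supervisor to fill
            continue
        # Fewest shifts so far wins; the name breaks ties deterministically.
        chosen = min(candidates, key=lambda s: (load[s], s))
        schedule[shift] = chosen
        load[chosen] += 1
    return schedule

# Made-up example data.
shifts = ["Mon 9", "Mon 10", "Tue 9"]
availability = {
    "Ana": {"Mon 9", "Mon 10"},
    "Ben": {"Mon 10", "Tue 9"},
}
print(build_schedule(shifts, availability))
```

A greedy pass like this is fast but not guaranteed to find the fairest schedule, which is part of what would make the problem worth researching.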

1.) Data Compression

I am interested in how data is represented as MPEG, JPEG, and other file formats, and how this data can be used to display an image or video. In particular, I am interested in the compression algorithms used to store this data in a smaller space, with little or no loss in the quality of the information. I would explore various lossless and lossy compression algorithms in the paper, and explain their strengths and weaknesses. I could then create some code to illustrate some compression algorithms and how they work.
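As an example of the kind of illustrative code I have in mind, here is a minimal sketch of run-length encoding, one of the simplest lossless schemes. It is not how JPEG or MPEG work as a whole; it only shows the basic idea of replacing repeated values with a count. The function names are my own.

```python
def rle_encode(data: bytes) -> list[tuple[int, int]]:
    """Collapse runs of identical bytes into (byte_value, run_length) pairs."""
    runs = []
    for byte in data:
        if runs and runs[-1][0] == byte:
            # Same value as the previous byte: extend the current run.
            runs[-1] = (byte, runs[-1][1] + 1)
        else:
            # New value: start a new run of length 1.
            runs.append((byte, 1))
    return runs

def rle_decode(runs: list[tuple[int, int]]) -> bytes:
    """Rebuild the original bytes exactly from the (value, length) pairs."""
    return b"".join(bytes([byte]) * count for byte, count in runs)

original = b"aaaaaaaabbbcccccc"
encoded = rle_encode(original)
assert rle_decode(encoded) == original  # lossless: nothing is lost
print(encoded)
```

Run-length encoding helps only when the data contains long runs; on data without repetition the encoded form can be larger than the input, which is one of the trade-offs the paper would discuss.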

2.) 3-D Passwords

While passwords are crucial to how we protect our information, they are also tedious to remember. One interesting alternative is 3-D passwords. The idea is that the user is placed in some sort of 3-D environment with various objects that can be interacted with. The user could enter a password by interacting with various objects in the environment in a specific sequence. For example, a user might move a chair, head to a thermostat, and then change it to a specific temperature as a way of entering a password. This would be an appealing idea to explore in a project as well.
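One possible way to store such a password is to treat the ordered sequence of interactions like an ordinary secret and keep only a salted hash of it. The sketch below assumes each interaction can be reduced to an (object, action) pair of strings; that representation, the function names, and the iteration count are all assumptions made for illustration.

```python
import hashlib
import hmac
import os

ITERATIONS = 100_000  # assumed work factor for the key-derivation function

def encode_sequence(actions):
    """Serialize an ordered list of (object, action) pairs into bytes."""
    # Control characters are used as separators so names cannot collide.
    return "\x1f".join(f"{obj}\x1e{act}" for obj, act in actions).encode("utf-8")

def enroll(actions):
    """Return (salt, digest) to store for a new 3-D password."""
    salt = os.urandom(16)
    digest = hashlib.pbkdf2_hmac("sha256", encode_sequence(actions), salt, ITERATIONS)
    return salt, digest

def verify(actions, salt, digest):
    """Check an attempted sequence against the stored salt and digest."""
    candidate = hashlib.pbkdf2_hmac("sha256", encode_sequence(actions), salt, ITERATIONS)
    return hmac.compare_digest(candidate, digest)

# Hypothetical sequence based on the chair and thermostat example.
secret = [("chair", "move"), ("thermostat", "set:68")]
salt, digest = enroll(secret)
print(verify(secret, salt, digest))
print(verify(list(reversed(secret)), salt, digest))
```

Because the order of the pairs is part of the serialized bytes, performing the same interactions in a different order produces a different hash and is rejected.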

3.) Soft Computing

I was reading about soft computing and the idea seemed interesting and different from other ideas I have encountered so far in computer science. I would be interested in exploring it further, but don’t have a specific idea yet.

Topic: bioinformatics to track one’s health:

The goal of this research is to use one’s personal health data to track and display a timeline of one’s health progress. First, by gathering relevant data from various inputs, the software will organize and store all the data. Second, it will display health records for easy access, along with reminders for prescription refills, appointments, and when to take medicine. The last part is to incorporate an algorithm that tracks one’s health record to create a timeline or data sheet of one’s health for personal and medical use. The Computer Science aspect of this research will include a lot of machine learning, such as input organization and variance tracking.

Facial Recognition

Facial recognition is something that we as human beings have been doing since the beginning of time. We have also become masters at identifying a person’s mood or emotion simply by looking at their facial expression. Today, we have harnessed the powers of artificial intelligence and are now able to apply it to facial recognition software. This software usually relies on “faceprints,” which are collections of data that describe certain features and dimensions of the face (length/width of nose, depth of eye socket, etc.). With this software we are able not only to scan an image for a face, but also to determine the expression or emotion that the face is portraying. This is where I want to focus my research. I want to study facial recognition and how the software is able to detect faces and their expressions to determine human emotions.

Idea 1 (Intelligent Personal Assistant for Medicine):

I want to build software (potentially a mobile application) that acts as an intelligent personal assistant for medical purposes. The inspiration comes from modern programs like Siri, but instead of being general purpose, I want it to have a narrower focus (i.e. medicine). While I am still working on the details, I envision that you can talk to the app about various things such as diseases, medicines, hospitals and so on. I want the communication style to be as human-like as possible. The app will also do other things like remind you to take your medicine, tell you when it’s time to go for a regular check-up and so on. I anticipate integrating 3rd party web services to make some of these functionalities possible. I also expect to go through the work on CALO (Cognitive Assistant that Learns and Organizes) among other resources.

Idea 2 (Optical Character Recognition):

OCR, the process of converting images of typed, handwritten or printed text into machine-encoded text, has always been something I have been interested in and curious about. I want to research how this process is done and hopefully recreate the technology. For a more personalized experience, I will try to have the app learn the particular user’s handwriting style and then hopefully achieve a higher degree of recognition accuracy.

Idea 3 (Dissecting/Adding functionality to a machine):

While this idea seems increasingly less likely, I thought I would make a note of this regardless. Having had some interest in working with hardware/circuits, I wanted to open up a machine, learn more about the internal components/circuits. Along with that, I also wanted to add some other piece of hardware and add functionality to the machine.

Some of this is copied and pasted from the email I sent last spring, but anyway, here are my two project ideas:

Possible research: Spatial computational resource allocation

see also: CyberGIS’16 panel

Data structures are fundamental to the efficiency of algorithms pertaining to transfer and storage, computation, and visualization. Parallel and distributed computing comes in many implementations whose purposes vary greatly. Using centralized computing networks, new resources are available to more institutions, however the bridge between onsite spatial data collection and offsite computing is uncertain, even in terms of data structuring. The changes in resolution and computational needs have brought bitmap and vector closer than ever, however the software resources rely on centralized resources, for which there are few designed for LiDAR terrain mapping.

Research topics:

1: Study data structures to store spatial information. Do aspects of existing structures resolve any problems faced by users?

2: Study whether spatial data compression could be implemented to improve computability and

3: Study methods for data browsing and distributed storage solutions. Big data systems may limit the filesizes remote end users can personally compute with, however some data must be represented by the remote end user.

Panel: Future Directions of CyberGIS and Geospatial Data Science (Chair: Shaowen Wang)

Panelists: Budhendra Bhaduri, Mike Goodchild, Daniel S. Katz, Mansour Raad, Tapani Sarjakoski, and Judy —

Selected topics by Ben Liebersohn

Michael:

Judy:

Paul:

“As an outsider, when I see what’s going on in this community I ask: what unique problems is this community facing versus common problems? I presented networking and cloud stuff you may not have seen before. The application can drive the network and the compute resources. Flexible and scalable networks. Maybe both sides can help one another.”

<!–Idea 1:

Developing some sort of hardware/software combination that would allow for monitoring of washers and dryers on Earlham’s campus. I would then create an app of some sort so that students could go on the app and 1) get notifications when a machine is done and 2) see which machines are available, so that they do not have to make the trek to their closest washing machine only to find out that the machines are all taken. Right now, my idea for the hardware is just a device that is plugged into the outlet at the same spot as the machine, kind of like an adaptor, and broadcasts a signal telling whether the machine is running or not. The software will then simply read the broadcast to determine if a machine is running or not.

–>

Idea 2:

Developing a piece of software that is able to perform population estimation by scraping information from popular sites. I would most likely scrape Instagram posts with tags, Twitter posts that mention a location, Facebook photos with location tags, and also, if possible, recent Google searches regarding the location. For instance, if 1000 people in the last day had searched Google for food locations on Miami Beach, it is a good predictor that a high proportion of those 1000 people are visiting Miami Beach in the near future. A person would then use my piece of software to, say, search how busy Miami Beach is on that day and get a prediction of how busy it will be in the near future. This approach would require a lot of probability in the calculations.

Idea 3:

This idea would be the same concept as idea 2, but instead of scraping information, I would obtain the location information (or create fake data, as Charlie suggested) and process that data to provide a more accurate depiction of the population at a location at any given moment. The predictive capability would then be based on past data that I had collected.